Note

This tutorial describes a prototype feature. Prototype features are typically not available as part of binary distributions like PyPI or Conda, except sometimes behind run-time flags, and are at an early stage for feedback and testing.

Autoloading Out-of-Tree Extension

Created On: Oct 10, 2024 | Last Updated: Oct 10, 2024 | Last Verified: Oct 10, 2024

Author: Yuanhao Ji

The extension autoloading mechanism enables PyTorch to automatically load out-of-tree backend extensions without explicit import statements. Users can follow the familiar PyTorch device programming model without explicitly loading or importing device-specific extensions, and existing PyTorch applications can run on out-of-tree devices with zero code changes. For further details, refer to the [RFC] Autoload Device Extension.

What you will learn

How to use out-of-tree extension autoloading in PyTorch

Review examples with Intel Gaudi HPU, Huawei Ascend NPU

Prerequisites

PyTorch v2.5 or later

Note

This feature is enabled by default. To disable it, set the following environment variable:

export TORCH_DEVICE_BACKEND_AUTOLOAD=0

If you get an error such as “Failed to load the backend extension”, the error originates in the extension and is independent of PyTorch. Disable this feature and ask the out-of-tree extension maintainer for help.

How to apply this mechanism to out-of-tree extensions?

For instance, suppose you have a backend named foo and a corresponding package named torch_foo. Ensure that your package is compatible with PyTorch 2.5 or later and includes the following snippet in its __init__.py file:

    def _autoload():
        print("Check things are working with `torch.foo.is_available()`.")

Then, the only thing you need to do is define an entry point within your Python package:

    from setuptools import setup

    setup(
        name="torch_foo",
        version="1.0",
        entry_points={
            # Group that PyTorch scans for backend extensions
            "torch.backends": [
                "torch_foo = torch_foo:_autoload",
            ],
        },
    )

Now the torch_foo module is imported automatically when you run import torch, with no need to add import torch_foo:

    >>> import torch
    Check things are working with `torch.foo.is_available()`.
    >>> torch.foo.is_available()
    True
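If the message does not appear, one way to check whether the entry point was registered at install time is to query the installed package metadata directly. The following is a minimal sketch using the standard library's `importlib.metadata`; the helper name `list_backend_entry_points` is hypothetical and not part of PyTorch.

```python
import sys
from importlib.metadata import entry_points


def list_backend_entry_points(group="torch.backends"):
    """Return (name, target) pairs for every installed entry point in `group`."""
    if sys.version_info >= (3, 10):
        # Python 3.10+ supports selecting a group by keyword.
        found = entry_points(group=group)
    else:
        # Older versions return a dict that maps group names to entry points.
        found = entry_points().get(group, ())
    return [(ep.name, ep.value) for ep in found]


if __name__ == "__main__":
    for name, target in list_backend_entry_points():
        print(f"{name} -> {target}")
```

With the torch_foo package from this section installed, the list would be expected to contain a pair whose target is `torch_foo:_autoload`. An empty list suggests the package was installed without its entry point metadata.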

In some cases, you might encounter issues with circular imports. The examples below demonstrate how you can address them.

Examples

The following examples use Intel Gaudi HPU and Huawei Ascend NPU to show how to integrate your out-of-tree extension with PyTorch using the autoloading feature.

habana_frameworks.torch is a Python package that enables users to run PyTorch programs on Intel Gaudi by using the PyTorch HPU device key.

Because habana_frameworks.torch is a submodule of habana_frameworks, we add an entry point that refers to __autoload() in habana_frameworks/setup.py:

     setup(
         name="habana_frameworks",
         version="2.5",
    +    entry_points={
    +        'torch.backends': [
    +            "device_backend = habana_frameworks:__autoload",
    +        ],
    +    }
     )

In habana_frameworks/__init__.py, we use a global variable to track whether our module has been loaded:

    import os

    is_loaded = False  # A member variable of the habana_frameworks module that tracks whether our module has been imported

    def __autoload():
        # This is an entry point for the PyTorch autoload mechanism.
        # If the following condition is true, our backend has already been loaded, either explicitly
        # or by the autoload mechanism, and importing it again should be skipped to avoid circular imports.
        global is_loaded
        if is_loaded:
            return
        import habana_frameworks.torch

In habana_frameworks/torch/__init__.py, we prevent circular imports by updating the state of the global variable:

    import os

    # This is to prevent the torch autoload mechanism from causing circular imports
    import habana_frameworks

    habana_frameworks.is_loaded = True

torch_npu enables users to run PyTorch programs on Huawei Ascend NPU. It leverages the PrivateUse1 device key and exposes the device name as npu to end users.

We define an entry point in torch_npu/setup.py:

     setup(
         name="torch_npu",
         version="2.5",
    +    entry_points={
    +        'torch.backends': [
    +            'torch_npu = torch_npu:_autoload',
    +        ],
    +    }
     )

Unlike habana_frameworks, torch_npu uses the environment variable TORCH_DEVICE_BACKEND_AUTOLOAD to control the autoloading process. For example, we set it to 0 before importing torch, which disables autoloading and prevents circular imports:

    import os

    # Disable autoloading before running 'import torch'
    os.environ['TORCH_DEVICE_BACKEND_AUTOLOAD'] = '0'

    import torch
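Setting the variable through `os.environ` changes it for the rest of the process, and child processes inherit it. If that is undesirable, one option is to scope the change with a context manager that restores the previous value afterwards. This is a hedged sketch of that idea, not something torch_npu is stated to do; the helper name `autoload_disabled` is hypothetical.

```python
import os
from contextlib import contextmanager

ENV_VAR = "TORCH_DEVICE_BACKEND_AUTOLOAD"


@contextmanager
def autoload_disabled():
    """Set the variable to "0" inside the block, then restore the old state."""
    previous = os.environ.get(ENV_VAR)
    os.environ[ENV_VAR] = "0"
    try:
        yield
    finally:
        if previous is None:
            # The variable was unset before, so remove it again.
            os.environ.pop(ENV_VAR, None)
        else:
            os.environ[ENV_VAR] = previous


# Intended usage inside an extension package (requires torch to be installed):
#
#     with autoload_disabled():
#         import torch
```

This only has an effect on the first import of torch in a process, because later imports reuse the already initialized module.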

How it works

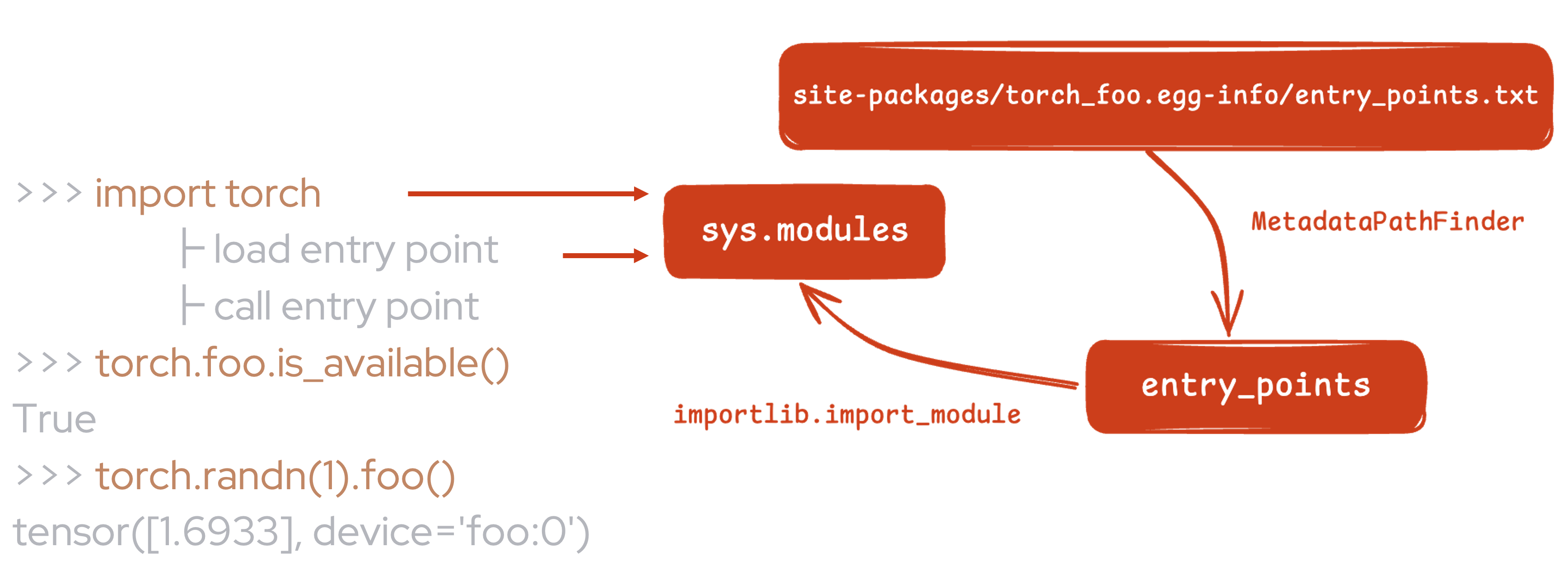

Autoloading is built on Python’s entry points mechanism. In torch/__init__.py, we discover and load all entry points in the torch.backends group that are defined by out-of-tree extensions.

As shown above, after torch_foo is installed, loading the entry point that you have defined imports your Python module, and calling the entry point lets you do any necessary initialization work.

See the implementation in this pull request: [RFC] Add support for device extension autoloading.
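The discover-and-call loop described in this section can be sketched as follows. This is a simplified illustration under stated assumptions, not the code from the pull request: `load_backends` and `FakeEntryPoint` are hypothetical names, the entry points are passed in rather than discovered so that the sketch runs without any extension installed, and only the value 0 is treated as disabling autoload because that is the only value this tutorial documents.

```python
import os

ENV_VAR = "TORCH_DEVICE_BACKEND_AUTOLOAD"


def autoload_enabled(environ=os.environ):
    """Assumption: autoload is on unless the variable is set to "0"."""
    return environ.get(ENV_VAR, "1") != "0"


def load_backends(backend_entry_points, environ=os.environ):
    """Resolve and call each entry point; return the names that were loaded."""
    if not autoload_enabled(environ):
        return []
    loaded = []
    for ep in backend_entry_points:
        try:
            hook = ep.load()  # imports the extension package, returns the callable
            hook()            # runs the extension's own initialization
        except Exception as err:
            raise RuntimeError(
                f"Failed to load the backend extension: {ep.name}"
            ) from err
        loaded.append(ep.name)
    return loaded


class FakeEntryPoint:
    """Hypothetical stand-in for importlib.metadata.EntryPoint."""

    def __init__(self, name, hook):
        self.name = name
        self._hook = hook

    def load(self):
        return self._hook
```

Wrapping failures in a single error that names the extension mirrors the “Failed to load the backend extension” message mentioned earlier, and it makes clear which package's maintainer to contact.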

Conclusion

In this tutorial, we learned about the out-of-tree extension autoloading mechanism in PyTorch, which automatically loads backend extensions and eliminates the need for additional import statements. We also learned how to apply this mechanism to out-of-tree extensions by defining an entry point, how to prevent circular imports, and how Intel Gaudi HPU and Huawei Ascend NPU use the mechanism.