Note

Click here to download the full example code

Online ASR with Emformer RNN-T¶

Author: Jeff Hwang, Moto Hira

This tutorial shows how to use Emformer RNN-T and streaming API to perform online speech recognition.

Note

This tutorial requires FFmpeg libraries and SentencePiece.

Please refer to Optional Dependencies for the detail.

1. Overview¶

Performing online speech recognition is composed of the following steps

Build the inference pipeline Emformer RNN-T is composed of three components: feature extractor, decoder and token processor.

Format the waveform into chunks of expected sizes.

Pass data through the pipeline.

2. Preparation¶

import torch

import torchaudio

print(torch.__version__)

print(torchaudio.__version__)

import IPython

import matplotlib.pyplot as plt

from torchaudio.io import StreamReader

2.6.0.dev20241104

2.5.0.dev20241105

3. Construct the pipeline¶

Pre-trained model weights and related pipeline components are

bundled as torchaudio.pipelines.RNNTBundle.

We use torchaudio.pipelines.EMFORMER_RNNT_BASE_LIBRISPEECH,

which is a Emformer RNN-T model trained on LibriSpeech dataset.

bundle = torchaudio.pipelines.EMFORMER_RNNT_BASE_LIBRISPEECH

feature_extractor = bundle.get_streaming_feature_extractor()

decoder = bundle.get_decoder()

token_processor = bundle.get_token_processor()

0%| | 0.00/3.81k [00:00<?, ?B/s]

100%|##########| 3.81k/3.81k [00:00<00:00, 7.96MB/s]

0%| | 0.00/293M [00:00<?, ?B/s]

7%|7 | 20.9M/293M [00:00<00:01, 218MB/s]

14%|#4 | 41.8M/293M [00:00<00:01, 204MB/s]

34%|###3 | 99.1M/293M [00:00<00:00, 378MB/s]

53%|#####2 | 155M/293M [00:00<00:00, 457MB/s]

72%|#######2 | 212M/293M [00:00<00:00, 507MB/s]

89%|########9 | 261M/293M [00:00<00:00, 450MB/s]

100%|##########| 293M/293M [00:00<00:00, 433MB/s]

/pytorch/audio/src/torchaudio/pipelines/rnnt_pipeline.py:248: FutureWarning: You are using `torch.load` with `weights_only=False` (the current default value), which uses the default pickle module implicitly. It is possible to construct malicious pickle data which will execute arbitrary code during unpickling (See https://github.com/pytorch/pytorch/blob/main/SECURITY.md#untrusted-models for more details). In a future release, the default value for `weights_only` will be flipped to `True`. This limits the functions that could be executed during unpickling. Arbitrary objects will no longer be allowed to be loaded via this mode unless they are explicitly allowlisted by the user via `torch.serialization.add_safe_globals`. We recommend you start setting `weights_only=True` for any use case where you don't have full control of the loaded file. Please open an issue on GitHub for any issues related to this experimental feature.

state_dict = torch.load(path)

0%| | 0.00/295k [00:00<?, ?B/s]

100%|##########| 295k/295k [00:00<00:00, 63.8MB/s]

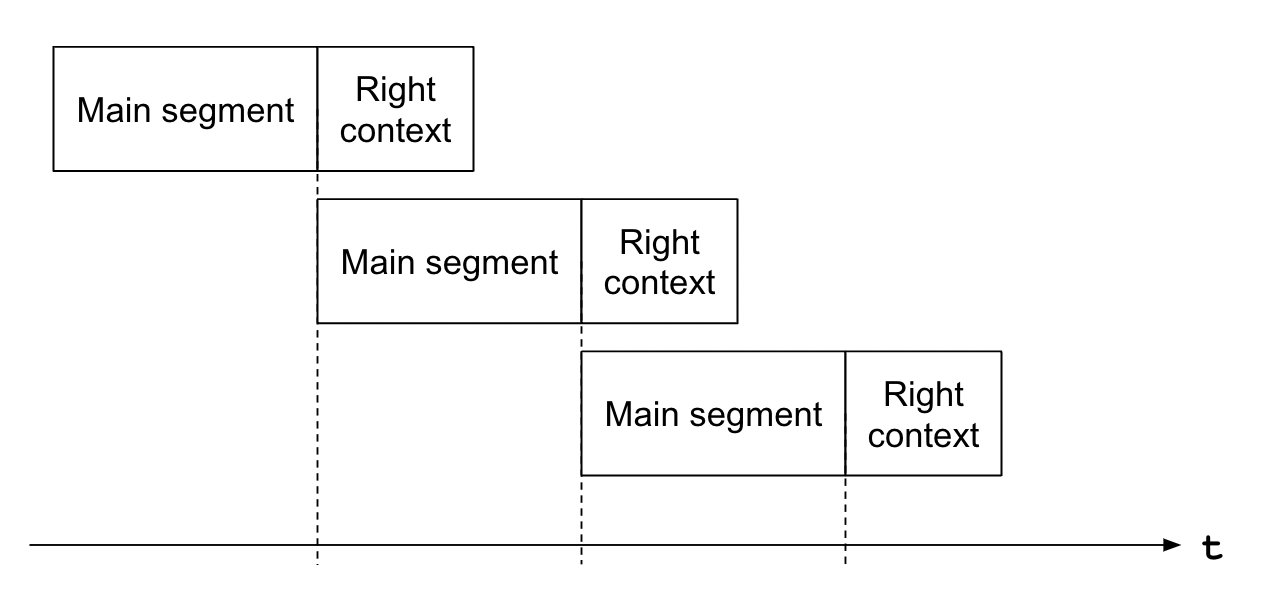

Streaming inference works on input data with overlap. Emformer RNN-T model treats the newest portion of the input data as the “right context” — a preview of future context. In each inference call, the model expects the main segment to start from this right context from the previous inference call. The following figure illustrates this.

The size of main segment and right context, along with the expected sample rate can be retrieved from bundle.

sample_rate = bundle.sample_rate

segment_length = bundle.segment_length * bundle.hop_length

context_length = bundle.right_context_length * bundle.hop_length

print(f"Sample rate: {sample_rate}")

print(f"Main segment: {segment_length} frames ({segment_length / sample_rate} seconds)")

print(f"Right context: {context_length} frames ({context_length / sample_rate} seconds)")

Sample rate: 16000

Main segment: 2560 frames (0.16 seconds)

Right context: 640 frames (0.04 seconds)

4. Configure the audio stream¶

Next, we configure the input audio stream using torchaudio.io.StreamReader.

For the detail of this API, please refer to the StreamReader Basic Usage.

The following audio file was originally published by LibriVox project, and it is in the public domain.

https://librivox.org/great-pirate-stories-by-joseph-lewis-french/

It was re-uploaded for the sake of the tutorial.

src = "https://download.pytorch.org/torchaudio/tutorial-assets/greatpiratestories_00_various.mp3"

streamer = StreamReader(src)

streamer.add_basic_audio_stream(frames_per_chunk=segment_length, sample_rate=bundle.sample_rate)

print(streamer.get_src_stream_info(0))

print(streamer.get_out_stream_info(0))

SourceAudioStream(media_type='audio', codec='mp3', codec_long_name='MP3 (MPEG audio layer 3)', format='fltp', bit_rate=128000, num_frames=0, bits_per_sample=0, metadata={}, sample_rate=44100.0, num_channels=2)

OutputAudioStream(source_index=0, filter_description='aresample=16000,aformat=sample_fmts=fltp', media_type='audio', format='fltp', sample_rate=16000.0, num_channels=2)

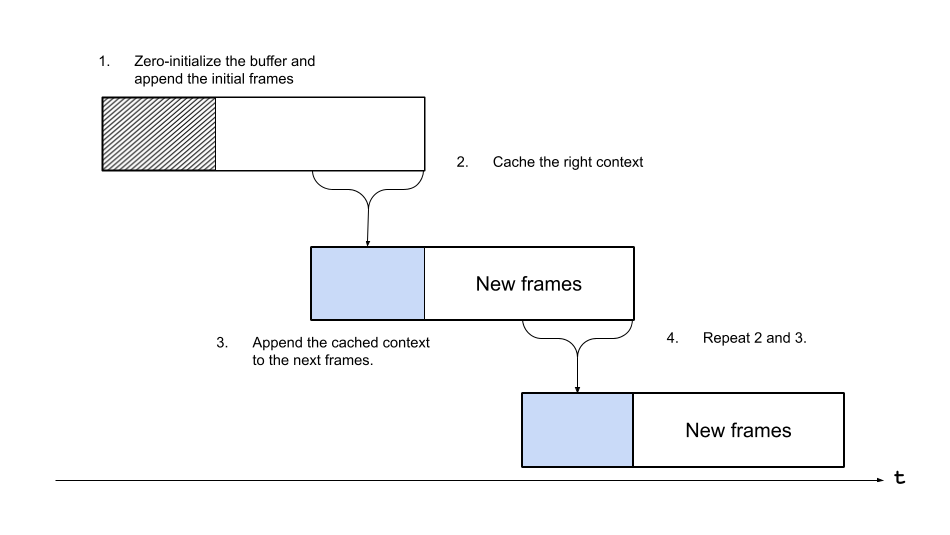

As previously explained, Emformer RNN-T model expects input data with overlaps; however, Streamer iterates the source media without overlap, so we make a helper structure that caches a part of input data from Streamer as right context and then appends it to the next input data from Streamer.

The following figure illustrates this.

class ContextCacher:

"""Cache the end of input data and prepend the next input data with it.

Args:

segment_length (int): The size of main segment.

If the incoming segment is shorter, then the segment is padded.

context_length (int): The size of the context, cached and appended.

"""

def __init__(self, segment_length: int, context_length: int):

self.segment_length = segment_length

self.context_length = context_length

self.context = torch.zeros([context_length])

def __call__(self, chunk: torch.Tensor):

if chunk.size(0) < self.segment_length:

chunk = torch.nn.functional.pad(chunk, (0, self.segment_length - chunk.size(0)))

chunk_with_context = torch.cat((self.context, chunk))

self.context = chunk[-self.context_length :]

return chunk_with_context

5. Run stream inference¶

Finally, we run the recognition.

First, we initialize the stream iterator, context cacher, and state and hypothesis that are used by decoder to carry over the decoding state between inference calls.

cacher = ContextCacher(segment_length, context_length)

state, hypothesis = None, None

Next we, run the inference.

For the sake of better display, we create a helper function which processes the source stream up to the given times and call it repeatedly.

stream_iterator = streamer.stream()

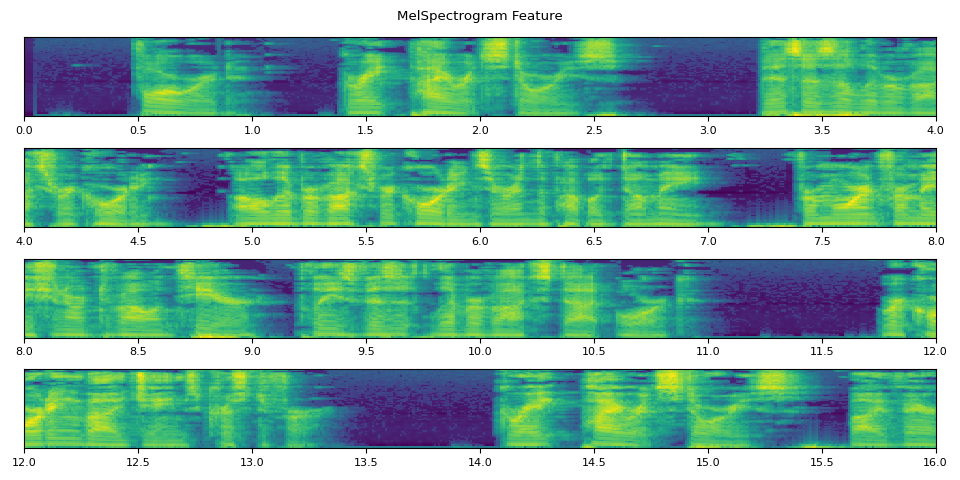

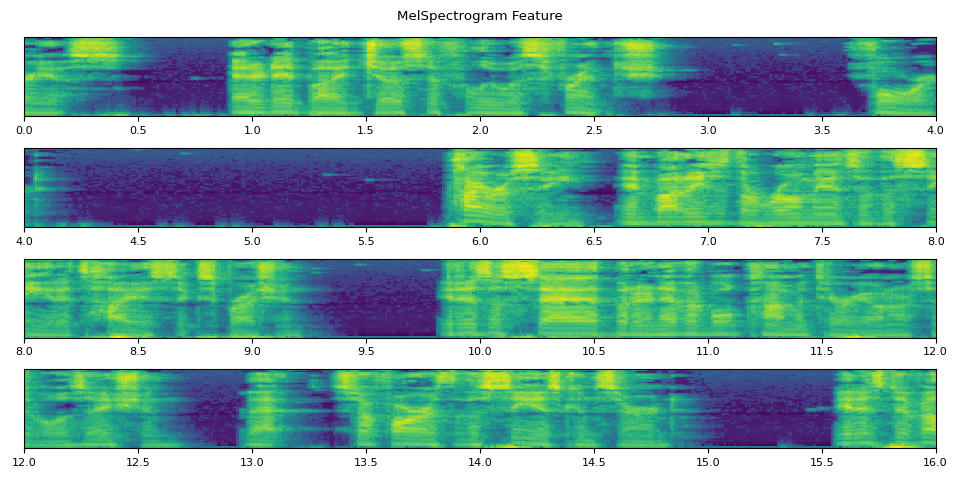

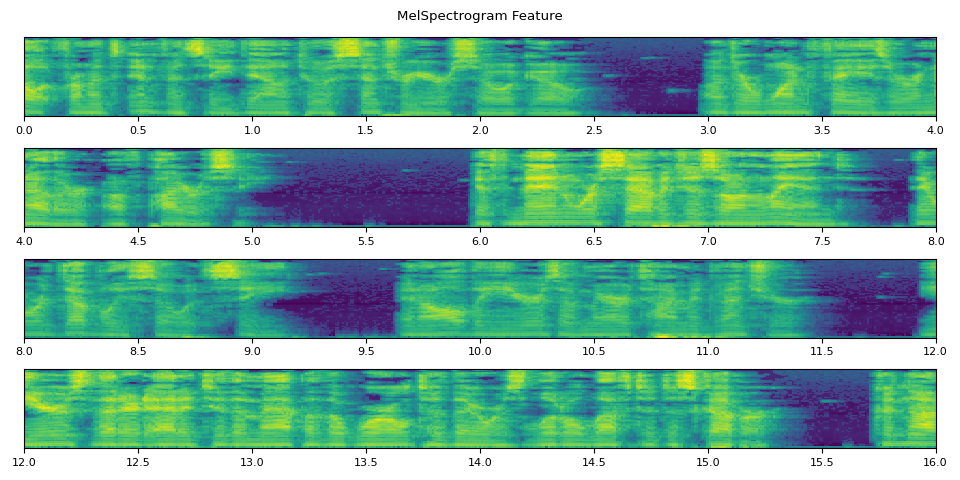

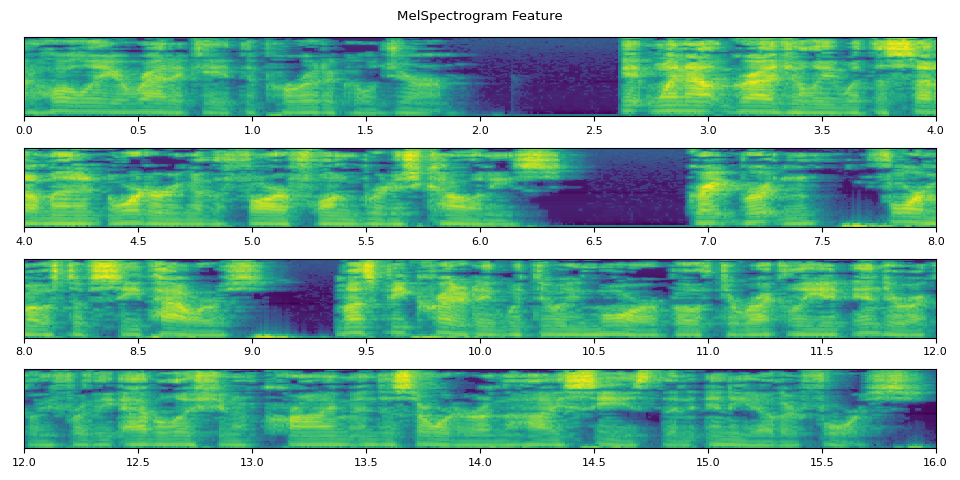

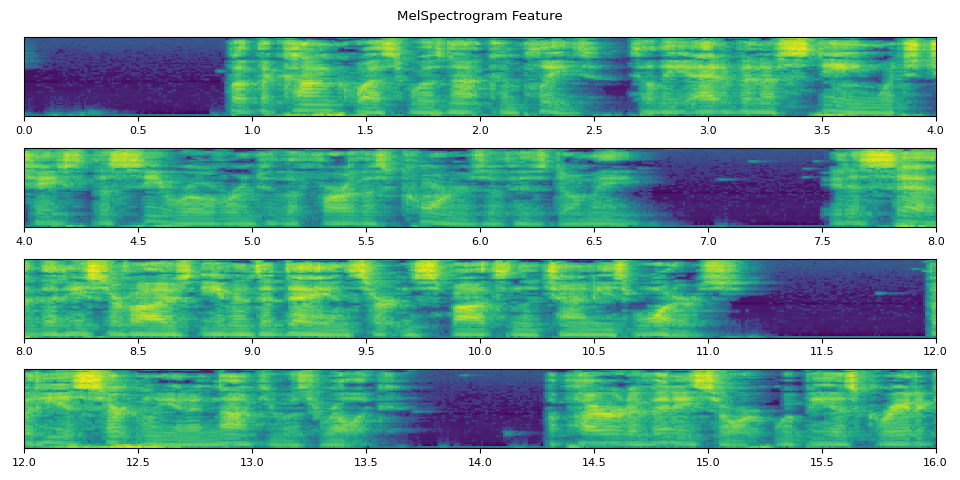

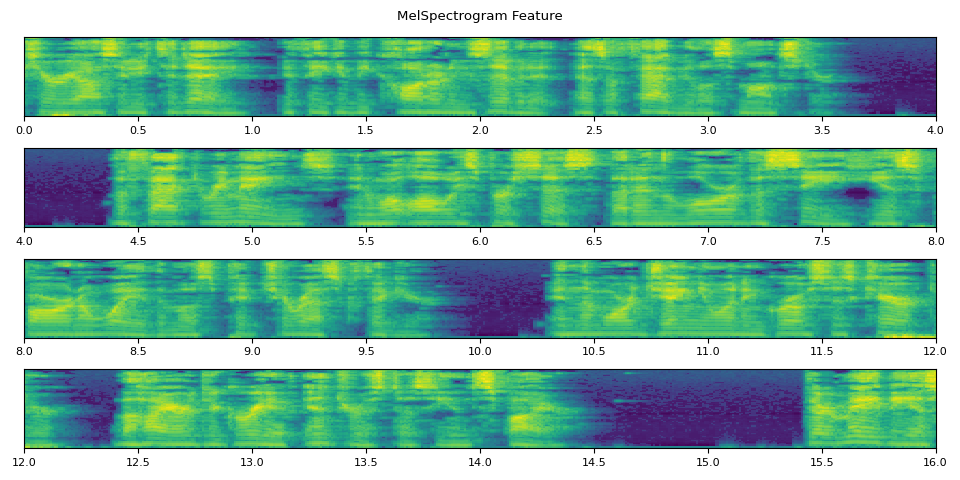

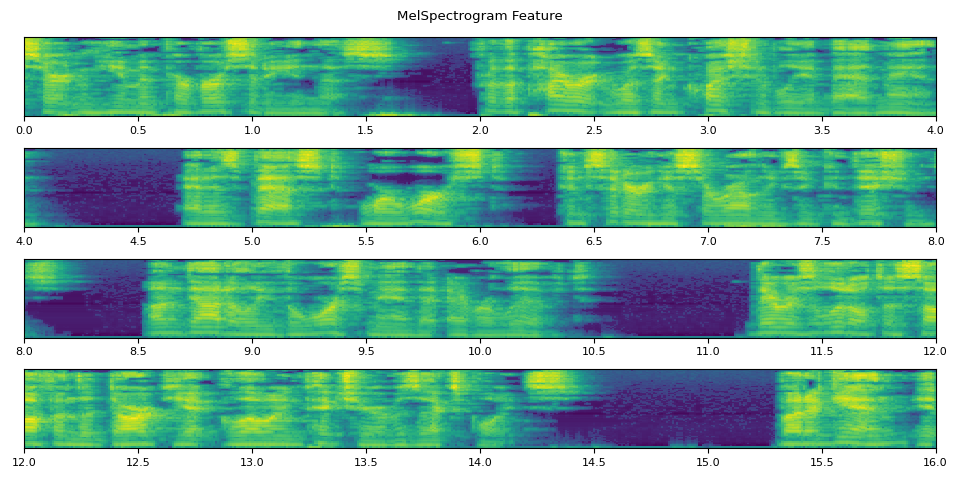

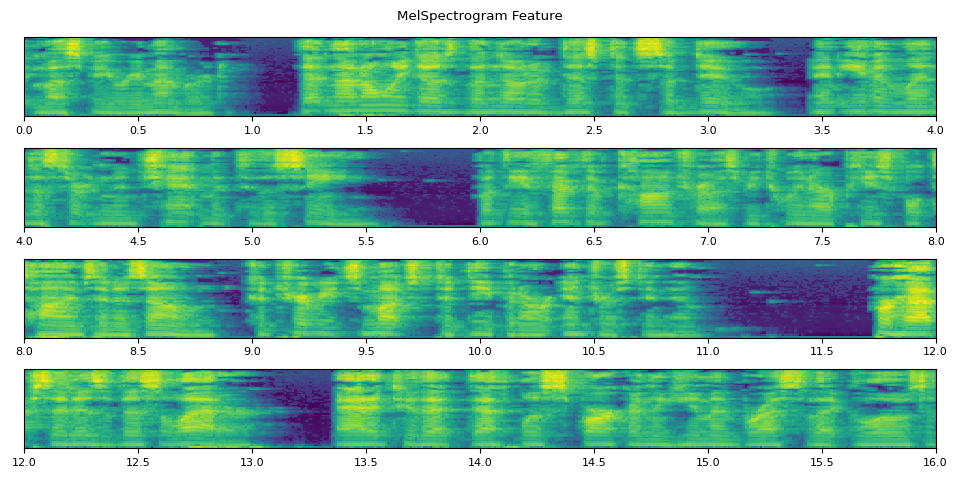

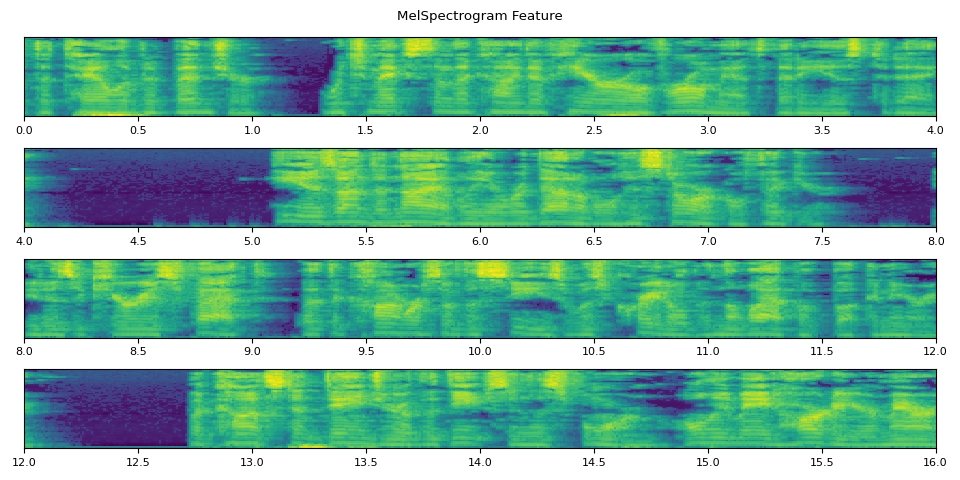

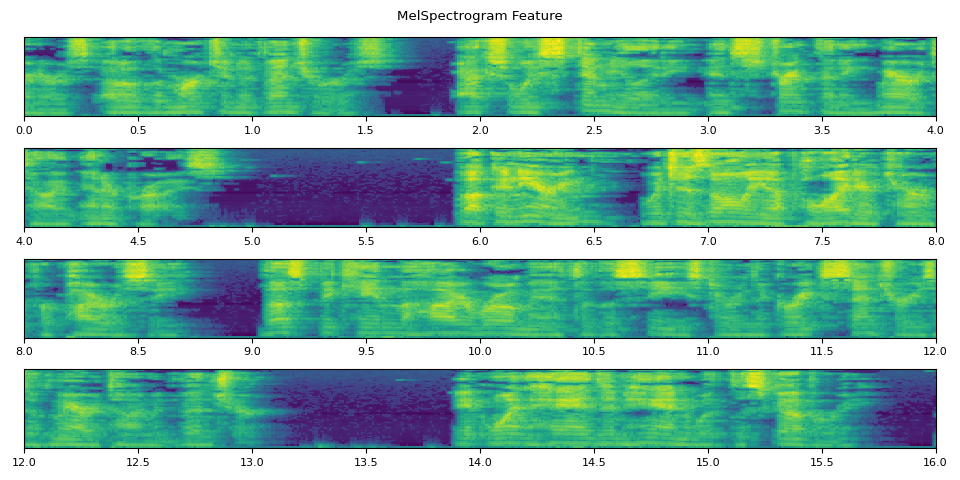

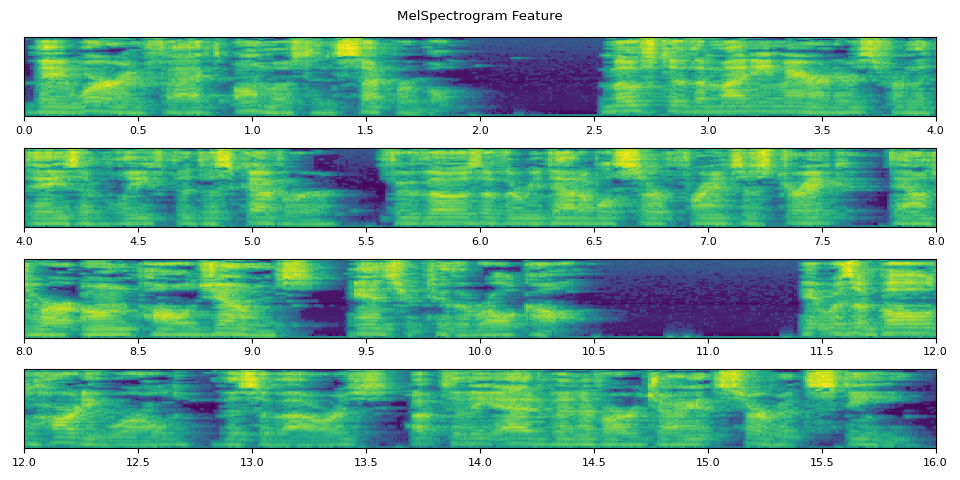

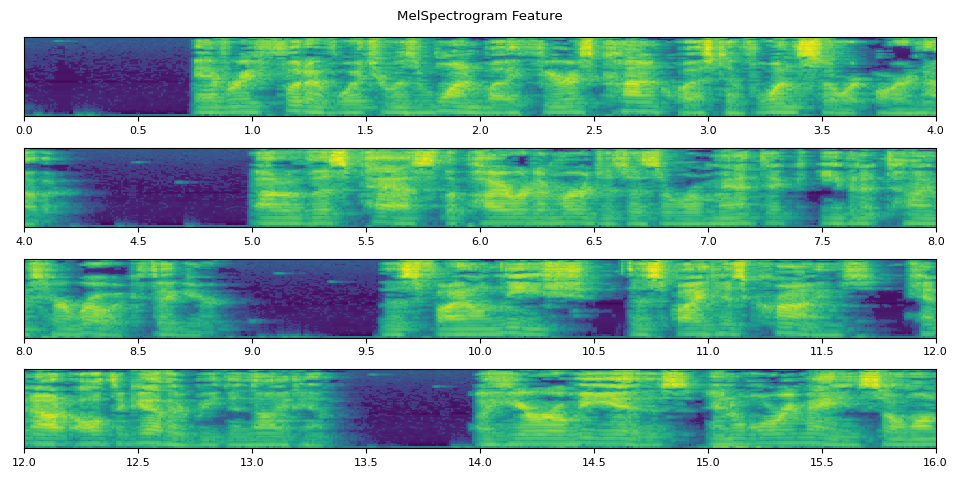

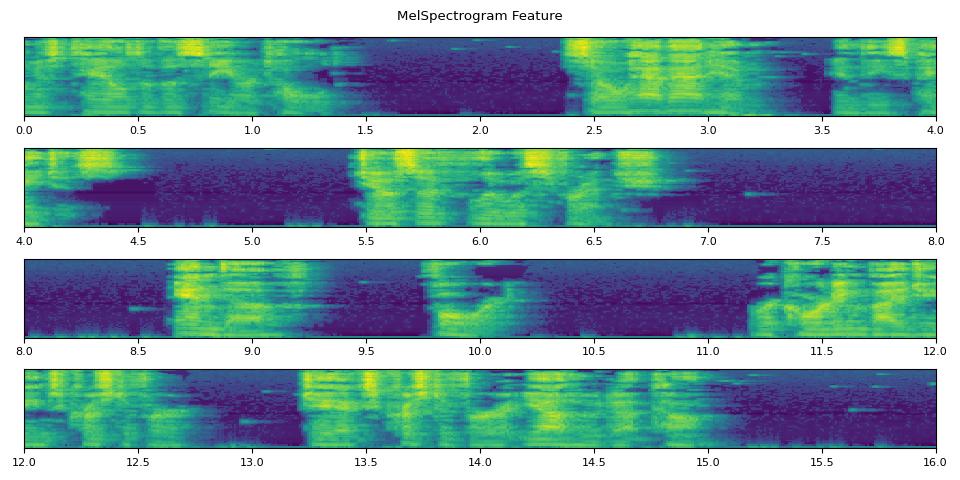

def _plot(feats, num_iter, unit=25):

unit_dur = segment_length / sample_rate * unit

num_plots = num_iter // unit + (1 if num_iter % unit else 0)

fig, axes = plt.subplots(num_plots, 1)

t0 = 0

for i, ax in enumerate(axes):

feats_ = feats[i * unit : (i + 1) * unit]

t1 = t0 + segment_length / sample_rate * len(feats_)

feats_ = torch.cat([f[2:-2] for f in feats_]) # remove boundary effect and overlap

ax.imshow(feats_.T, extent=[t0, t1, 0, 1], aspect="auto", origin="lower")

ax.tick_params(which="both", left=False, labelleft=False)

ax.set_xlim(t0, t0 + unit_dur)

t0 = t1

fig.suptitle("MelSpectrogram Feature")

plt.tight_layout()

@torch.inference_mode()

def run_inference(num_iter=100):

global state, hypothesis

chunks = []

feats = []

for i, (chunk,) in enumerate(stream_iterator, start=1):

segment = cacher(chunk[:, 0])

features, length = feature_extractor(segment)

hypos, state = decoder.infer(features, length, 10, state=state, hypothesis=hypothesis)

hypothesis = hypos

transcript = token_processor(hypos[0][0], lstrip=False)

print(transcript, end="\r", flush=True)

chunks.append(chunk)

feats.append(features)

if i == num_iter:

break

# Plot the features

_plot(feats, num_iter)

return IPython.display.Audio(torch.cat(chunks).T.numpy(), rate=bundle.sample_rate)

run_inference()

forward

forward

forward

forward

forward

forward great

forward great pir

forward great pirate

forward great pirate

forward great pirate stories

forward great pirate stories

forward great pirate stories

forward great pirate stories

forward great pirate stories

forward great pirate stories

forward great pirate stories

forward great pirate stories this

forward great pirate stories this is

forward great pirate stories this is a

forward great pirate stories this is a liber

forward great pirate stories this is a liberal

forward great pirate stories this is a liberal

forward great pirate stories this is a liberal record

forward great pirate stories this is a liberal record

forward great pirate stories this is a liberal recording

forward great pirate stories this is a liberal recording

forward great pirate stories this is a liberal recording all

forward great pirate stories this is a liberal recording all the

forward great pirate stories this is a liberal recording all the

forward great pirate stories this is a liberal recording all the votes

forward great pirate stories this is a liberal recording all the votes record

forward great pirate stories this is a liberal recording all the votes record

forward great pirate stories this is a liberal recording all the votes recordings

forward great pirate stories this is a liberal recording all the votes recordings are

forward great pirate stories this is a liberal recording all the votes recordings are in the

forward great pirate stories this is a liberal recording all the votes recordings are in the public

forward great pirate stories this is a liberal recording all the votes recordings are in the public

forward great pirate stories this is a liberal recording all the votes recordings are in the public dum

forward great pirate stories this is a liberal recording all the votes recordings are in the public dum

forward great pirate stories this is a liberal recording all the votes recordings are in the public duma

forward great pirate stories this is a liberal recording all the votes recordings are in the public duma

forward great pirate stories this is a liberal recording all the votes recordings are in the public duma

forward great pirate stories this is a liberal recording all the votes recordings are in the public duma for more

forward great pirate stories this is a liberal recording all the votes recordings are in the public duma for more

forward great pirate stories this is a liberal recording all the votes recordings are in the public duma for more information

forward great pirate stories this is a liberal recording all the votes recordings are in the public duma for more information

forward great pirate stories this is a liberal recording all the votes recordings are in the public duma for more information

forward great pirate stories this is a liberal recording all the votes recordings are in the public duma for more information or

forward great pirate stories this is a liberal recording all the votes recordings are in the public duma for more information or develop

forward great pirate stories this is a liberal recording all the votes recordings are in the public duma for more information or to volunt

forward great pirate stories this is a liberal recording all the votes recordings are in the public duma for more information or to volunteer

forward great pirate stories this is a liberal recording all the votes recordings are in the public duma for more information or to volunteer

forward great pirate stories this is a liberal recording all the votes recordings are in the public duma for more information or to volunteer

forward great pirate stories this is a liberal recording all the votes recordings are in the public duma for more information or to volunteer please

forward great pirate stories this is a liberal recording all the votes recordings are in the public duma for more information or to volunteer please

forward great pirate stories this is a liberal recording all the votes recordings are in the public duma for more information or to volunteer please visit

forward great pirate stories this is a liberal recording all the votes recordings are in the public duma for more information or to volunteer please visit

forward great pirate stories this is a liberal recording all the votes recordings are in the public duma for more information or to volunteer please visit liber

forward great pirate stories this is a liberal recording all the votes recordings are in the public duma for more information or to volunteer please visit liber

forward great pirate stories this is a liberal recording all the votes recordings are in the public duma for more information or to volunteer please visit liberal

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work record

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christ

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pir

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories

run_inference()

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by jose

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lew

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french for

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french for

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard pir

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard pir

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy em

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the rom

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but ine

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inev

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable com

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civil

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civil

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilis

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concer

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concer

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it

run_inference()

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is develop

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its inf

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase or

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase or another

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase or another

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase or another

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase or another of

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase or another of pir

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase or another of pir

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase or another of piracy

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase or another of piracy

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase or another of piracy

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase or another of piracy

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase or another of piracy

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase or another of piracy

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase or another of piracy if

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase or another of piracy if

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase or another of piracy if men

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase or another of piracy if men

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase or another of piracy if men were

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase or another of piracy if men were sav

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase or another of piracy if men were savages

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase or another of piracy if men were savages

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase or another of piracy if men were savages on

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase or another of piracy if men were savages on land

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase or another of piracy if men were savages on land

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase or another of piracy if men were savages on land

forward great pirate stories this is a liberal recording all the votes recordings are in the public dummy for more information or to volunteer please visit liberal stout work recording by james christopher great pirate stories by various edited by joseph lewis french forard piracy embodies the romance of the sea in its highest expression it is a sad but inevitable commentary on our civilisation that so far as the sea is concerned it is developed from its infancy down to a century or so ago under one phase or another of piracy if men were savages on land