
ASR Inference with CTC Decoder

Author: Caroline Chen

This tutorial shows how to perform speech recognition inference using a CTC beam search decoder with lexicon constraint and KenLM language model support. We demonstrate this on a pretrained wav2vec 2.0 model trained using CTC loss.

Overview

Beam search decoding works by iteratively expanding text hypotheses (beams) with next possible characters, and maintaining only the hypotheses with the highest scores at each time step. A language model can be incorporated into the scoring computation, and adding a lexicon constraint restricts the next possible tokens for the hypotheses so that only words from the lexicon can be generated.
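The sketch below illustrates the idea only. It is a textbook CTC prefix beam search in plain Python, with no language model or lexicon. It is not the Flashlight decoder that torchaudio ports, and the function name ctc_prefix_beam_search and the toy probabilities are hypothetical. Each prefix tracks two log-probabilities, one for paths ending in blank and one for paths ending in a non-blank token. Tracking both is what allows repeated characters to be merged correctly.

```python
import math
from collections import defaultdict
from typing import Dict, Sequence, Tuple

NEG_INF = float("-inf")


def logsumexp(*xs: float) -> float:
    """Numerically stable log(sum(exp(x))) that tolerates -inf inputs."""
    m = max(xs)
    if m == NEG_INF:
        return NEG_INF
    return m + math.log(sum(math.exp(x - m) for x in xs))


def ctc_prefix_beam_search(
    log_probs: Sequence[Sequence[float]], beam_size: int, blank: int = 0
) -> Tuple[Tuple[int, ...], float]:
    """Return the best collapsed token sequence and its total log-probability."""
    # Each prefix keeps (log P of paths ending in blank, log P of paths ending in non-blank).
    beams: Dict[Tuple[int, ...], Tuple[float, float]] = {(): (0.0, NEG_INF)}
    for frame in log_probs:
        nxt: Dict[Tuple[int, ...], Tuple[float, float]] = defaultdict(lambda: (NEG_INF, NEG_INF))
        for prefix, (p_b, p_nb) in beams.items():
            for tok, lp in enumerate(frame):
                if tok == blank:
                    # A blank leaves the prefix unchanged; the path now ends in blank.
                    b, nb = nxt[prefix]
                    nxt[prefix] = (logsumexp(b, p_b + lp, p_nb + lp), nb)
                    continue
                extended = prefix + (tok,)
                b, nb = nxt[extended]
                if prefix and prefix[-1] == tok:
                    # A repeated token starts a new character only after a blank...
                    nxt[extended] = (b, logsumexp(nb, p_b + lp))
                    # ...otherwise it merges into the character already emitted.
                    sb, snb = nxt[prefix]
                    nxt[prefix] = (sb, logsumexp(snb, p_nb + lp))
                else:
                    nxt[extended] = (b, logsumexp(nb, p_b + lp, p_nb + lp))
        # Keep only the beam_size most probable prefixes.
        ranked = sorted(nxt.items(), key=lambda kv: logsumexp(*kv[1]), reverse=True)
        beams = dict(ranked[:beam_size])
    best_prefix, (p_b, p_nb) = max(beams.items(), key=lambda kv: logsumexp(*kv[1]))
    return best_prefix, logsumexp(p_b, p_nb)


# Two frames over the tokens {0: blank, 1: "a"}; the probabilities are hypothetical.
toy = [[math.log(0.6), math.log(0.4)]] * 2
best, score = ctc_prefix_beam_search(toy, beam_size=2)
print(best, math.exp(score))
```

On this toy input, the single best path is all blanks (probability 0.36). Summing the three paths that collapse to token 1 gives 0.64, so the search returns (1,). With beam_size=1, the same call keeps only the empty prefix after the first frame and returns (). This shows why a wider beam can beat greedy decoding even without a language model.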

The underlying implementation is ported from Flashlight’s beam search decoder. A mathematical formula for the decoder optimization can be found in the Wav2Letter paper, and a more detailed algorithm can be found in this blog.

Running ASR inference using a CTC beam search decoder with a language model and lexicon constraint requires the following components:

Acoustic Model: model predicting phonetics from audio waveforms

Tokens: the possible predicted tokens from the acoustic model

Lexicon: mapping between possible words and their corresponding token sequences

Language Model (LM): n-gram language model trained with the KenLM library, or a custom language model that inherits from CTCDecoderLM

Acoustic Model and Setup

First, we import the necessary utilities and fetch the data that we are working with.

import torch
import torchaudio

print(torch.__version__)
print(torchaudio.__version__)

2.0.0

2.0.1

import time
from typing import List

import IPython
import matplotlib.pyplot as plt
from torchaudio.models.decoder import ctc_decoder
from torchaudio.utils import download_asset

We use the pretrained Wav2Vec 2.0 Base model that is finetuned on 10 min of the LibriSpeech dataset, which can be loaded in using torchaudio.pipelines.WAV2VEC2_ASR_BASE_10M. For more detail on running Wav2Vec 2.0 speech recognition pipelines in torchaudio, please refer to this tutorial.

bundle = torchaudio.pipelines.WAV2VEC2_ASR_BASE_10M
acoustic_model = bundle.get_model()

Downloading: "https://download.pytorch.org/torchaudio/models/wav2vec2_fairseq_base_ls960_asr_ll10m.pth" to /root/.cache/torch/hub/checkpoints/wav2vec2_fairseq_base_ls960_asr_ll10m.pth


We will load a sample from the LibriSpeech test-other dataset.

speech_file = download_asset("tutorial-assets/ctc-decoding/1688-142285-0007.wav")
IPython.display.Audio(speech_file)


The transcript corresponding to this audio file is:

i really was very much afraid of showing him how much shocked i was at some parts of what he said

waveform, sample_rate = torchaudio.load(speech_file)

if sample_rate != bundle.sample_rate:
    waveform = torchaudio.functional.resample(waveform, sample_rate, bundle.sample_rate)

Files and Data for Decoder

Next, we load in our token, lexicon, and language model data, which are used by the decoder to predict words from the acoustic model output. Pretrained files for the LibriSpeech dataset can be downloaded through torchaudio, or the user can provide their own files.

Tokens

The tokens are the possible symbols that the acoustic model can predict, including the blank and silent symbols. They can be passed in either as a file with one token per line, where a token's line position gives its index, or as a list of tokens, where a token's position in the list gives its index.

# tokens.txt
_
|
e
t
...

tokens = [label.lower() for label in bundle.get_labels()]
print(tokens)

['-', '|', 'e', 't', 'a', 'o', 'n', 'i', 'h', 's', 'r', 'd', 'l', 'u', 'm', 'w', 'c', 'f', 'g', 'y', 'p', 'b', 'v', 'k', "'", 'x', 'j', 'q', 'z']
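For reference, a tokens file like the one above can be read by hand. Doing so makes explicit that a token's line position is its index. This is a minimal plain-Python sketch. The helper load_tokens and its parsing rules (strip whitespace, skip empty lines) are assumptions, not torchaudio's own loader.

```python
from typing import Dict, List, Tuple


def load_tokens(text: str) -> Tuple[List[str], Dict[str, int]]:
    """Parse tokens-file contents: one token per line, index = line position."""
    # Assumption: empty lines are ignored and surrounding whitespace is stripped.
    tokens = [line.strip() for line in text.splitlines() if line.strip()]
    index = {tok: i for i, tok in enumerate(tokens)}
    return tokens, index


tokens_list, token_index = load_tokens("-\n|\ne\nt\n")
print(tokens_list)       # ['-', '|', 'e', 't']
print(token_index["|"])  # 1, the word separator
```

The sketch uses a separate name, tokens_list, so that it does not overwrite the tokens list used by the rest of the tutorial.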

Lexicon

The lexicon is a mapping from words to their corresponding token sequences, and it is used to restrict the search space of the decoder to words from the lexicon. The expected format of the lexicon file is one word per line, with the word followed by its space-separated tokens.

# lexicon.txt
a a |
able a b l e |
about a b o u t |
...
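Lexicon-constrained decoding can be pictured as walking a prefix tree (trie) of token spellings. At each step, only tokens that continue some word in the lexicon are allowed. The sketch below parses lines in the format shown above and builds such a trie in plain Python. The helper names parse_lexicon, build_trie, and allowed_next are hypothetical. This is a simplification for illustration, not the decoder's actual internal data structure.

```python
from typing import Dict, List, Sequence, Set, Tuple

WORDS_KEY = "$words"  # marks the trie node where a complete spelling ends


def parse_lexicon(lines: Sequence[str]) -> Dict[str, List[Tuple[str, ...]]]:
    """Map each word to its token spellings (a word may have several)."""
    lexicon: Dict[str, List[Tuple[str, ...]]] = {}
    for line in lines:
        parts = line.split()
        if not parts:
            continue
        word, spelling = parts[0], tuple(parts[1:])
        lexicon.setdefault(word, []).append(spelling)
    return lexicon


def build_trie(lexicon: Dict[str, List[Tuple[str, ...]]]) -> dict:
    """Nested-dict trie keyed by token; leaves record which words end there."""
    root: dict = {}
    for word, spellings in lexicon.items():
        for spelling in spellings:
            node = root
            for tok in spelling:
                node = node.setdefault(tok, {})
            node.setdefault(WORDS_KEY, []).append(word)
    return root


def allowed_next(trie: dict, prefix: Sequence[str]) -> Set[str]:
    """Tokens that may follow `prefix` while still spelling some lexicon word."""
    node = trie
    for tok in prefix:
        if tok not in node:
            return set()
        node = node[tok]
    return {key for key in node if key != WORDS_KEY}


trie = build_trie(parse_lexicon(["a a |", "able a b l e |", "about a b o u t |"]))
print(sorted(allowed_next(trie, ["a"])))       # ['b', '|']
print(sorted(allowed_next(trie, ["a", "b"])))  # ['l', 'o']
```

A token sequence such as "a x" has no continuation in this trie, which is why a lexicon-constrained decoder cannot produce misspellings like the ones the greedy decoder makes later in this tutorial.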

Language Model

A language model can be used in decoding to improve the results: a language model score, which represents the likelihood of the word sequence, is factored into the beam search computation. Below, we outline the different forms of language models that are supported for decoding.

No Language Model

To create a decoder instance without a language model, set lm=None when initializing the decoder.

KenLM

This is an n-gram language model trained with the KenLM library. Either the .arpa or the binarized .bin LM can be used, but the binary format is recommended for faster loading.

The language model used in this tutorial is a 4-gram KenLM trained using LibriSpeech.
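The toy bigram model below gives a feel for the kind of score an n-gram model contributes. It uses add-one smoothing in plain Python. It is only an illustration: KenLM estimates its models with modified Kneser-Ney smoothing and backoff. The tiny training corpus and the helper names are hypothetical. Like KenLM, the sketch reports log10 probabilities.

```python
import math
from collections import Counter
from typing import Iterable, Tuple

BOS, EOS = "<s>", "</s>"


def train_bigram(sentences: Iterable[str]) -> Tuple[Counter, Counter, int]:
    """Count context unigrams and bigrams; returns (unigrams, bigrams, vocab_size)."""
    unigrams, bigrams, vocab = Counter(), Counter(), {EOS}
    for sentence in sentences:
        words = [BOS] + sentence.split() + [EOS]
        vocab.update(words[1:-1])
        unigrams.update(words[:-1])  # every word that serves as a context
        bigrams.update(zip(words[:-1], words[1:]))
    return unigrams, bigrams, len(vocab)


def log10_prob(sentence: str, unigrams: Counter, bigrams: Counter, vocab_size: int, alpha: float = 1.0) -> float:
    """Add-alpha smoothed bigram log10 probability of a whole sentence."""
    words = [BOS] + sentence.split() + [EOS]
    total = 0.0
    for prev, cur in zip(words[:-1], words[1:]):
        p = (bigrams[(prev, cur)] + alpha) / (unigrams[prev] + alpha * vocab_size)
        total += math.log10(p)
    return total


corpus = ["he said it", "what he said", "he said what he said"]  # hypothetical training text
uni, bi, v = train_bigram(corpus)
print(log10_prob("what he said", uni, bi, v))
print(log10_prob("said he what", uni, bi, v))  # same words in an unlikely order
```

The second sentence receives a much lower score. That kind of preference is what the beam search decoder mixes into each hypothesis score.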

Custom Language Model

Users can define their own custom language model in Python, whether it be a statistical or neural network language model, using CTCDecoderLM and CTCDecoderLMState.

For instance, the following code creates a basic wrapper around a PyTorch torch.nn.Module language model.

from torchaudio.models.decoder import CTCDecoderLM, CTCDecoderLMState


class CustomLM(CTCDecoderLM):
    """Create a Python wrapper around `language_model` to feed to the decoder."""

    def __init__(self, language_model: torch.nn.Module):
        CTCDecoderLM.__init__(self)
        self.language_model = language_model
        self.sil = -1  # index for silent token in the language model
        self.states = {}  # cache: LM state -> score

        language_model.eval()

    def start(self, start_with_nothing: bool = False):
        # Create the initial state and score it as if preceded by silence
        state = CTCDecoderLMState()
        with torch.no_grad():
            score = self.language_model(self.sil)

        self.states[state] = score
        return state

    def score(self, state: CTCDecoderLMState, token_index: int):
        # Extend the state with the new token; run the model only on a cache miss
        outstate = state.child(token_index)
        if outstate not in self.states:
            with torch.no_grad():
                score = self.language_model(token_index)
            self.states[outstate] = score
        score = self.states[outstate]

        return outstate, score

    def finish(self, state: CTCDecoderLMState):
        # Score the end of the sentence by appending silence
        return self.score(state, self.sil)

Downloading Pretrained Files

Pretrained files for the LibriSpeech dataset can be downloaded using download_pretrained_files().

Note: this cell may take a couple of minutes to run, as the language model can be large.

from torchaudio.models.decoder import download_pretrained_files

files = download_pretrained_files("librispeech-4-gram")

print(files)


PretrainedFiles(lexicon='/root/.cache/torch/hub/torchaudio/decoder-assets/librispeech-4-gram/lexicon.txt', tokens='/root/.cache/torch/hub/torchaudio/decoder-assets/librispeech-4-gram/tokens.txt', lm='/root/.cache/torch/hub/torchaudio/decoder-assets/librispeech-4-gram/lm.bin')

Construct Decoders

In this tutorial, we construct both a beam search decoder and a greedy decoder for comparison.

Beam Search Decoder

The decoder can be constructed using the factory function ctc_decoder(). In addition to the previously mentioned components, it also takes in various beam search decoding parameters and token/word parameters.

This decoder can also be run without a language model by passing None as the lm parameter.

LM_WEIGHT = 3.23
WORD_SCORE = -0.26

beam_search_decoder = ctc_decoder(
    lexicon=files.lexicon,
    tokens=files.tokens,
    lm=files.lm,
    nbest=3,
    beam_size=1500,
    lm_weight=LM_WEIGHT,
    word_score=WORD_SCORE,
)

Greedy Decoder

class GreedyCTCDecoder(torch.nn.Module):
    def __init__(self, labels, blank=0):
        super().__init__()
        self.labels = labels
        self.blank = blank

    def forward(self, emission: torch.Tensor) -> List[str]:
        """Given a sequence emission over labels, get the best path

        Args:
          emission (Tensor): Logit tensors. Shape `[num_seq, num_label]`.

        Returns:
          List[str]: The resulting transcript, as a list of words
        """
        indices = torch.argmax(emission, dim=-1)  # [num_seq,]
        indices = torch.unique_consecutive(indices, dim=-1)
        indices = [i for i in indices if i != self.blank]
        joined = "".join([self.labels[i] for i in indices])
        return joined.replace("|", " ").strip().split()


greedy_decoder = GreedyCTCDecoder(tokens)
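The three steps in GreedyCTCDecoder can be traced on a toy input without PyTorch: argmax per frame, collapse consecutive repeats, then drop blanks. The plain-Python function greedy_ctc below is a hypothetical restatement for illustration. Note how a blank between two identical labels keeps both of them.

```python
from typing import List, Sequence


def greedy_ctc(frame_scores: Sequence[Sequence[float]], labels: Sequence[str], blank: int = 0) -> List[str]:
    # 1. Best label index per frame.
    best = [max(range(len(frame)), key=frame.__getitem__) for frame in frame_scores]
    # 2. Collapse consecutive repeats.
    collapsed = [idx for i, idx in enumerate(best) if i == 0 or idx != best[i - 1]]
    # 3. Drop blanks, map indices to characters, and split words on "|".
    text = "".join(labels[idx] for idx in collapsed if idx != blank)
    return text.replace("|", " ").split()


def one_hot(idx: int, size: int = 4) -> List[float]:
    return [1.0 if i == idx else 0.0 for i in range(size)]


toy_labels = ["-", "|", "a", "b"]
frames = [one_hot(i) for i in [2, 2, 0, 2, 1, 3]]  # frame-wise: a a - a | b
print(greedy_ctc(frames, toy_labels))  # ['aa', 'b']
```

The first two "a" frames merge into one character. The blank then allows a second "a" to be emitted, which gives "aa" rather than "a".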

Run Inference

Now that we have the data, acoustic model, and decoder, we can perform inference. The beam search decoder returns, for each utterance in the batch, a list of hypotheses of type CTCHypothesis. This is why results are indexed as result[0][0] below. Each hypothesis consists of the predicted token IDs, the corresponding words (if a lexicon is provided), the hypothesis score, and the timesteps corresponding to the token IDs. Recall that the transcript corresponding to the waveform is:

actual_transcript = "i really was very much afraid of showing him how much shocked i was at some parts of what he said"
actual_transcript = actual_transcript.split()

emission, _ = acoustic_model(waveform)

The greedy decoder gives the following result.

greedy_result = greedy_decoder(emission[0])
greedy_transcript = " ".join(greedy_result)
greedy_wer = torchaudio.functional.edit_distance(actual_transcript, greedy_result) / len(actual_transcript)

print(f"Transcript: {greedy_transcript}")
print(f"WER: {greedy_wer}")

Transcript: i reily was very much affrayd of showing him howmuch shoktd i wause at some parte of what he seid

WER: 0.38095238095238093

Using the beam search decoder:

beam_search_result = beam_search_decoder(emission)
beam_search_transcript = " ".join(beam_search_result[0][0].words).strip()
beam_search_wer = torchaudio.functional.edit_distance(actual_transcript, beam_search_result[0][0].words) / len(
    actual_transcript
)

print(f"Transcript: {beam_search_transcript}")
print(f"WER: {beam_search_wer}")

Transcript: i really was very much afraid of showing him how much shocked i was at some part of what he said

WER: 0.047619047619047616
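The WER values above are the word-level edit distance divided by the number of reference words (21 here). A plain-Python Levenshtein distance reproduces the counts behind them: 8 edits for greedy decoding and 1 for beam search. It is a hypothetical stand-in for torchaudio.functional.edit_distance, written for illustration.

```python
from typing import Sequence


def edit_distance(ref: Sequence[str], hyp: Sequence[str]) -> int:
    """Levenshtein distance over word sequences (unit-cost insert, delete, substitute)."""
    prev = list(range(len(hyp) + 1))  # distances from an empty reference prefix
    for i, r in enumerate(ref, start=1):
        cur = [i] + [0] * len(hyp)
        for j, h in enumerate(hyp, start=1):
            cur[j] = min(
                prev[j] + 1,             # delete r
                cur[j - 1] + 1,          # insert h
                prev[j - 1] + (r != h),  # substitute, or match at no cost
            )
        prev = cur
    return prev[-1]


def wer(reference: str, hypothesis: str) -> float:
    ref = reference.split()
    return edit_distance(ref, hypothesis.split()) / len(ref)


reference = "i really was very much afraid of showing him how much shocked i was at some parts of what he said"
greedy = "i reily was very much affrayd of showing him howmuch shoktd i wause at some parte of what he seid"
beam = "i really was very much afraid of showing him how much shocked i was at some part of what he said"
print(edit_distance(reference.split(), greedy.split()), wer(reference, greedy))
print(edit_distance(reference.split(), beam.split()), wer(reference, beam))
```

The merged "howmuch" counts as two edits, a substitution plus a deletion. This is why the greedy output has 8 errors even though only 7 of its words are misspelled.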

Note: The words field of the output hypotheses will be empty if no lexicon is provided to the decoder. To retrieve a transcript with lexicon-free decoding, you can retrieve the token indices, convert them to the original tokens, and then join them together:

tokens_str = "".join(beam_search_decoder.idxs_to_tokens(beam_search_result[0][0].tokens))
transcript = " ".join(tokens_str.split("|"))
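Predicted token sequences start and end with the word separator. The output in the Timestep Alignments section, for example, begins with '|' and ends with '|', '|'. Because of this, the split-and-join above leaves stray spaces at the edges. A minimal variant that treats the separator as whitespace avoids this. The helper name tokens_to_transcript and the toy token list are hypothetical.

```python
from typing import Sequence


def tokens_to_transcript(tokens: Sequence[str], sep: str = "|") -> str:
    # Turn separators into spaces, then let str.split() drop empty words.
    return " ".join("".join(tokens).replace(sep, " ").split())


toy_tokens = ["|", "i", "|", "w", "a", "s", "|", "|"]  # hypothetical decoder output
naive = " ".join("".join(toy_tokens).split("|"))
print(repr(naive))                             # ' i was  '
print(repr(tokens_to_transcript(toy_tokens)))  # 'i was'
```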

We see that the lexicon-constrained beam search decoder produces a more accurate transcript consisting of real words (WER 0.048 versus 0.381), while the greedy decoder can predict incorrectly spelled words like “affrayd” and “shoktd”.

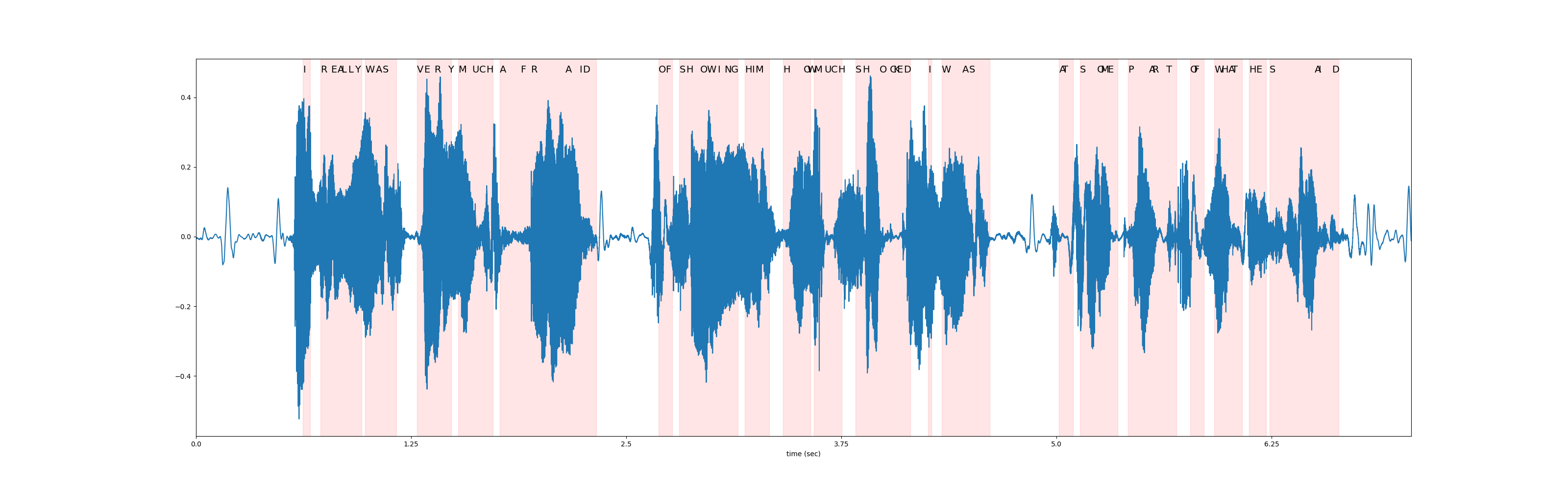

Timestep Alignments

Recall that one of the components of each resulting hypothesis is the timesteps corresponding to the token IDs.

timesteps = beam_search_result[0][0].timesteps
predicted_tokens = beam_search_decoder.idxs_to_tokens(beam_search_result[0][0].tokens)

print(predicted_tokens, len(predicted_tokens))
print(timesteps, timesteps.shape[0])

['|', 'i', '|', 'r', 'e', 'a', 'l', 'l', 'y', '|', 'w', 'a', 's', '|', 'v', 'e', 'r', 'y', '|', 'm', 'u', 'c', 'h', '|', 'a', 'f', 'r', 'a', 'i', 'd', '|', 'o', 'f', '|', 's', 'h', 'o', 'w', 'i', 'n', 'g', '|', 'h', 'i', 'm', '|', 'h', 'o', 'w', '|', 'm', 'u', 'c', 'h', '|', 's', 'h', 'o', 'c', 'k', 'e', 'd', '|', 'i', '|', 'w', 'a', 's', '|', 'a', 't', '|', 's', 'o', 'm', 'e', '|', 'p', 'a', 'r', 't', '|', 'o', 'f', '|', 'w', 'h', 'a', 't', '|', 'h', 'e', '|', 's', 'a', 'i', 'd', '|', '|'] 99

tensor([ 0, 31, 33, 36, 39, 41, 42, 44, 46, 48, 49, 52, 54, 58,
 64, 66, 69, 73, 74, 76, 80, 82, 84, 86, 88, 94, 97, 107,
 111, 112, 116, 134, 136, 138, 140, 142, 146, 148, 151, 153, 155, 157,
 159, 161, 162, 166, 170, 176, 177, 178, 179, 182, 184, 186, 187, 191,
 193, 198, 201, 202, 203, 205, 207, 212, 213, 216, 222, 224, 230, 250,
 251, 254, 256, 261, 262, 264, 267, 270, 276, 277, 281, 284, 288, 289,
 292, 295, 297, 299, 300, 303, 305, 307, 310, 311, 324, 325, 329, 331,
 353], dtype=torch.int32) 99

Below, we visualize the token timestep alignments relative to the original waveform.

def plot_alignments(waveform, emission, tokens, timesteps):
    fig, ax = plt.subplots(figsize=(32, 10))

    ax.plot(waveform)

    ratio = waveform.shape[0] / emission.shape[1]
    word_start = 0

    for i in range(len(tokens)):
        if i != 0 and tokens[i - 1] == "|":
            word_start = timesteps[i]
        if tokens[i] != "|":
            plt.annotate(tokens[i].upper(), (timesteps[i] * ratio, waveform.max() * 1.02), size=14)
        elif i != 0:
            word_end = timesteps[i]
            ax.axvspan(word_start * ratio, word_end * ratio, alpha=0.1, color="red")

    xticks = ax.get_xticks()
    plt.xticks(xticks, xticks / bundle.sample_rate)
    ax.set_xlabel("time (sec)")
    ax.set_xlim(0, waveform.shape[0])


plot_alignments(waveform[0], emission, predicted_tokens, timesteps)
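The shaded word spans in the plot can also be extracted as numbers. The sketch below groups characters between '|' separators using the same convention as plot_alignments: a word starts at its first character's timestep and ends at the timestep of the next '|'. It then converts frames to seconds. The helper name word_segments is hypothetical. In the tutorial's setting, seconds per frame would presumably be waveform.shape[1] / emission.shape[1] / bundle.sample_rate, and timesteps.tolist() would give plain integers. The 0.02 s/frame used here is only an illustrative value, roughly the 20 ms frame rate of wav2vec 2.0 at 16 kHz.

```python
from typing import List, Sequence, Tuple


def word_segments(
    tokens: Sequence[str], timesteps: Sequence[int], seconds_per_frame: float, sep: str = "|"
) -> List[Tuple[str, float, float]]:
    """Group characters into (word, start_sec, end_sec); a word ends at the next separator."""
    segments = []
    word, start = "", None
    for tok, t in zip(tokens, timesteps):
        if tok == sep:
            if word:  # skip empty words caused by leading or repeated separators
                segments.append((word, start * seconds_per_frame, t * seconds_per_frame))
            word, start = "", None
        else:
            if start is None:
                start = t
            word += tok
    return segments  # a trailing word without a closing separator is dropped


# First ten tokens and timesteps from the output above.
toy_tokens = ["|", "i", "|", "r", "e", "a", "l", "l", "y", "|"]
toy_steps = [0, 31, 33, 36, 39, 41, 42, 44, 46, 48]
for word, begin, end in word_segments(toy_tokens, toy_steps, 0.02):
    print(f"{word}: {begin:.2f}s - {end:.2f}s")
```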

Beam Search Decoder Parameters

In this section, we go into more depth on some of the parameters and their tradeoffs. For the full list of customizable parameters, please refer to the documentation.

Helper Function

def print_decoded(decoder, emission, param, param_value):
    start_time = time.monotonic()
    result = decoder(emission)
    decode_time = time.monotonic() - start_time

    transcript = " ".join(result[0][0].words).lower().strip()
    score = result[0][0].score
    print(f"{param} {param_value:<3}: {transcript} (score: {score:.2f}; {decode_time:.4f} secs)")

nbest

This parameter indicates the number of best hypotheses to return, something the greedy decoder cannot provide. For instance, because we set nbest=3 when constructing the beam search decoder earlier, we can now access the hypotheses with the top 3 scores.

for i in range(3):
    transcript = " ".join(beam_search_result[0][i].words).strip()
    score = beam_search_result[0][i].score
    print(f"{transcript} (score: {score})")

i really was very much afraid of showing him how much shocked i was at some part of what he said (score: 3699.8241478490795)

i really was very much afraid of showing him how much shocked i was at some parts of what he said (score: 3697.8584095108477)

i reply was very much afraid of showing him how much shocked i was at some part of what he said (score: 3695.01579982042)

beam size

The beam_size parameter determines the maximum number of best hypotheses to hold after each decoding step. Using larger beam sizes allows for exploring a larger range of possible hypotheses, which can produce hypotheses with higher scores, but it is computationally more expensive and does not provide additional gains beyond a certain point.

In the example below, we see improvement in decoding quality as we increase the beam size from 1 to 5 to 50, but notice how a beam size of 500 produces the same output as a beam size of 50 while increasing the computation time.

beam_sizes = [1, 5, 50, 500]

for beam_size in beam_sizes:
    beam_search_decoder = ctc_decoder(
        lexicon=files.lexicon,
        tokens=files.tokens,
        lm=files.lm,
        beam_size=beam_size,
        lm_weight=LM_WEIGHT,
        word_score=WORD_SCORE,
    )

    print_decoded(beam_search_decoder, emission, "beam size", beam_size)

beam size 1 : i you ery much afra of shongut shot i was at some arte what he sad (score: 3144.93; 0.1499 secs)

beam size 5 : i rely was very much afraid of showing him how much shot i was at some parts of what he said (score: 3688.02; 0.0639 secs)

beam size 50 : i really was very much afraid of showing him how much shocked i was at some part of what he said (score: 3699.82; 0.2851 secs)

beam size 500: i really was very much afraid of showing him how much shocked i was at some part of what he said (score: 3699.82; 0.6475 secs)

beam size token

The beam_size_token parameter corresponds to the number of tokens to consider for expanding each hypothesis at the decoding step. Exploring a larger number of next possible tokens increases the range of potential hypotheses at the cost of computation.
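The following plain-Python sketch shows what beam_size_token limits. At each frame, only the highest-scoring tokens are used to extend a hypothesis, so a step produces at most (number of hypotheses) times beam_size_token candidates. The helper names candidate_tokens and expand are hypothetical. The expansion skips CTC merging and the language model, so it illustrates only the token cap.

```python
import heapq
import math
from typing import List, Sequence, Tuple

Hypothesis = Tuple[Tuple[int, ...], float]  # (token prefix, score); hypothetical representation


def candidate_tokens(frame_log_probs: Sequence[float], beam_size_token: int) -> List[int]:
    """Indices of the beam_size_token best tokens in this frame, best first."""
    return heapq.nlargest(beam_size_token, range(len(frame_log_probs)), key=frame_log_probs.__getitem__)


def expand(hypotheses: List[Hypothesis], frame_log_probs: Sequence[float], beam_size_token: int) -> List[Hypothesis]:
    """Extend every hypothesis with each candidate token (no CTC merging, no LM)."""
    candidates = candidate_tokens(frame_log_probs, beam_size_token)
    return [(prefix + (tok,), score + frame_log_probs[tok]) for prefix, score in hypotheses for tok in candidates]


frame = [math.log(p) for p in (0.1, 0.6, 0.3)]
hyps = [((), 0.0), ((2,), -1.0)]
print(candidate_tokens(frame, 2))   # [1, 2]
print(len(expand(hyps, frame, 2)))  # 4 candidates instead of 6
```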

num_tokens = len(tokens)
beam_size_tokens = [1, 5, 10, num_tokens]

for beam_size_token in beam_size_tokens:
    beam_search_decoder = ctc_decoder(
        lexicon=files.lexicon,
        tokens=files.tokens,
        lm=files.lm,
        beam_size_token=beam_size_token,
        lm_weight=LM_WEIGHT,
        word_score=WORD_SCORE,
    )

    print_decoded(beam_search_decoder, emission, "beam size token", beam_size_token)

beam size token 1 : i rely was very much affray of showing him hoch shot i was at some part of what he sed (score: 3584.80; 0.1968 secs)

beam size token 5 : i rely was very much afraid of showing him how much shocked i was at some part of what he said (score: 3694.83; 0.1798 secs)

beam size token 10 : i really was very much afraid of showing him how much shocked i was at some part of what he said (score: 3696.25; 0.2249 secs)

beam size token 29 : i really was very much afraid of showing him how much shocked i was at some part of what he said (score: 3699.82; 0.2533 secs)

beam threshold

The beam_threshold parameter is used to prune the stored set of hypotheses at each decoding step, removing hypotheses whose scores are more than beam_threshold below the highest-scoring hypothesis. There is a balance between choosing smaller thresholds to prune more hypotheses and reduce the search space, and choosing a threshold large enough that plausible hypotheses are not pruned.
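The two pruning knobs, beam_size and beam_threshold, can be pictured with a small plain-Python helper. This is a simplified sketch under assumed semantics: higher scores are better, and the threshold is measured down from the best score. It is not the decoder's internal code, and the helper name prune and the toy scores are hypothetical.

```python
import heapq
from typing import List, Tuple

Hypothesis = Tuple[str, float]  # (text, score); hypothetical representation


def prune(hypotheses: List[Hypothesis], beam_size: int, beam_threshold: float) -> List[Hypothesis]:
    if not hypotheses:
        return []
    best = max(score for _, score in hypotheses)
    # beam_threshold: drop anything more than beam_threshold below the best score.
    survivors = [h for h in hypotheses if h[1] >= best - beam_threshold]
    # beam_size: keep at most beam_size of the remaining hypotheses, best first.
    return heapq.nlargest(beam_size, survivors, key=lambda h: h[1])


hyps = [("a", 10.0), ("b", 9.5), ("c", 4.0), ("d", 8.0)]
print(prune(hyps, beam_size=3, beam_threshold=5))  # [('a', 10.0), ('b', 9.5), ('d', 8.0)]
print(prune(hyps, beam_size=3, beam_threshold=1))  # [('a', 10.0), ('b', 9.5)]
```

With a threshold of 1, hypothesis "d" is discarded even though the beam has room for it. This mirrors how a very small beam_threshold in the runs below yields a poor transcript quickly.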

beam_thresholds = [1, 5, 10, 25]

for beam_threshold in beam_thresholds:
    beam_search_decoder = ctc_decoder(
        lexicon=files.lexicon,
        tokens=files.tokens,
        lm=files.lm,
        beam_threshold=beam_threshold,
        lm_weight=LM_WEIGHT,
        word_score=WORD_SCORE,
    )

    print_decoded(beam_search_decoder, emission, "beam threshold", beam_threshold)

beam threshold 1 : i ila ery much afraid of shongut shot i was at some parts of what he said (score: 3316.20; 0.0563 secs)

beam threshold 5 : i rely was very much afraid of showing him how much shot i was at some parts of what he said (score: 3682.23; 0.0635 secs)

beam threshold 10 : i really was very much afraid of showing him how much shocked i was at some part of what he said (score: 3699.82; 0.2344 secs)

beam threshold 25 : i really was very much afraid of showing him how much shocked i was at some part of what he said (score: 3699.82; 0.2361 secs)

language model weight

The lm_weight parameter is the weight assigned to the language model score, which is accumulated with the acoustic model score to determine the overall score of a hypothesis. Larger weights encourage the model to predict next words based on the language model, while smaller weights give more weight to the acoustic model score instead.
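In simplified form, each hypothesis is ranked by a weighted sum. The sum adds the acoustic score, lm_weight times the language model score, and word_score for every emitted word. The decoder also has terms such as sil_score and unk_score, listed at the end of this section, which this sketch leaves out. The scores below are made up to show how increasing lm_weight can flip which hypothesis wins. The helper names are hypothetical, and the -0.26 default mirrors WORD_SCORE above.

```python
from typing import Dict, Tuple

# Hypothetical (acoustic log-prob, LM log-prob, number of words) for two hypotheses.
HYPOTHESES: Dict[str, Tuple[float, float, int]] = {
    "acoustically better": (-10.0, -12.0, 3),
    "linguistically better": (-11.0, -9.0, 3),
}


def combined_score(am: float, lm: float, num_words: int, lm_weight: float, word_score: float) -> float:
    """Simplified ranking score: acoustic + lm_weight * LM + word_score per word."""
    return am + lm_weight * lm + word_score * num_words


def best_hypothesis(lm_weight: float, word_score: float = -0.26) -> str:
    return max(HYPOTHESES, key=lambda name: combined_score(*HYPOTHESES[name], lm_weight, word_score))


for weight in (0.0, 3.23, 15.0):
    print(weight, best_hypothesis(weight))
```

At lm_weight 0, the acoustically stronger hypothesis wins. At 3.23, the language model term dominates and the ranking flips. The runs below show the same effect on real output, and at lm_weight 15 the transcript drifts away from the audio entirely.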

lm_weights = [0, LM_WEIGHT, 15]

for lm_weight in lm_weights:
    beam_search_decoder = ctc_decoder(
        lexicon=files.lexicon,
        tokens=files.tokens,
        lm=files.lm,
        lm_weight=lm_weight,
        word_score=WORD_SCORE,
    )

    print_decoded(beam_search_decoder, emission, "lm weight", lm_weight)

lm weight 0 : i rely was very much affraid of showing him ho much shoke i was at some parte of what he seid (score: 3834.05; 0.2888 secs)

lm weight 3.23: i really was very much afraid of showing him how much shocked i was at some part of what he said (score: 3699.82; 0.3015 secs)

lm weight 15 : was there in his was at some of what he said (score: 2918.99; 0.2994 secs)

additional parameters

Additional parameters that can be optimized include the following:

word_score: score to add when a word finishes

unk_score: unknown word appearance score to add

sil_score: silence appearance score to add

log_add: whether to use log add for lexicon Trie smearing
