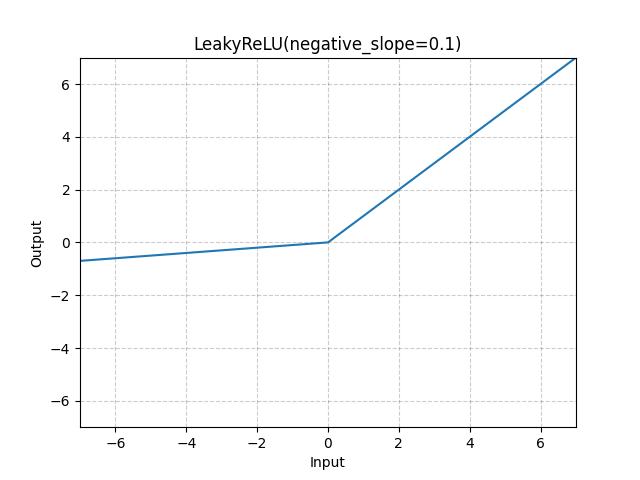

LeakyReLU

- class torch.nn.LeakyReLU(negative_slope=0.01, inplace=False)

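Applies the LeakyReLU function element-wise.

$$\text{LeakyReLU}(x) = \max(0, x) + \text{negative\_slope} \cdot \min(0, x)$$

or

$$\text{LeakyReLU}(x) = \begin{cases} x, & \text{if } x \geq 0 \\ \text{negative\_slope} \times x, & \text{otherwise} \end{cases}$$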
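As a rough illustration of the definition, the two equivalent forms can be sketched in plain Python for a single scalar. The helper names `leaky_relu_maxmin` and `leaky_relu_piecewise` are hypothetical and are not part of `torch`; they only mirror the math, not the library's actual implementation.

```python
def leaky_relu_maxmin(x: float, negative_slope: float = 0.01) -> float:
    # Hypothetical helper: max(0, x) + negative_slope * min(0, x)
    return max(0.0, x) + negative_slope * min(0.0, x)


def leaky_relu_piecewise(x: float, negative_slope: float = 0.01) -> float:
    # Hypothetical helper: x for non-negative input, scaled x otherwise
    return x if x >= 0 else negative_slope * x


# Both forms agree on positive, zero, and negative inputs.
samples = [3.0, 0.0, -2.0]
maxmin_values = [leaky_relu_maxmin(v, 0.1) for v in samples]
piecewise_values = [leaky_relu_piecewise(v, 0.1) for v in samples]
```

Positive inputs pass through unchanged, while negative inputs are multiplied by `negative_slope` instead of being clamped to zero as in plain ReLU.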

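- Parameters
  - negative_slope (float): Controls the angle of the negative slope, which is applied to negative input values. Default: 0.01
  - inplace (bool): If True, performs the operation in-place. Default: False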

- Shape:

Input: $(*)$, where $*$ means any number of additional dimensions

Output: $(*)$, same shape as the input
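The shape contract and the `inplace` flag can be checked with a short sketch like the one below. The tensor size `(4, 3, 8, 8)` is an arbitrary choice for illustration; any shape should behave the same way.

```python
import torch
from torch import nn

# Out-of-place module: returns a new tensor with the same shape as the input.
m = nn.LeakyReLU(negative_slope=0.1)
x = torch.randn(4, 3, 8, 8)  # arbitrary illustrative shape
y = m(x)
shape_preserved = y.shape == x.shape

# In-place module: overwrites its input tensor and returns it.
m_inplace = nn.LeakyReLU(negative_slope=0.1, inplace=True)
z = x.clone()  # clone so that x itself is left untouched
out = m_inplace(z)
shares_memory = out.data_ptr() == z.data_ptr()
```

Using `inplace=True` avoids allocating a second tensor, at the cost of destroying the original input values, which matters if the input is needed again later.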

Examples:

    >>> m = nn.LeakyReLU(0.1)
    >>> input = torch.randn(2)
    >>> output = m(input)