ExecuTorch Llama iOS Demo App

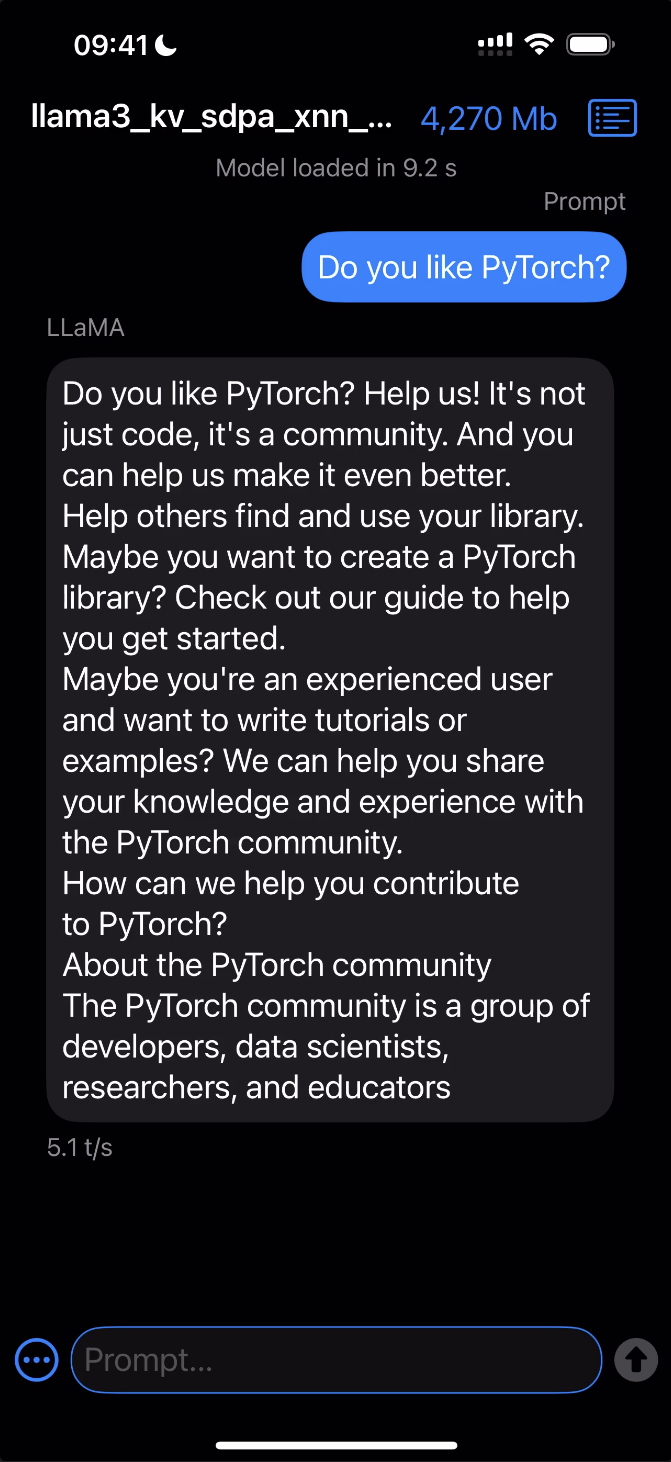

Get hands-on with running LLaMA and LLaVA models — exported via ExecuTorch — natively on your iOS device!

Click the image above to see it in action!

Requirements

Xcode 15.0 or later

CMake 3.19 or later

Download and open the macOS .dmg installer and move the CMake app to the /Applications folder.

Install CMake command line tools:

sudo /Applications/CMake.app/Contents/bin/cmake-gui --install

A development provisioning profile with the increased-memory-limit entitlement.
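The version requirements above can be checked from a terminal before opening the project. The following is a minimal sketch, not part of the demo app: the helper names `version_ge` and `check_tools` are hypothetical, and the parsing assumes the usual first-line output of `xcodebuild -version` ("Xcode 15.0") and `cmake --version` ("cmake version 3.27.1").

```shell
# Succeeds when dot-separated numeric version $1 is >= version $2.
# Missing components count as 0, so "15" equals "15.0".
version_ge() {
  _have="$1"
  _want="$2"
  while [ -n "$_have" ] || [ -n "$_want" ]; do
    _h="${_have%%.*}"   # leading component of the installed version
    _w="${_want%%.*}"   # leading component of the required version
    [ "${_h:-0}" -gt "${_w:-0}" ] && return 0
    [ "${_h:-0}" -lt "${_w:-0}" ] && return 1
    # Components are equal: drop them and compare the next pair.
    case "$_have" in *.*) _have="${_have#*.}" ;; *) _have="" ;; esac
    case "$_want" in *.*) _want="${_want#*.}" ;; *) _want="" ;; esac
  done
  return 0
}

# Prints a warning for each tool that is older than the stated minimum.
check_tools() {
  _xcode="$(xcodebuild -version | awk '/^Xcode/ {print $2}')"
  _cmake="$(cmake --version | awk 'NR == 1 {print $3}')"
  version_ge "$_xcode" 15.0 || echo "Xcode $_xcode is older than 15.0"
  version_ge "$_cmake" 3.19 || echo "CMake $_cmake is older than 3.19"
}
```

Running `check_tools` after sourcing the snippet prints nothing when both tools meet the minimums. The provisioning profile and its entitlement are not checked here.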

Models

Download pre-exported LLaMA/LLaVA models and their tokenizers from HuggingFace, or export your own using the XNNPACK or MPS backend.

Build and Run

Make sure git submodules are up-to-date:

git submodule update --init --recursive
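To confirm the update took effect, the output of `git submodule status` can be inspected: git prefixes a line with `-` for an uninitialized submodule, `+` when the checked-out commit differs from the recorded one, and `U` for merge conflicts. The sketch below filters for those prefixes. The function name `stale_submodules` is hypothetical, and the parsing assumes submodule paths contain no spaces.

```shell
# Reads `git submodule status` output on stdin and prints the path of every
# submodule that is uninitialized (-), out of sync (+), or conflicted (U).
# Exits non-zero when at least one such submodule is found.
stale_submodules() {
  awk '
    /^[-+U]/ { print $2; stale = 1 }
    END { exit stale }
  '
}
```

A typical invocation would be `git submodule status --recursive | stale_submodules`, which prints nothing and succeeds when every submodule is checked out at its recorded commit.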

Open the Xcode project:

open examples/demo-apps/apple_ios/LLaMA/LLaMA.xcodeproj

Click the Play button to launch the app in the Simulator.

To run on a device, ensure you have it set up for development and a provisioning profile with the increased-memory-limit entitlement. Update the app’s bundle identifier to match your provisioning profile with the required capability.

After successfully launching the app, copy the exported ExecuTorch model (.pte) and tokenizer (.model) files to the iLLaMA folder.

For the Simulator: Drag and drop both files onto the Simulator window and save them in the On My iPhone > iLLaMA folder.

For a Device: Open a separate Finder window, navigate to the Files tab, drag and drop both files into the iLLaMA folder, and wait for the copying to finish.
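For the Simulator, the copy can also be scripted instead of using drag and drop. This is a sketch under two assumptions that the text above does not confirm: that the On My iPhone > iLLaMA folder corresponds to the `Documents` directory of the app's data container, and that you know the bundle identifier you configured (the one in the usage line is a placeholder). The function name `copy_to_simulator` is hypothetical; `xcrun simctl get_app_container` is the standard Simulator command for locating an installed app's container.

```shell
# Copies a model and tokenizer into the booted Simulator's app container.
# Usage: copy_to_simulator <bundle-id> <model.pte> <tokenizer.model>
copy_to_simulator() {
  _bundle_id="$1"
  _model="$2"
  _tokenizer="$3"

  # Validate file extensions before touching the Simulator.
  case "$_model" in
    *.pte) ;;
    *) echo "expected a .pte model file: $_model" >&2; return 1 ;;
  esac
  case "$_tokenizer" in
    *.model) ;;
    *) echo "expected a .model tokenizer file: $_tokenizer" >&2; return 1 ;;
  esac
  if [ ! -f "$_model" ] || [ ! -f "$_tokenizer" ]; then
    echo "model or tokenizer file not found" >&2
    return 1
  fi

  # Locate the data container of the app on the currently booted Simulator.
  _container="$(xcrun simctl get_app_container booted "$_bundle_id" data)" || return 1

  # Assumption: the app exposes <container>/Documents as its iLLaMA folder.
  cp "$_model" "$_tokenizer" "$_container/Documents/"
}
```

Example call, with a placeholder bundle identifier and file names: `copy_to_simulator com.example.iLLaMA llama.pte tokenizer.model`. The app must already be installed and launched once on the booted Simulator so that its container exists.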

Follow the app’s UI guidelines to select the model and tokenizer files from the local filesystem and issue a prompt.

For more details check out the Using ExecuTorch on iOS page.