Wav2Vec2FABundle

- class torchaudio.pipelines.Wav2Vec2FABundle

Data class that bundles associated information to use pretrained Wav2Vec2Model for forced alignment.

This class provides interfaces for instantiating the pretrained model along with the information necessary to retrieve pretrained weights and additional data to be used with the model.

The torchaudio library instantiates objects of this class, each of which represents a different pretrained model. Client code should access pretrained models via these instances.

Please see below for the usage and the available values.

- Example - Forced Alignment

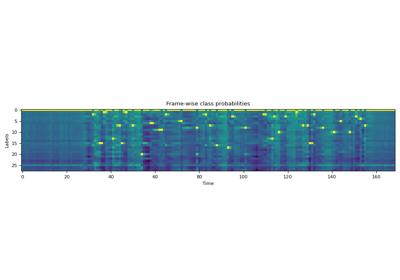

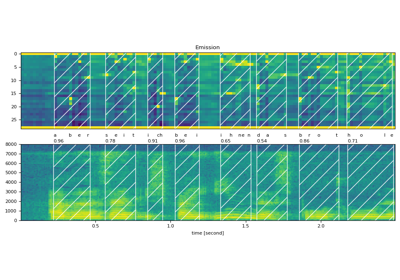

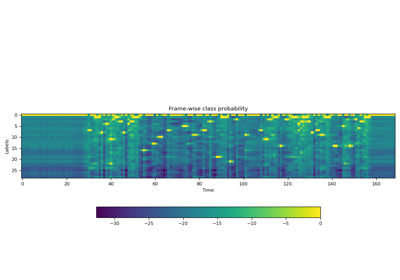

    >>> import torchaudio
    >>>
    >>> bundle = torchaudio.pipelines.MMS_FA
    >>>
    >>> # Build the model and load pretrained weight.
    >>> model = bundle.get_model()
    Downloading: 100%|███████████████████████████████| 1.18G/1.18G [00:05<00:00, 216MB/s]
    >>>
    >>> # Resample audio to the expected sampling rate
    >>> waveform = torchaudio.functional.resample(waveform, sample_rate, bundle.sample_rate)
    >>>
    >>> # Estimate the probability of token distribution
    >>> emission, _ = model(waveform)
    >>>
    >>> # Generate frame-wise alignment
    >>> alignment, scores = torchaudio.functional.forced_align(
    ...     emission, targets, input_lengths, target_lengths, blank=0)

Properties

sample_rate

Methods

get_aligner

get_dict

- Wav2Vec2FABundle.get_dict(star: Optional[str] = '*', blank: str = '-') -> Dict[str, int]

Get the mapping from token to index (in the emission feature dimension).

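- Parameters:

star (str or None, optional) – Label used for the star token, which takes the last index. Passing None omits the star token. (Default: '*')

blank (str, optional) – Label used for the blank token, which takes index 0. (Default: '-')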

- Returns:

Mapping from each token to its index in the emission feature dimension.

- Return type:

Dict[str, int]

- Example

    >>> from torchaudio.pipelines import MMS_FA as bundle
    >>> bundle.get_dict()
    {'-': 0, 'a': 1, 'i': 2, 'e': 3, 'n': 4, 'o': 5, 'u': 6, 't': 7, 's': 8, 'r': 9, 'm': 10, 'k': 11, 'l': 12, 'd': 13, 'g': 14, 'h': 15, 'y': 16, 'b': 17, 'p': 18, 'w': 19, 'c': 20, 'v': 21, 'j': 22, 'z': 23, 'f': 24, "'": 25, 'q': 26, 'x': 27, '*': 28}
    >>> bundle.get_dict(star=None)
    {'-': 0, 'a': 1, 'i': 2, 'e': 3, 'n': 4, 'o': 5, 'u': 6, 't': 7, 's': 8, 'r': 9, 'm': 10, 'k': 11, 'l': 12, 'd': 13, 'g': 14, 'h': 15, 'y': 16, 'b': 17, 'p': 18, 'w': 19, 'c': 20, 'v': 21, 'j': 22, 'z': 23, 'f': 24, "'": 25, 'q': 26, 'x': 27}
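The mapping above is what turns a transcript into the integer targets that forced alignment consumes. The following is a minimal sketch of that step, not part of the torchaudio API: `encode_transcript` is a hypothetical helper, the dictionary is copied from the example output so the snippet runs without downloading anything, and it assumes word boundaries are simply dropped because the mapping contains no space token.

```python
# Token-to-index mapping copied verbatim from the get_dict() example output.
TOKEN_TO_INDEX = {
    '-': 0, 'a': 1, 'i': 2, 'e': 3, 'n': 4, 'o': 5, 'u': 6, 't': 7,
    's': 8, 'r': 9, 'm': 10, 'k': 11, 'l': 12, 'd': 13, 'g': 14, 'h': 15,
    'y': 16, 'b': 17, 'p': 18, 'w': 19, 'c': 20, 'v': 21, 'j': 22, 'z': 23,
    'f': 24, "'": 25, 'q': 26, 'x': 27, '*': 28,
}


def encode_transcript(transcript, dictionary):
    """Hypothetical helper: map every character of every word to its index.

    Assumption: whitespace only separates words and is not encoded,
    since the mapping has no entry for it.
    """
    indices = []
    for word in transcript.lower().split():
        for char in word:
            if char not in dictionary:
                raise KeyError(f"character {char!r} is not in the dictionary")
            indices.append(dictionary[char])
    return indices


targets = encode_transcript("i had that", TOKEN_TO_INDEX)
print(targets)
```

In real use the dictionary would come from `bundle.get_dict()`, and the resulting list would typically be wrapped in a tensor before being passed as `targets`.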

get_labels

- Wav2Vec2FABundle.get_labels(star: Optional[str] = '*', blank: str = '-') -> Tuple[str, ...]

Get the labels corresponding to the feature dimension of emission.

The first label is the blank token, and it is customizable.

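- Parameters:

star (str or None, optional) – Label used for the star token, which is placed last. Passing None omits the star token. (Default: '*')

blank (str, optional) – Label used for the blank token, which is placed first. (Default: '-')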

- Returns:

For models fine-tuned on ASR, returns the tuple of strings representing the output class labels.

- Return type:

Tuple[str, …]

- Example

    >>> from torchaudio.pipelines import MMS_FA as bundle
    >>> bundle.get_labels()
    ('-', 'a', 'i', 'e', 'n', 'o', 'u', 't', 's', 'r', 'm', 'k', 'l', 'd', 'g', 'h', 'y', 'b', 'p', 'w', 'c', 'v', 'j', 'z', 'f', "'", 'q', 'x', '*')
    >>> bundle.get_labels(star=None)
    ('-', 'a', 'i', 'e', 'n', 'o', 'u', 't', 's', 'r', 'm', 'k', 'l', 'd', 'g', 'h', 'y', 'b', 'p', 'w', 'c', 'v', 'j', 'z', 'f', "'", 'q', 'x')
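Judging from the two example outputs, the position of each label in this tuple matches the index that `get_dict()` assigns to it. That relationship is inferred from the examples rather than stated in this page, so treat the sketch below as an illustration under that assumption. `decode_indices` is a hypothetical helper, and the tuple is copied from the example output so the snippet runs standalone.

```python
# Labels copied verbatim from the get_labels() example output.
LABELS = (
    '-', 'a', 'i', 'e', 'n', 'o', 'u', 't', 's', 'r', 'm', 'k', 'l', 'd',
    'g', 'h', 'y', 'b', 'p', 'w', 'c', 'v', 'j', 'z', 'f', "'", 'q', 'x', '*',
)

# Assumption: a label's position equals its index in the emission
# feature dimension, so enumerating the tuple rebuilds the mapping.
label_to_index = {label: index for index, label in enumerate(LABELS)}


def decode_indices(indices, labels):
    """Hypothetical helper: turn a sequence of class indices back into text."""
    return "".join(labels[i] for i in indices)


print(label_to_index['-'], label_to_index['*'])
print(decode_indices([15, 3, 12, 12, 5], LABELS))
```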

get_model

- Wav2Vec2FABundle.get_model(with_star: bool = True, *, dl_kwargs=None) -> Module

Construct the model and load the pretrained weight.

The weight file is downloaded from the internet and cached with torch.hub.load_state_dict_from_url().

- Parameters:

with_star (bool, optional) – If enabled, the last dimension of the output layer is extended by one, which corresponds to the star token.

dl_kwargs (dictionary of keyword arguments) – Passed to torch.hub.load_state_dict_from_url().

- Returns:

Variation of Wav2Vec2Model.

Note

The model created with this method returns probabilities in the log domain (i.e. torch.nn.functional.log_softmax() is applied), whereas the other Wav2Vec2 models return logits.
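To make the difference between logits and log-domain probabilities concrete, here is a small pure-Python sketch of a log-softmax over one frame. It is a stand-in for illustration only and not the library's implementation: the `log_softmax` function below is written by hand, and the logit values are made up.

```python
import math


def log_softmax(logits):
    """Hand-written log-softmax for a single frame (illustrative stand-in)."""
    # Subtract the maximum before exponentiating for numerical stability.
    peak = max(logits)
    log_sum = peak + math.log(sum(math.exp(x - peak) for x in logits))
    return [x - log_sum for x in logits]


# Made-up logits for one frame over three classes.
frame_logits = [2.0, 0.5, -1.0]
log_probs = log_softmax(frame_logits)

# Exponentiating log-probabilities recovers a distribution that sums to 1.
probs = [math.exp(value) for value in log_probs]
print(log_probs)
print(sum(probs))
```

Log-probabilities are never positive and preserve the ranking of the logits, so the most likely class per frame is the same in either representation.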