Text-to-Speech with Tacotron2

Author: Yao-Yuan Yang, Moto Hira

import IPython

import matplotlib

import matplotlib.pyplot as plt

Overview

This tutorial shows how to build a text-to-speech pipeline using the pretrained Tacotron2 in torchaudio.

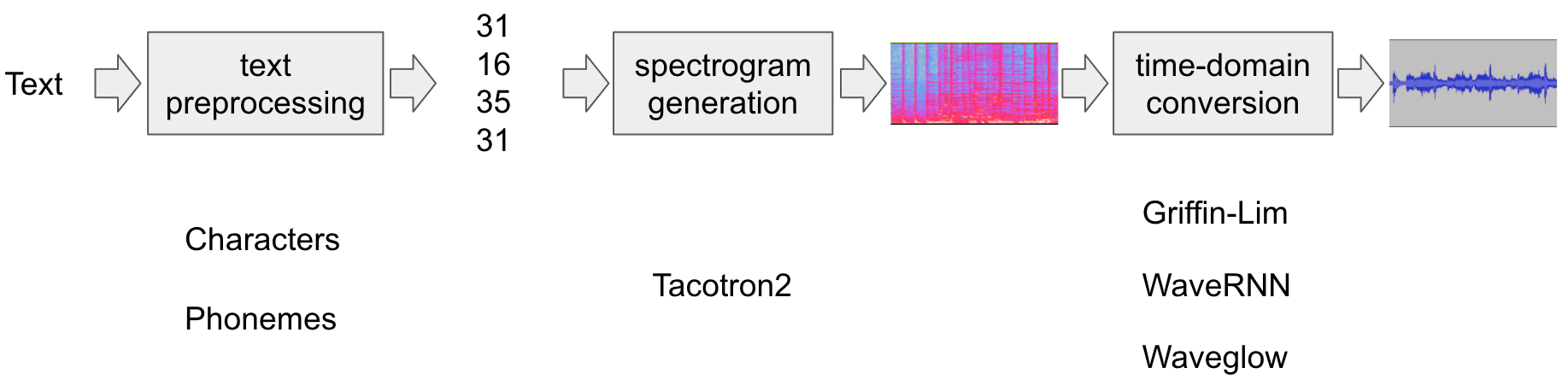

The text-to-speech pipeline goes as follows:

Text preprocessing

First, the input text is encoded into a list of symbols. In this tutorial, we will use English characters and phonemes as the symbols.

Spectrogram generation

From the encoded text, a spectrogram is generated. We use the Tacotron2 model for this.

Time-domain conversion

The last step is converting the spectrogram into a waveform. A model that generates speech from a spectrogram is called a vocoder. In this tutorial, three different vocoders are used: WaveRNN, Griffin-Lim, and Nvidia’s WaveGlow.

The following figure illustrates the whole process.

All the related components are bundled in torchaudio.pipelines.Tacotron2TTSBundle(), but this tutorial will also cover the process under the hood.

Preparation

First, we install the necessary dependencies. In addition to torchaudio, DeepPhonemizer is required to perform phoneme-based encoding.

# When running this example in notebook, install DeepPhonemizer

# !pip3 install deep_phonemizer

import torch

import torchaudio

matplotlib.rcParams["figure.figsize"] = [16.0, 4.8]

torch.random.manual_seed(0)

device = "cuda" if torch.cuda.is_available() else "cpu"

print(torch.__version__)

print(torchaudio.__version__)

print(device)

Out:

1.12.0

0.12.0

cpu

Text Processing

Character-based encoding

In this section, we will go through how character-based encoding works.

Since the pretrained Tacotron2 model expects a specific symbol table, the same functionality is available in torchaudio. This section mainly explains the basis of the encoding.

First, we define the set of symbols. For example, we can use '_-!\'(),.:;? abcdefghijklmnopqrstuvwxyz'. Then, we map each character of the input text to the index of the corresponding symbol in the table.

The following is an example of such processing. In the example, symbols that are not in the table are ignored.
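A minimal sketch of this mapping in plain Python, assuming the symbol string quoted above. The helper name `text_to_sequence` is illustrative and not a torchaudio API. The input is lowercased first, and characters outside the table are dropped.

```python
# Symbol table: the position of each character in the string is its integer ID.
symbols = "_-!'(),.:;? abcdefghijklmnopqrstuvwxyz"
look_up = {s: i for i, s in enumerate(symbols)}


def text_to_sequence(text):
    """Encode text as symbol indices, skipping characters not in the table."""
    return [look_up[c] for c in text.lower() if c in look_up]


text = "Hello world! Text to speech!"
print(text_to_sequence(text))
```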

Out:

[19, 16, 23, 23, 26, 11, 34, 26, 29, 23, 15, 2, 11, 31, 16, 35, 31, 11, 31, 26, 11, 30, 27, 16, 16, 14, 19, 2]

As mentioned above, the symbol table and indices must match what the pretrained Tacotron2 model expects. torchaudio provides the transform along with the pretrained model. For example, you can instantiate and use such a transform as follows.

Out:

tensor([[19, 16, 23, 23, 26, 11, 34, 26, 29, 23, 15, 2, 11, 31, 16, 35, 31, 11,

31, 26, 11, 30, 27, 16, 16, 14, 19, 2]])

tensor([28], dtype=torch.int32)

The processor object takes either a text or a list of texts as input. When a list of texts is provided, the returned lengths variable represents the valid length of each processed token sequence in the output batch.
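To make the role of the lengths concrete, here is a small plain-Python sketch of batching. It is not the torchaudio implementation: `encode_batch` is a hypothetical helper, and padding with index 0 is an assumption made for the example. Shorter sequences are padded on the right, and the lengths record how many entries in each row are real tokens.

```python
symbols = "_-!'(),.:;? abcdefghijklmnopqrstuvwxyz"
look_up = {s: i for i, s in enumerate(symbols)}


def encode_batch(texts, pad_id=0):
    """Encode one text or a list of texts into a padded batch plus valid lengths."""
    if isinstance(texts, str):
        texts = [texts]
    encoded = [[look_up[c] for c in t.lower() if c in look_up] for t in texts]
    lengths = [len(seq) for seq in encoded]
    max_len = max(lengths, default=0)
    # Pad shorter sequences on the right so every row has the same length.
    batch = [seq + [pad_id] * (max_len - len(seq)) for seq in encoded]
    return batch, lengths


batch, lengths = encode_batch(["Hello world!", "Hi!"])
print(batch)    # two rows of equal length; the second row is mostly padding
print(lengths)  # number of valid tokens in each row
```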

The intermediate representation can be retrieved as follows.
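The idea can be sketched in plain Python: slice the encoded row to its valid length and map each index back through the symbol table. The sketch assumes the symbol string from the character-based example rather than the processor's own token list.

```python
symbols = "_-!'(),.:;? abcdefghijklmnopqrstuvwxyz"

# Encoded indices and valid length taken from the output shown earlier.
encoded = [19, 16, 23, 23, 26, 11, 34, 26, 29, 23, 15, 2, 11, 31,
           16, 35, 31, 11, 31, 26, 11, 30, 27, 16, 16, 14, 19, 2]
valid_length = 28

# Map every index in the valid part of the row back to its symbol.
decoded = [symbols[i] for i in encoded[:valid_length]]
print(decoded)
```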

Out:

['h', 'e', 'l', 'l', 'o', ' ', 'w', 'o', 'r', 'l', 'd', '!', ' ', 't', 'e', 'x', 't', ' ', 't', 'o', ' ', 's', 'p', 'e', 'e', 'c', 'h', '!']

Phoneme-based encoding

Phoneme-based encoding is similar to character-based encoding, but it uses a symbol table based on phonemes and a G2P (Grapheme-to-Phoneme) model.

The details of the G2P model are outside the scope of this tutorial; we will just look at what the conversion looks like.

Similar to the case of character-based encoding, the encoding process is expected to match what the pretrained Tacotron2 model was trained on. torchaudio has an interface to create the process.

The following code illustrates how to create and use it. Behind the scenes, a G2P model is created using the DeepPhonemizer package, and the pretrained weights published by the author of DeepPhonemizer are fetched.

Out:

tensor([[54, 20, 65, 69, 11, 92, 44, 65, 38, 2, 11, 81, 40, 64, 79, 81, 11, 81,

20, 11, 79, 77, 59, 37, 2]])

tensor([25], dtype=torch.int32)

Notice that the encoded values are different from the example of character-based encoding.

The intermediate representation looks like the following.

Out:

['HH', 'AH', 'L', 'OW', ' ', 'W', 'ER', 'L', 'D', '!', ' ', 'T', 'EH', 'K', 'S', 'T', ' ', 'T', 'AH', ' ', 'S', 'P', 'IY', 'CH', '!']
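To see the shape of phoneme-based encoding without a G2P model, the toy sketch below replaces the model with a fixed lookup. Everything in it is an assumption for illustration: the dictionary `toy_g2p` covers only the words of the example sentence (with the phonemes shown in the output above), and the resulting indices do not match the table used by the pretrained model.

```python
import re

# Hypothetical miniature pronunciation dictionary (ARPAbet-style phonemes).
# A real pipeline uses a trained G2P model instead of a fixed lookup.
toy_g2p = {
    "hello": ["HH", "AH", "L", "OW"],
    "world": ["W", "ER", "L", "D"],
    "text": ["T", "EH", "K", "S", "T"],
    "to": ["T", "AH"],
    "speech": ["S", "P", "IY", "CH"],
}
punctuation = "!'(),.:;?"


def text_to_phonemes(text):
    """Split text into words, spaces, and punctuation, then look up each word."""
    tokens = re.findall(r"[a-z]+|[!'(),.:;? ]", text.lower())
    phonemes = []
    for tok in tokens:
        if tok in toy_g2p:
            phonemes.extend(toy_g2p[tok])
        elif not tok.isalpha():
            phonemes.append(tok)  # keep spaces and punctuation as symbols
        # Words missing from the toy dictionary are skipped.
    return phonemes


# Toy symbol table: padding symbol, punctuation, space, then the phonemes.
phoneme_set = sorted({p for ps in toy_g2p.values() for p in ps})
symbol_table = ["_"] + list(punctuation) + [" "] + phoneme_set
look_up = {s: i for i, s in enumerate(symbol_table)}

phonemes = text_to_phonemes("Hello world! Text to speech!")
ids = [look_up[p] for p in phonemes]
print(phonemes)
print(ids)
```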

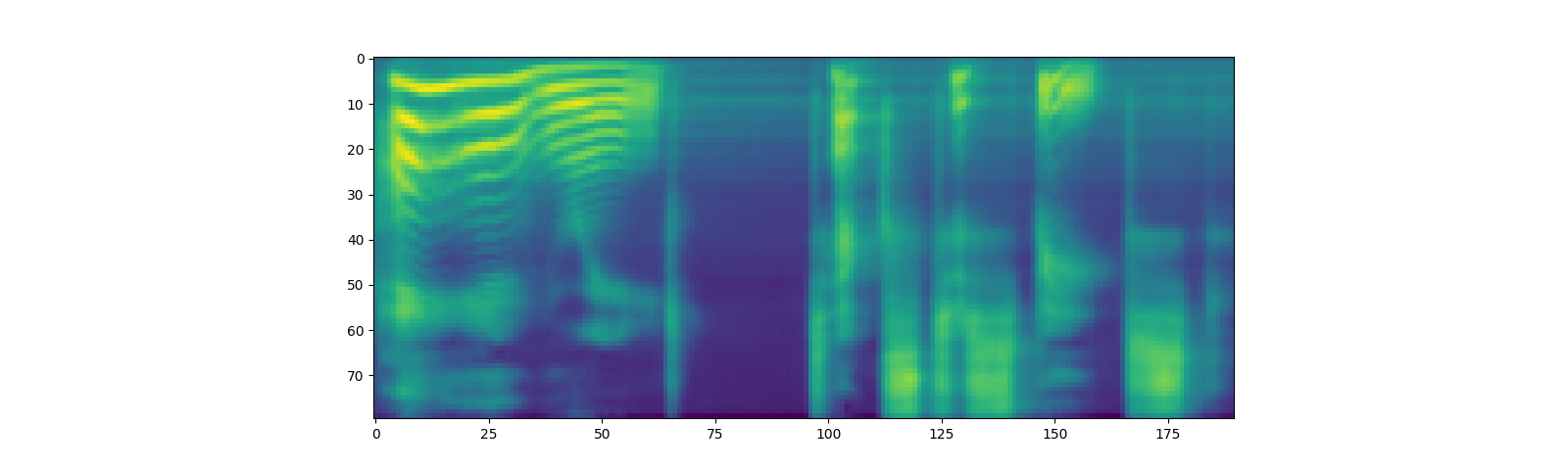

Spectrogram Generation

Tacotron2 is the model we use to generate a spectrogram from the encoded text. For details of the model, please refer to the paper.

It is easy to instantiate a Tacotron2 model with pretrained weights. Note, however, that the input to a Tacotron2 model needs to be processed by the matching text processor.

torchaudio.pipelines.Tacotron2TTSBundle() bundles the matching models and processors together so that it is easy to create the pipeline.

For the available bundles and their usage, please refer to torchaudio.pipelines.

bundle = torchaudio.pipelines.TACOTRON2_WAVERNN_PHONE_LJSPEECH

processor = bundle.get_text_processor()

tacotron2 = bundle.get_tacotron2().to(device)

text = "Hello world! Text to speech!"

with torch.inference_mode():

    processed, lengths = processor(text)

    processed = processed.to(device)

    lengths = lengths.to(device)

    spec, _, _ = tacotron2.infer(processed, lengths)

plt.imshow(spec[0].cpu().detach())

Out:

Downloading: "https://download.pytorch.org/torchaudio/models/tacotron2_english_phonemes_1500_epochs_wavernn_ljspeech.pth" to /root/.cache/torch/hub/checkpoints/tacotron2_english_phonemes_1500_epochs_wavernn_ljspeech.pth

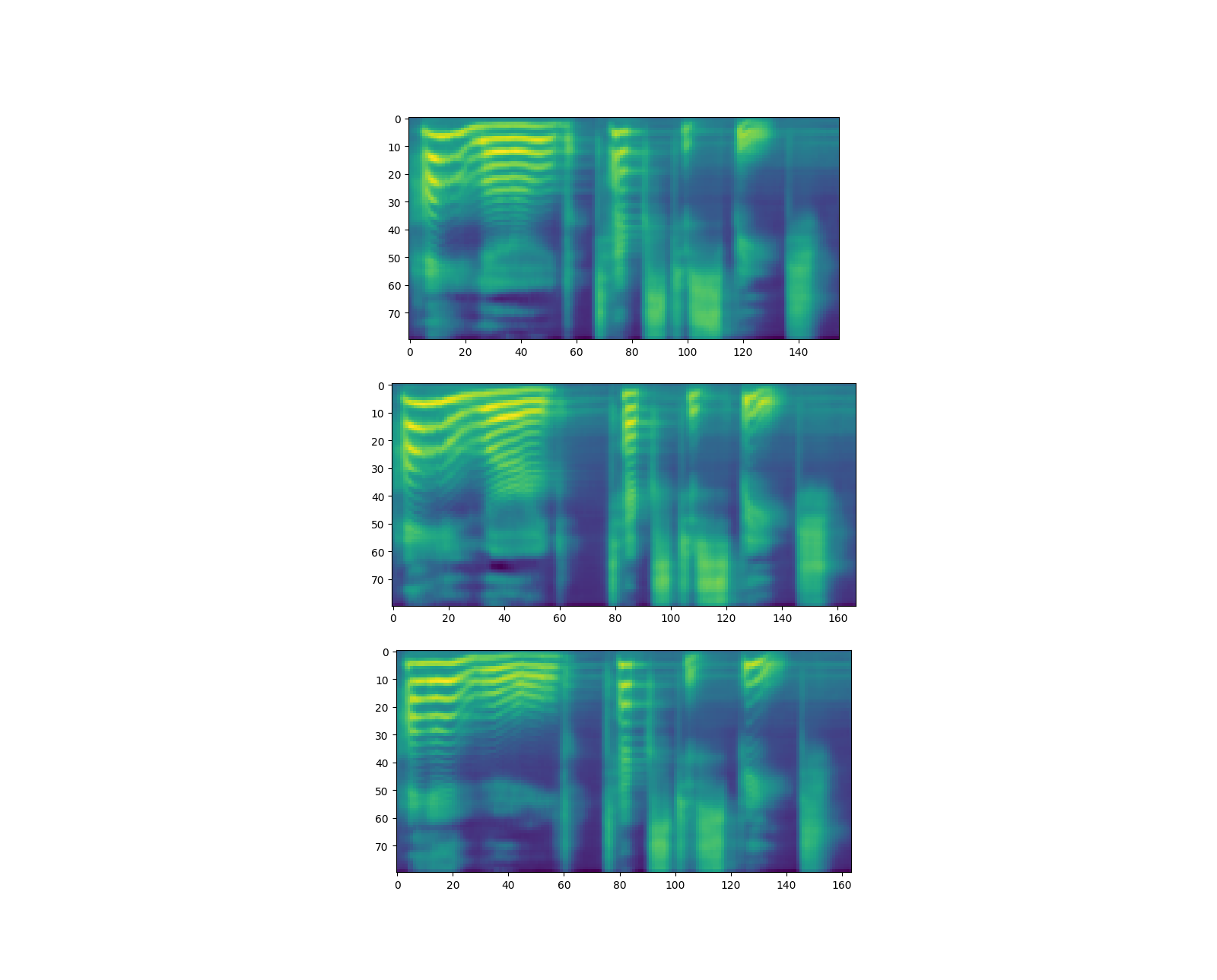

Note that the Tacotron2.infer method performs multinomial sampling; therefore, the process of generating the spectrogram incurs randomness.

Out:

torch.Size([80, 155])

torch.Size([80, 167])

torch.Size([80, 164])
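The differing spectrogram lengths above are a consequence of sampling. As a generic illustration (this is not the Tacotron2 decoder), the sketch below draws from a fixed categorical distribution with Python's `random` module; the probabilities and the seed are made up for the example. Successive draws from one generator generally differ, while re-seeding reproduces a draw exactly.

```python
import random

# Made-up categorical distribution over four outcomes.
probs = [0.1, 0.2, 0.3, 0.4]


def sample_sequence(rng, length=10):
    """Draw `length` outcomes from the categorical distribution `probs`."""
    return rng.choices(range(len(probs)), weights=probs, k=length)


rng = random.Random(0)
first = sample_sequence(rng)
second = sample_sequence(rng)  # same generator, new draws

# A fresh generator with the same seed replays the first draw.
replay = sample_sequence(random.Random(0))

print(first)
print(second)
print(replay == first)
```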

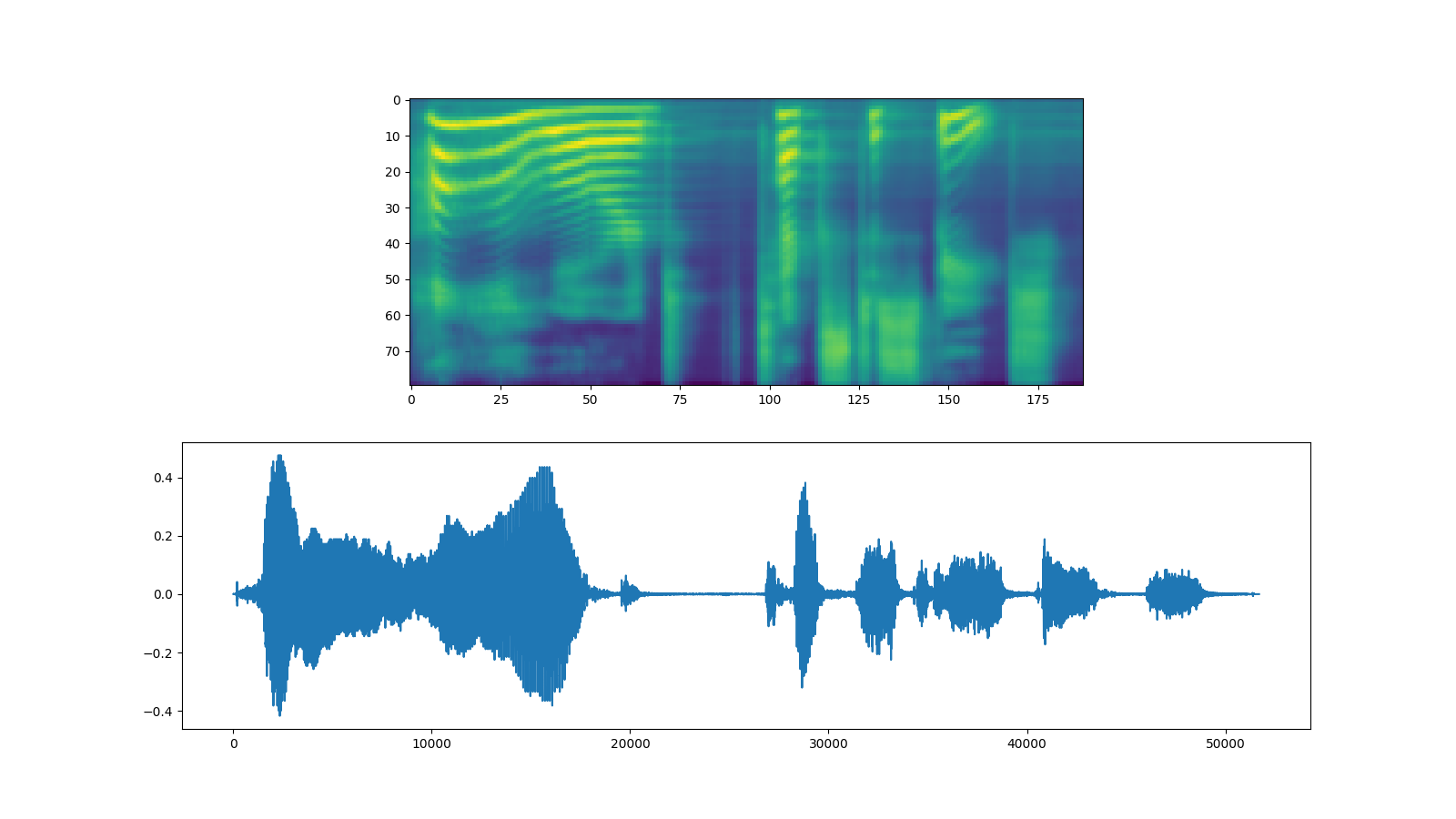

Waveform Generation

Once the spectrogram is generated, the last step is to recover the waveform from the spectrogram.

torchaudio provides vocoders based on GriffinLim and WaveRNN.

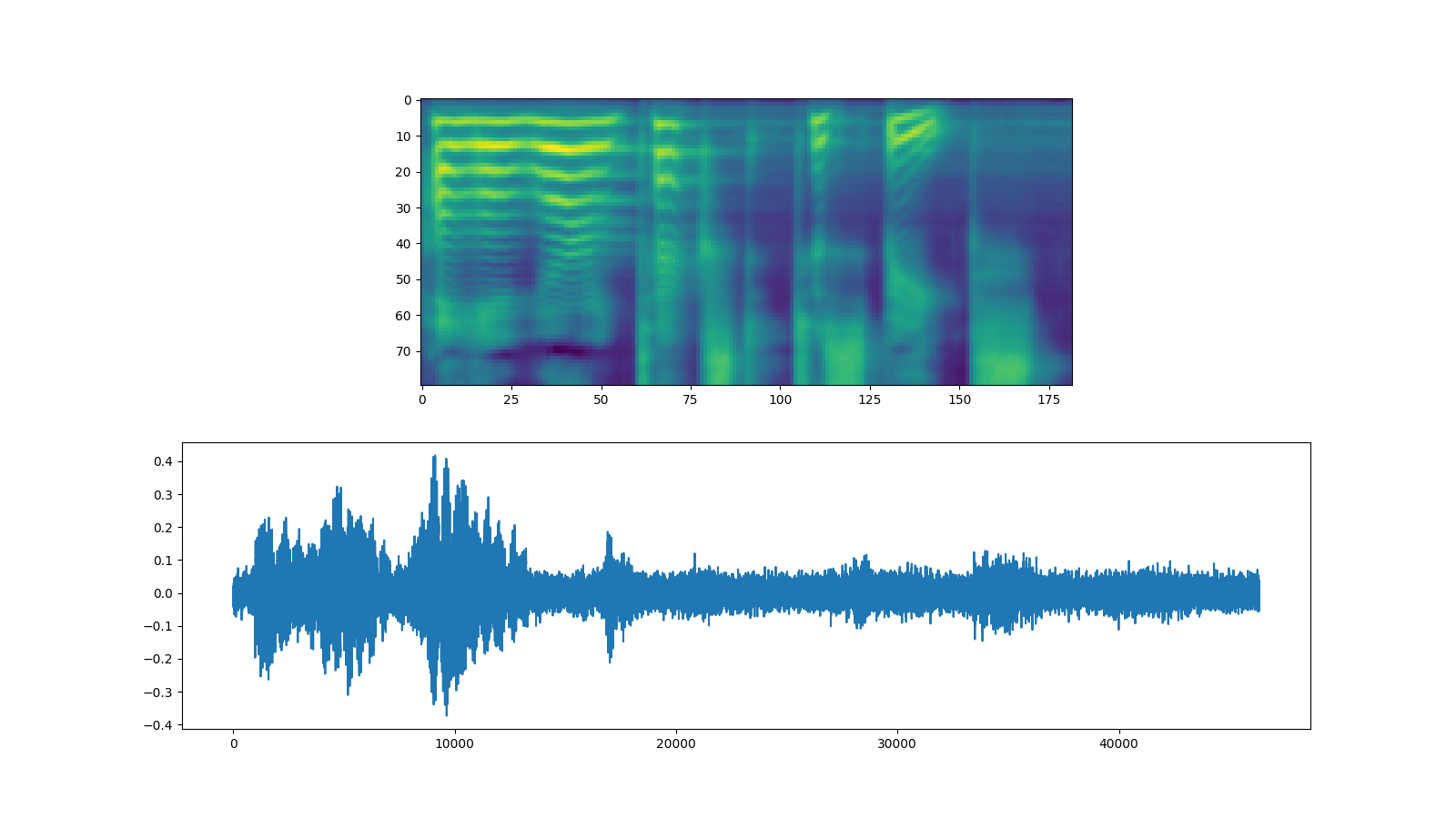

WaveRNN

Continuing from the previous section, we can instantiate the matching WaveRNN model from the same bundle.

bundle = torchaudio.pipelines.TACOTRON2_WAVERNN_PHONE_LJSPEECH

processor = bundle.get_text_processor()

tacotron2 = bundle.get_tacotron2().to(device)

vocoder = bundle.get_vocoder().to(device)

text = "Hello world! Text to speech!"

with torch.inference_mode():

    processed, lengths = processor(text)

    processed = processed.to(device)

    lengths = lengths.to(device)

    spec, spec_lengths, _ = tacotron2.infer(processed, lengths)

    waveforms, lengths = vocoder(spec, spec_lengths)

fig, [ax1, ax2] = plt.subplots(2, 1, figsize=(16, 9))

ax1.imshow(spec[0].cpu().detach())

ax2.plot(waveforms[0].cpu().detach())

torchaudio.save("_assets/output_wavernn.wav", waveforms[0:1].cpu(), sample_rate=vocoder.sample_rate)

IPython.display.Audio("_assets/output_wavernn.wav")

Out:

Downloading: "https://download.pytorch.org/torchaudio/models/wavernn_10k_epochs_8bits_ljspeech.pth" to /root/.cache/torch/hub/checkpoints/wavernn_10k_epochs_8bits_ljspeech.pth

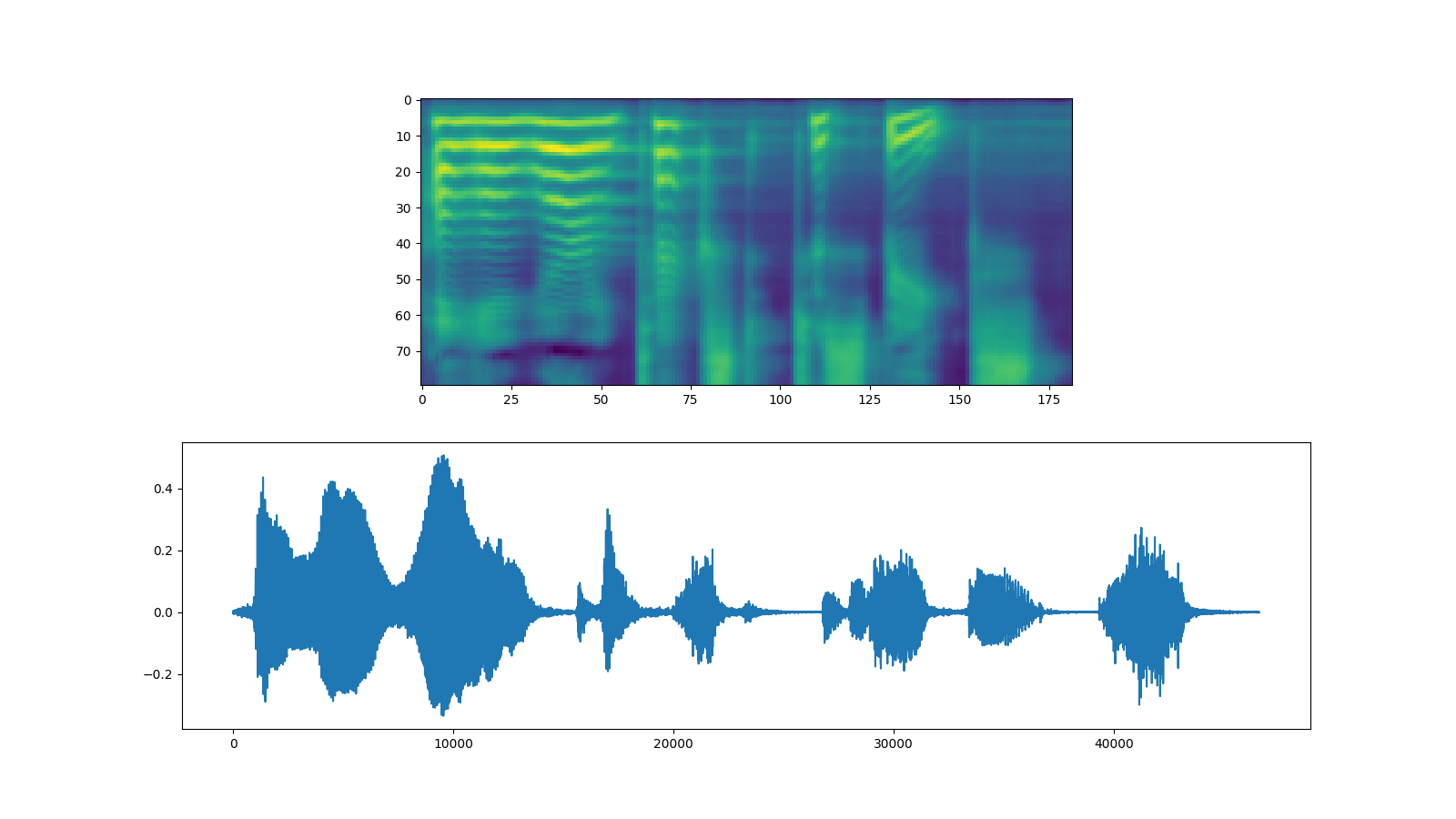

Griffin-Lim

Using the Griffin-Lim vocoder is the same as using WaveRNN. You can instantiate the vocoder object with the get_vocoder method and pass the spectrogram.

bundle = torchaudio.pipelines.TACOTRON2_GRIFFINLIM_PHONE_LJSPEECH

processor = bundle.get_text_processor()

tacotron2 = bundle.get_tacotron2().to(device)

vocoder = bundle.get_vocoder().to(device)

with torch.inference_mode():

    processed, lengths = processor(text)

    processed = processed.to(device)

    lengths = lengths.to(device)

    spec, spec_lengths, _ = tacotron2.infer(processed, lengths)

    waveforms, lengths = vocoder(spec, spec_lengths)

fig, [ax1, ax2] = plt.subplots(2, 1, figsize=(16, 9))

ax1.imshow(spec[0].cpu().detach())

ax2.plot(waveforms[0].cpu().detach())

torchaudio.save(

    "_assets/output_griffinlim.wav",

    waveforms[0:1].cpu(),

    sample_rate=vocoder.sample_rate,

)

IPython.display.Audio("_assets/output_griffinlim.wav")

Out:

Downloading: "https://download.pytorch.org/torchaudio/models/tacotron2_english_phonemes_1500_epochs_ljspeech.pth" to /root/.cache/torch/hub/checkpoints/tacotron2_english_phonemes_1500_epochs_ljspeech.pth

Waveglow

Waveglow is a vocoder published by Nvidia. The pretrained weights are published on Torch Hub. One can instantiate the model using the torch.hub module.

# Workaround to load model mapped on GPU

# https://stackoverflow.com/a/61840832

waveglow = torch.hub.load(

    "NVIDIA/DeepLearningExamples:torchhub",

    "nvidia_waveglow",

    model_math="fp32",

    pretrained=False,

)

checkpoint = torch.hub.load_state_dict_from_url(

    "https://api.ngc.nvidia.com/v2/models/nvidia/waveglowpyt_fp32/versions/1/files/nvidia_waveglowpyt_fp32_20190306.pth",  # noqa: E501

    progress=False,

    map_location=device,

)

state_dict = {key.replace("module.", ""): value for key, value in checkpoint["state_dict"].items()}

waveglow.load_state_dict(state_dict)

waveglow = waveglow.remove_weightnorm(waveglow)

waveglow = waveglow.to(device)

waveglow.eval()

with torch.no_grad():

    waveforms = waveglow.infer(spec)

fig, [ax1, ax2] = plt.subplots(2, 1, figsize=(16, 9))

ax1.imshow(spec[0].cpu().detach())

ax2.plot(waveforms[0].cpu().detach())

torchaudio.save("_assets/output_waveglow.wav", waveforms[0:1].cpu(), sample_rate=22050)

IPython.display.Audio("_assets/output_waveglow.wav")

Out:

/usr/local/envs/python3.8/lib/python3.8/site-packages/torch/hub.py:266: UserWarning: You are about to download and run code from an untrusted repository. In a future release, this won't be allowed. To add the repository to your trusted list, change the command to {calling_fn}(..., trust_repo=False) and a command prompt will appear asking for an explicit confirmation of trust, or load(..., trust_repo=True), which will assume that the prompt is to be answered with 'yes'. You can also use load(..., trust_repo='check') which will only prompt for confirmation if the repo is not already trusted. This will eventually be the default behaviour

warnings.warn(

Downloading: "https://github.com/NVIDIA/DeepLearningExamples/zipball/torchhub" to /root/.cache/torch/hub/torchhub.zip

/root/.cache/torch/hub/NVIDIA_DeepLearningExamples_torchhub/PyTorch/Classification/ConvNets/image_classification/models/common.py:13: UserWarning: pytorch_quantization module not found, quantization will not be available

warnings.warn(

/root/.cache/torch/hub/NVIDIA_DeepLearningExamples_torchhub/PyTorch/Classification/ConvNets/image_classification/models/efficientnet.py:17: UserWarning: pytorch_quantization module not found, quantization will not be available

warnings.warn(

Downloading: "https://api.ngc.nvidia.com/v2/models/nvidia/waveglowpyt_fp32/versions/1/files/nvidia_waveglowpyt_fp32_20190306.pth" to /root/.cache/torch/hub/checkpoints/nvidia_waveglowpyt_fp32_20190306.pth
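The key renaming in the WaveGlow loading code can be tried in isolation. Checkpoints saved from a model wrapped in torch.nn.DataParallel typically carry a `module.` prefix on every parameter name, which has to be removed before the weights fit an unwrapped model. The sketch below uses a made-up dictionary in place of `checkpoint["state_dict"]`; the key names are hypothetical. It also shows a prefix-only variant, since `str.replace` removes every occurrence of the substring and not just a leading one.

```python
# Toy stand-in for checkpoint["state_dict"]; the key names are made up.
checkpoint_state = {
    "module.upsample.weight": 1,
    "module.block.module.weight": 2,
    "end.weight": 3,
}

prefix = "module."

# Same expression as in the tutorial: removes every occurrence of "module.".
stripped = {key.replace(prefix, ""): value for key, value in checkpoint_state.items()}

# Variant that removes only a leading "module." and leaves inner ones alone.
prefix_only = {
    (key[len(prefix):] if key.startswith(prefix) else key): value
    for key, value in checkpoint_state.items()
}

print(sorted(stripped))
print(sorted(prefix_only))
```

For checkpoints whose inner names never contain `module.`, both variants give the same result.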

Total running time of the script: ( 5 minutes 32.190 seconds)