SE-ResNeXt101

Model Description

The SE-ResNeXt101-32x4d is a ResNeXt101-32x4d model with an added Squeeze-and-Excitation module, introduced in the Squeeze-and-Excitation Networks paper.

This model is trained with mixed precision using Tensor Cores on Volta, Turing, and the NVIDIA Ampere GPU architectures. Therefore, researchers can get results 3x faster than training without Tensor Cores, while experiencing the benefits of mixed precision training. This model is tested against each NGC monthly container release to ensure consistent accuracy and performance over time.
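As a rough illustration of what mixed precision means in PyTorch, the sketch below runs a forward pass under `torch.autocast`. The small linear layer is a hypothetical stand-in, not the training script used for this model, and the dtype choice (float16 on CUDA, bfloat16 on CPU) is an assumption made so the snippet runs on any machine.

```python
import torch
import torch.nn as nn

# Pick a reduced-precision dtype that autocast supports on the current device.
device_type = "cuda" if torch.cuda.is_available() else "cpu"
amp_dtype = torch.float16 if device_type == "cuda" else torch.bfloat16

# Hypothetical stand-in layer; the real network is far larger.
model = nn.Linear(16, 4).to(device_type)
x = torch.randn(8, 16, device=device_type)

# Inside the autocast region, eligible ops such as matrix multiplies
# run in reduced precision while the parameters stay in float32.
with torch.autocast(device_type=device_type, dtype=amp_dtype):
    y = model(x)

print(model.weight.dtype)  # parameters remain float32
print(y.dtype)             # activations come out in amp_dtype
```

Training in float16 is usually paired with loss scaling (for example PyTorch's `GradScaler`) to avoid gradient underflow; that part is left out of this sketch.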

We use the NHWC data layout when training with mixed precision.
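In PyTorch, NHWC is typically expressed through the `torch.channels_last` memory format: the tensor keeps its logical (N, C, H, W) shape, and only the strides change. The snippet below is a minimal sketch of that idea on a toy tensor and a toy convolution; whether the training code for this model uses exactly this mechanism is an assumption.

```python
import torch

x = torch.randn(2, 3, 4, 5)  # logical shape is (N, C, H, W)
x_nhwc = x.contiguous(memory_format=torch.channels_last)

# The shape is unchanged; only the memory order differs.
print(x_nhwc.shape)     # torch.Size([2, 3, 4, 5])
print(x.stride())       # (60, 20, 5, 1)  -> channels vary slowest within an image
print(x_nhwc.stride())  # (60, 1, 15, 3)  -> channels vary fastest (NHWC)

# Modules can be converted the same way so that weights match the layout.
conv = torch.nn.Conv2d(3, 8, kernel_size=3).to(memory_format=torch.channels_last)
out = conv(x_nhwc)
```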

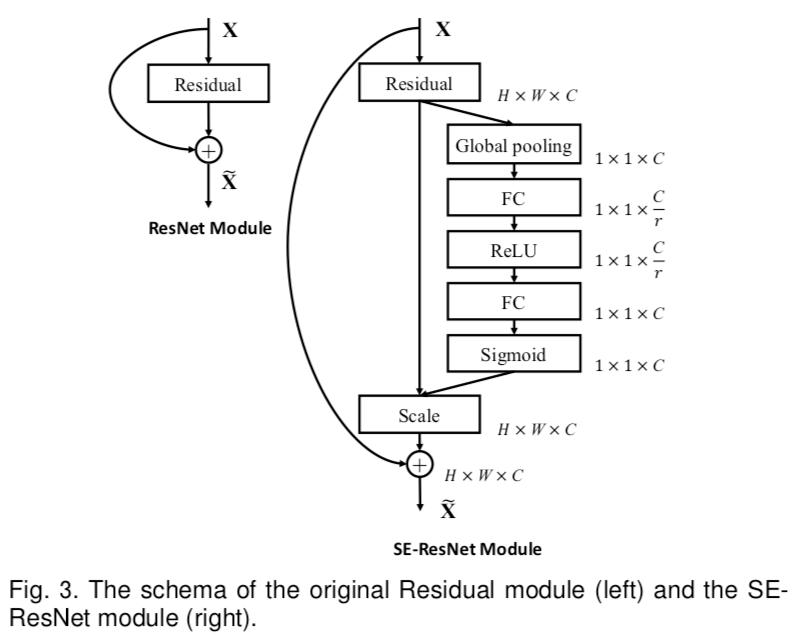

Model architecture

Image source: Squeeze-and-Excitation Networks

The image shows the architecture of the SE block and where it is placed in the ResNet bottleneck block.
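The SE block and its placement can be sketched in a few lines of PyTorch. This is a simplified illustration, not the implementation shipped with the pretrained model: the class names `SEBlock` and `SEBottleneck`, the reduction ratio of 16, and the layer sizes in the usage lines are assumptions chosen for readability.

```python
import torch
import torch.nn as nn


class SEBlock(nn.Module):
    """Squeeze-and-Excitation: reweight channels using global context."""

    def __init__(self, channels: int, reduction: int = 16):
        super().__init__()
        hidden = max(channels // reduction, 1)
        # Squeeze: global average pooling, (N, C, H, W) -> (N, C, 1, 1).
        self.pool = nn.AdaptiveAvgPool2d(1)
        # Excitation: bottleneck of two 1x1 convolutions ending in a sigmoid gate.
        self.fc = nn.Sequential(
            nn.Conv2d(channels, hidden, kernel_size=1),
            nn.ReLU(inplace=True),
            nn.Conv2d(hidden, channels, kernel_size=1),
            nn.Sigmoid(),
        )

    def forward(self, x):
        scale = self.fc(self.pool(x))  # per-channel weights in [0, 1]
        return x * scale               # broadcast over H and W


class SEBottleneck(nn.Module):
    """Simplified ResNeXt-style bottleneck with SE applied before the skip addition."""

    def __init__(self, channels: int, width: int, groups: int = 32, reduction: int = 16):
        super().__init__()
        self.branch = nn.Sequential(
            nn.Conv2d(channels, width, kernel_size=1, bias=False),
            nn.BatchNorm2d(width),
            nn.ReLU(inplace=True),
            # Grouped 3x3 convolution: `groups` is the ResNeXt cardinality.
            nn.Conv2d(width, width, kernel_size=3, padding=1, groups=groups, bias=False),
            nn.BatchNorm2d(width),
            nn.ReLU(inplace=True),
            nn.Conv2d(width, channels, kernel_size=1, bias=False),
            nn.BatchNorm2d(channels),
            SEBlock(channels, reduction),
        )
        self.relu = nn.ReLU(inplace=True)

    def forward(self, x):
        return self.relu(self.branch(x) + x)


# Small sizes so the sketch runs quickly on CPU.
block = SEBottleneck(channels=64, width=32, groups=4).eval()
x = torch.randn(2, 64, 8, 8)
with torch.no_grad():
    y = block(x)
print(y.shape)  # torch.Size([2, 64, 8, 8])
```

The key design point is that the SE gate rescales the residual branch only; the identity path is added afterwards, unchanged.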

Note that the SE-ResNeXt101-32x4d model can be deployed for inference on the NVIDIA Triton Inference Server using TorchScript, ONNX Runtime, or TensorRT as an execution backend. For details, see NGC.
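For the TorchScript route, the first step is usually to trace the model into a serialized module. The sketch below shows only that export step on a hypothetical stand-in network, saving to an in-memory buffer instead of a file; the server-side configuration is not covered here, and the exact export procedure recommended for this model should be taken from NGC.

```python
import io

import torch
import torch.nn as nn

# Hypothetical stand-in network; in practice this would be the loaded model.
model = nn.Sequential(
    nn.Conv2d(3, 8, kernel_size=3, padding=1),
    nn.ReLU(),
    nn.AdaptiveAvgPool2d(1),
    nn.Flatten(),
    nn.Linear(8, 10),
).eval()
example = torch.randn(1, 3, 32, 32)

# Trace the model with an example input and serialize the result.
traced = torch.jit.trace(model, example)
buffer = io.BytesIO()
torch.jit.save(traced, buffer)

# Reload the serialized module and check that it reproduces the original output.
buffer.seek(0)
restored = torch.jit.load(buffer)
with torch.no_grad():
    same = torch.allclose(model(example), restored(example), atol=1e-5)
print(same)
```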

Example

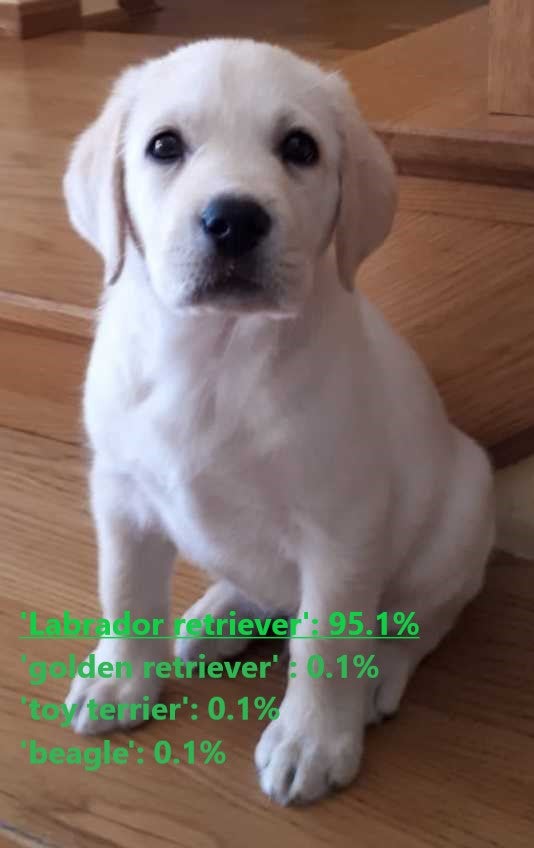

In the example below we will use the pretrained SE-ResNeXt101-32x4d model to perform inference on images and present the result.

To run the example, you need some extra Python packages installed. These are needed for image preprocessing and visualization.

!pip install validators matplotlib

import torch
from PIL import Image
import torchvision.transforms as transforms
import numpy as np
import json
import requests
import matplotlib.pyplot as plt
import warnings

warnings.filterwarnings('ignore')
%matplotlib inline

device = torch.device("cuda") if torch.cuda.is_available() else torch.device("cpu")
print(f'Using {device} for inference')

Load the model pretrained on the ImageNet dataset.

resneXt = torch.hub.load('NVIDIA/DeepLearningExamples:torchhub', 'nvidia_se_resnext101_32x4d')
utils = torch.hub.load('NVIDIA/DeepLearningExamples:torchhub', 'nvidia_convnets_processing_utils')

resneXt.eval().to(device)

Prepare sample input data.

uris = [
    'http://images.cocodataset.org/test-stuff2017/000000024309.jpg',
    'http://images.cocodataset.org/test-stuff2017/000000028117.jpg',
    'http://images.cocodataset.org/test-stuff2017/000000006149.jpg',
    'http://images.cocodataset.org/test-stuff2017/000000004954.jpg',
]

batch = torch.cat(
    [utils.prepare_input_from_uri(uri) for uri in uris]
).to(device)

Run inference. Use the pick_n_best(predictions=output, n=topN) helper function to pick the N most probable hypotheses according to the model.

with torch.no_grad():
    output = torch.nn.functional.softmax(resneXt(batch), dim=1)

results = utils.pick_n_best(predictions=output, n=5)
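The softmax-then-select step can be illustrated on toy data. The function `top_n` below is a hypothetical stand-in written for this sketch, not the source of `pick_n_best`, and the three labels are made up; it assumes the helper simply returns the highest-probability classes per image.

```python
import torch


def top_n(probabilities, labels, n):
    """Return the n most probable (label, probability) pairs for each row."""
    values, indices = torch.topk(probabilities, k=n, dim=1)
    return [
        [(labels[i], p) for i, p in zip(idx_row.tolist(), val_row.tolist())]
        for idx_row, val_row in zip(indices, values)
    ]


# Toy logits for two "images" over three hypothetical classes.
logits = torch.tensor([[1.0, 3.0, 2.0], [0.5, 0.1, 2.0]])
labels = ["cat", "dog", "bird"]

# Softmax over dim=1 turns each row of logits into a probability distribution.
probs = torch.nn.functional.softmax(logits, dim=1)

for row in top_n(probs, labels, n=2):
    print([(label, f"{p:.1%}") for label, p in row])
```

For the first row the ranking is dog then bird, and for the second bird then cat, which follows directly from the ordering of the logits because softmax preserves order.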

Display the result.

for uri, result in zip(uris, results):
    img = Image.open(requests.get(uri, stream=True).raw)
    # Image.ANTIALIAS was removed in Pillow 10; Image.LANCZOS is the same filter.
    img.thumbnail((256, 256), Image.LANCZOS)
    plt.imshow(img)
    plt.show()
    print(result)

Details

For detailed information on model input and output, training recipes, inference, and performance, visit GitHub and/or NGC.

References