fcn_resnet50


torchvision.models.segmentation.fcn_resnet50(*, weights: Optional[torchvision.models.segmentation.fcn.FCN_ResNet50_Weights] = None, progress: bool = True, num_classes: Optional[int] = None, aux_loss: Optional[bool] = None, weights_backbone: Optional[torchvision.models.resnet.ResNet50_Weights] = ResNet50_Weights.IMAGENET1K_V1, **kwargs: Any) → torchvision.models.segmentation.fcn.FCN

Fully-Convolutional Network model with a ResNet-50 backbone from the Fully Convolutional Networks for Semantic Segmentation paper.

Warning

The segmentation module is in Beta stage, and backward compatibility is not guaranteed.

- Parameters

  - weights (FCN_ResNet50_Weights, optional) – The pretrained weights to use. See FCN_ResNet50_Weights below for more details and possible values. By default, no pre-trained weights are used.
  - progress (bool, optional) – If True, displays a progress bar of the download to stderr. Default is True.
  - num_classes (int, optional) – Number of output classes of the model (including the background).
  - aux_loss (bool, optional) – If True, it uses an auxiliary loss.
  - weights_backbone (ResNet50_Weights, optional) – The pretrained weights for the backbone.
  - **kwargs – Parameters passed to the torchvision.models.segmentation.fcn.FCN base class. Please refer to the source code for more details about this class.

class torchvision.models.segmentation.FCN_ResNet50_Weights(value)

The model builder above accepts the following values as the weights parameter. FCN_ResNet50_Weights.DEFAULT is equivalent to FCN_ResNet50_Weights.COCO_WITH_VOC_LABELS_V1. You can also use strings, e.g. weights='DEFAULT' or weights='COCO_WITH_VOC_LABELS_V1'.

FCN_ResNet50_Weights.COCO_WITH_VOC_LABELS_V1:

These weights were trained on a subset of COCO, using only the 20 categories that are present in the Pascal VOC dataset. Also available as FCN_ResNet50_Weights.DEFAULT.

| Metadata | Value |
|---|---|
| miou (on COCO-val2017-VOC-labels) | 60.5 |
| pixel_acc (on COCO-val2017-VOC-labels) | 91.4 |
| categories | __background__, aeroplane, bicycle, … (18 omitted) |
| min_size | height=1, width=1 |
| num_params | 35322218 |
| recipe | |

The inference transforms are available at FCN_ResNet50_Weights.COCO_WITH_VOC_LABELS_V1.transforms and perform the following preprocessing operations: Accepts PIL.Image, batched (B, C, H, W) and single (C, H, W) image torch.Tensor objects. The images are resized to resize_size=[520] using interpolation=InterpolationMode.BILINEAR. Finally, the values are first rescaled to [0.0, 1.0] and then normalized using mean=[0.485, 0.456, 0.406] and std=[0.229, 0.224, 0.225].

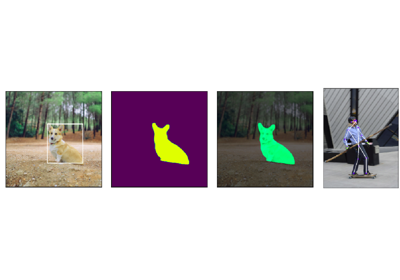

Examples using fcn_resnet50: