ignite.contrib.handlers

Contribution module of handlers

custom_events

- class ignite.contrib.handlers.custom_events.CustomPeriodicEvent(n_iterations=None, n_epochs=None)[source]

DEPRECATED. Use filtered events instead. Handler to define custom periodic events as a number of elapsed iterations/epochs for an engine.

When a custom periodic event is created and attached to an engine, the following events are fired:

1) If K iterations is specified:

- Events.ITERATIONS_<K>_STARTED
- Events.ITERATIONS_<K>_COMPLETED

2) If K epochs is specified:

- Events.EPOCHS_<K>_STARTED
- Events.EPOCHS_<K>_COMPLETED

Examples:

    from ignite.engine import Engine, Events
    from ignite.contrib.handlers import CustomPeriodicEvent

    # Let's define an event every 1000 iterations
    cpe1 = CustomPeriodicEvent(n_iterations=1000)
    cpe1.attach(trainer)

    # Let's define an event every 10 epochs
    cpe2 = CustomPeriodicEvent(n_epochs=10)
    cpe2.attach(trainer)

    @trainer.on(cpe1.Events.ITERATIONS_1000_COMPLETED)
    def on_every_1000_iterations(engine):
        # run a computation after 1000 iterations
        # ...
        print(engine.state.iterations_1000)

    @trainer.on(cpe2.Events.EPOCHS_10_STARTED)
    def on_every_10_epochs(engine):
        # run a computation every 10 epochs
        # ...
        print(engine.state.epochs_10)

param_scheduler

- ConcatScheduler – Concat a list of parameter schedulers.
- CosineAnnealingScheduler – Anneals 'start_value' to 'end_value' over each cycle.
- CyclicalScheduler – An abstract class for updating an optimizer's parameter value over a cycle of some size.
- LRScheduler – A wrapper class to call torch.optim.lr_scheduler objects as ignite handlers.
- LinearCyclicalScheduler – Linearly adjusts param value to 'end_value' for a half-cycle, then linearly adjusts it back to 'start_value' for a half-cycle.
- ParamGroupScheduler – Scheduler helper to group multiple schedulers into one.
- ParamScheduler – An abstract class for updating an optimizer's parameter value during training.
- PiecewiseLinear – Piecewise linear parameter scheduler.
- create_lr_scheduler_with_warmup – Helper method to create a learning rate scheduler with a linear warm-up.

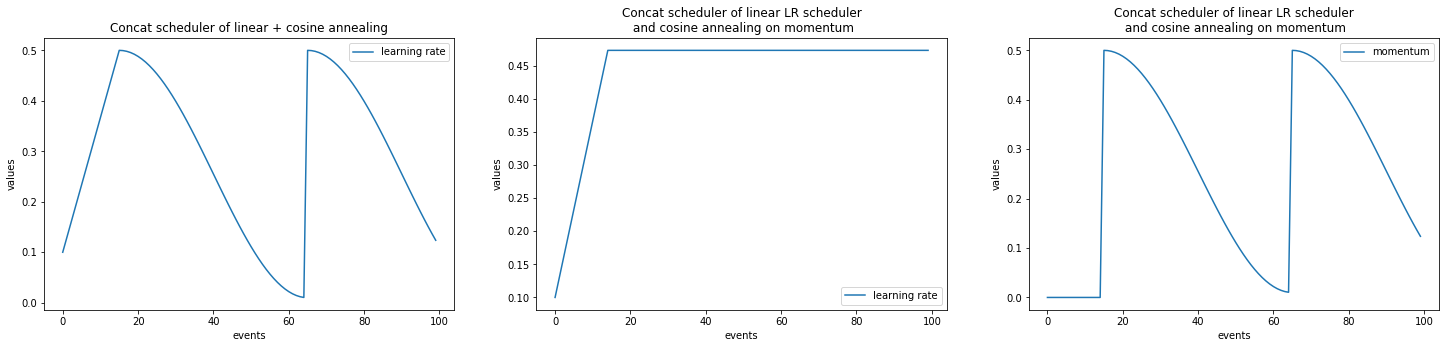

- class ignite.contrib.handlers.param_scheduler.ConcatScheduler(schedulers, durations, save_history=False)[source]

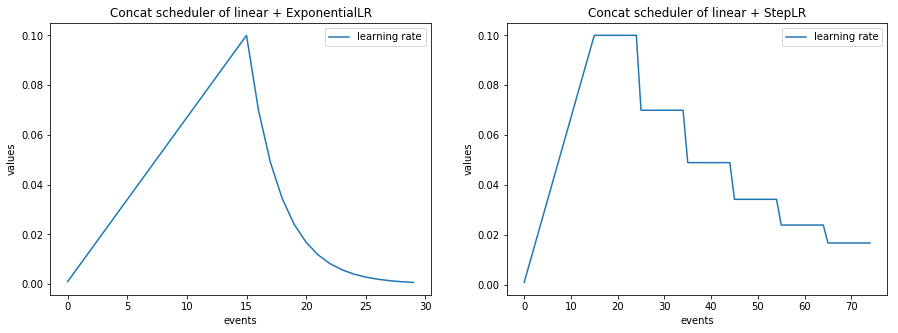

Concat a list of parameter schedulers.

The ConcatScheduler goes through the list of schedulers given by schedulers. The duration of each scheduler is defined by the durations list of integers.

- Parameters

schedulers (List[ParamScheduler]) – list of parameter schedulers.

durations (List[int]) – list of the number of events that each parameter scheduler from schedulers lasts.

save_history (bool) – whether to log the parameter values to engine.state.param_history, (default=False).

Examples:

    from ignite.contrib.handlers.param_scheduler import ConcatScheduler
    from ignite.contrib.handlers.param_scheduler import LinearCyclicalScheduler
    from ignite.contrib.handlers.param_scheduler import CosineAnnealingScheduler

    scheduler_1 = LinearCyclicalScheduler(optimizer, "lr", start_value=0.1, end_value=0.5, cycle_size=60)
    scheduler_2 = CosineAnnealingScheduler(optimizer, "lr", start_value=0.5, end_value=0.01, cycle_size=60)

    combined_scheduler = ConcatScheduler(schedulers=[scheduler_1, scheduler_2], durations=[30, ])
    trainer.add_event_handler(Events.ITERATION_STARTED, combined_scheduler)
    #
    # Sets the Learning rate linearly from 0.1 to 0.5 over 30 iterations. Then
    # starts an annealing schedule from 0.5 to 0.01 over 60 iterations.
    # The annealing cycles are repeated indefinitely.
    #
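How `durations` divides events between schedulers can be illustrated without ignite. The following is a minimal sketch, assuming each schedule is a plain function of its own local event index and that the last schedule takes all remaining events. The names `concat_values`, `ramp` and `flat` are hypothetical and not part of the library.

```python
from typing import Callable, List, Sequence

Schedule = Callable[[int], float]  # maps a local event index to a parameter value


def concat_values(schedules: Sequence[Schedule], durations: Sequence[int], num_events: int) -> List[float]:
    """Run each schedule for its duration; the last schedule takes all remaining events."""
    if len(durations) != len(schedules) - 1:
        raise ValueError("expected one duration per schedule except the last")
    values = []
    current, local_index = 0, 0
    for _ in range(num_events):
        # Move on to the next schedule once the current one has used up its duration.
        if current < len(durations) and local_index >= durations[current]:
            current, local_index = current + 1, 0
        values.append(schedules[current](local_index))
        local_index += 1
    return values


def ramp(i: int) -> float:
    # Hypothetical first schedule: slow linear ramp.
    return 0.1 + 0.01 * i


def flat(i: int) -> float:
    # Hypothetical second schedule: constant value.
    return 0.5


values = concat_values([ramp, flat], durations=[3], num_events=5)
```

Here the ramp supplies the first three values and the constant schedule supplies the rest, which mirrors the single-entry `durations=[30, ]` in the example above.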

- get_param()[source]

Method to get current optimizer’s parameter values

- load_state_dict(state_dict)[source]

Copies parameters from state_dict into this ConcatScheduler.

- Parameters

state_dict (Mapping) – a dict containing parameters.

- Return type

None

- classmethod simulate_values(num_events, schedulers, durations, param_names=None)[source]

Method to simulate scheduled values during num_events events.

- Parameters

num_events (int) – number of events during the simulation.

schedulers (List[ParamScheduler]) – list of parameter schedulers.

durations (List[int]) – list of the number of events that each parameter scheduler from schedulers lasts.

param_names (Optional[Union[List[str], Tuple[str]]]) – parameter name or list of parameter names to simulate values. By default, the first scheduler’s parameter name is taken.

- Returns

list of [event_index, value_0, value_1, …], where values correspond to param_names.

- Return type

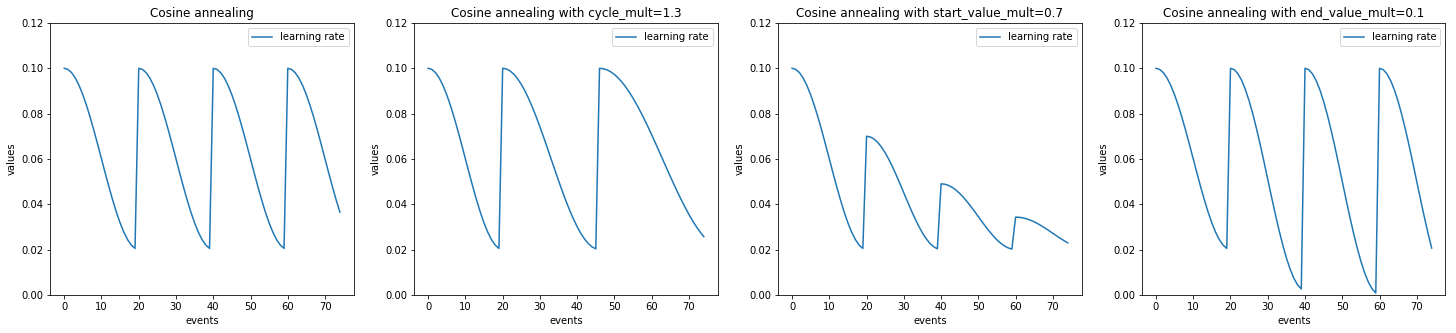

- class ignite.contrib.handlers.param_scheduler.CosineAnnealingScheduler(optimizer, param_name, start_value, end_value, cycle_size, cycle_mult=1.0, start_value_mult=1.0, end_value_mult=1.0, save_history=False, param_group_index=None)[source]

Anneals ‘start_value’ to ‘end_value’ over each cycle.

The annealing takes the form of the first half of a cosine wave (as suggested in [Smith17]).

- Parameters

optimizer (Optimizer) – torch optimizer or any object with attribute param_groups as a sequence.

param_name (str) – name of optimizer’s parameter to update.

start_value (float) – value at start of cycle.

end_value (float) – value at the end of the cycle.

cycle_size (int) – length of cycle.

cycle_mult (float) – ratio by which to change the cycle_size at the end of each cycle (default=1).

start_value_mult (float) – ratio by which to change the start value at the end of each cycle (default=1.0).

end_value_mult (float) – ratio by which to change the end value at the end of each cycle (default=1.0).

save_history (bool) – whether to log the parameter values to engine.state.param_history, (default=False).

param_group_index (Optional[int]) – optimizer’s parameters group to use.

Note

If the scheduler is bound to an ‘ITERATION_*’ event, ‘cycle_size’ should usually be the number of batches in an epoch.

Examples:

    from ignite.contrib.handlers.param_scheduler import CosineAnnealingScheduler

    scheduler = CosineAnnealingScheduler(optimizer, 'lr', 1e-1, 1e-3, len(train_loader))
    trainer.add_event_handler(Events.ITERATION_STARTED, scheduler)
    #
    # Anneals the learning rate from 1e-1 to 1e-3 over the course of 1 epoch.
    #

    from torch.optim import SGD

    from ignite.contrib.handlers.param_scheduler import CosineAnnealingScheduler
    from ignite.contrib.handlers.param_scheduler import LinearCyclicalScheduler

    # One optimizer with two parameter groups, each with its own learning rate
    optimizer = SGD(
        [
            {"params": model.base.parameters(), 'lr': 0.001},
            {"params": model.fc.parameters(), 'lr': 0.01},
        ]
    )

    scheduler1 = LinearCyclicalScheduler(optimizer, 'lr', 1e-7, 1e-5, len(train_loader), param_group_index=0)
    trainer.add_event_handler(Events.ITERATION_STARTED, scheduler1, "lr (base)")

    scheduler2 = CosineAnnealingScheduler(optimizer, 'lr', 1e-5, 1e-3, len(train_loader), param_group_index=1)
    trainer.add_event_handler(Events.ITERATION_STARTED, scheduler2, "lr (fc)")
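The "first half of a cosine wave" shape can be written out directly. This is a minimal sketch of the arithmetic only, assuming a fixed cycle size (no cycle_mult, start_value_mult or end_value_mult) and a cycle that restarts by taking the event index modulo cycle_size. The function name `cosine_annealing` is hypothetical.

```python
import math


def cosine_annealing(event_index: int, start_value: float, end_value: float, cycle_size: int) -> float:
    """Value on the first half of a cosine wave, going from start_value towards end_value."""
    progress = (event_index % cycle_size) / cycle_size
    return start_value + (end_value - start_value) / 2 * (1 - math.cos(math.pi * progress))


# One cycle of 100 events, annealing from 1e-1 towards 1e-3.
cosine_values = [cosine_annealing(i, 1e-1, 1e-3, cycle_size=100) for i in range(100)]
```

Under these assumptions the value starts at start_value, passes the midpoint of the two values halfway through the cycle, and approaches end_value just before the cycle restarts.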

- Smith17

Smith, Leslie N. “Cyclical learning rates for training neural networks.” Applications of Computer Vision (WACV), 2017 IEEE Winter Conference on. IEEE, 2017

- class ignite.contrib.handlers.param_scheduler.CyclicalScheduler(optimizer, param_name, start_value, end_value, cycle_size, cycle_mult=1.0, start_value_mult=1.0, end_value_mult=1.0, save_history=False, param_group_index=None)[source]

An abstract class for updating an optimizer’s parameter value over a cycle of some size.

- Parameters

optimizer (Optimizer) – torch optimizer or any object with attribute param_groups as a sequence.

param_name (str) – name of optimizer’s parameter to update.

start_value (float) – value at start of cycle.

end_value (float) – value at the middle of the cycle.

cycle_size (int) – length of cycle, value should be larger than 1.

cycle_mult (float) – ratio by which to change the cycle_size at the end of each cycle (default=1.0).

start_value_mult (float) – ratio by which to change the start value at the end of each cycle (default=1.0).

end_value_mult (float) – ratio by which to change the end value at the end of each cycle (default=1.0).

save_history (bool) – whether to log the parameter values to engine.state.param_history, (default=False).

param_group_index (Optional[int]) – optimizer’s parameters group to use.

Note

If the scheduler is bound to an ‘ITERATION_*’ event, ‘cycle_size’ should usually be the number of batches in an epoch.

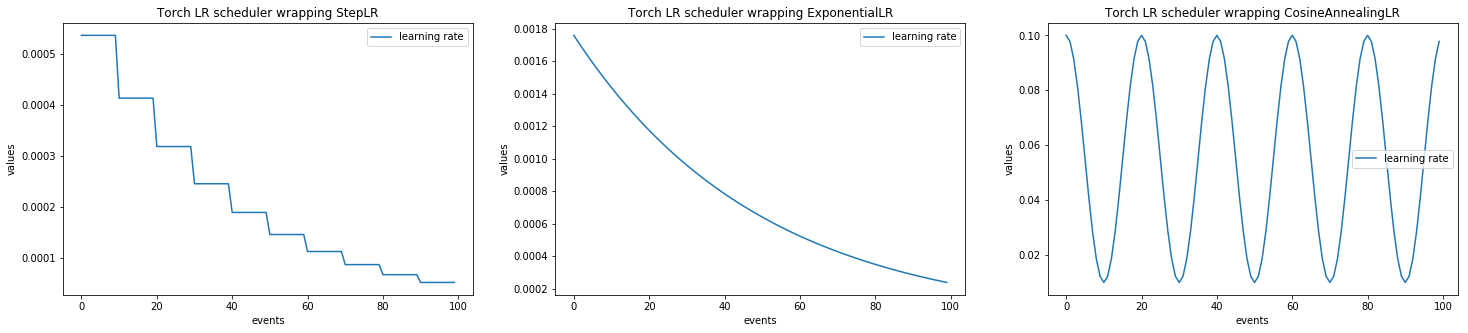

- class ignite.contrib.handlers.param_scheduler.LRScheduler(lr_scheduler, save_history=False)[source]

A wrapper class to call torch.optim.lr_scheduler objects as ignite handlers.

- Parameters

lr_scheduler (_LRScheduler) – lr_scheduler object to wrap.

save_history (bool) – whether to log the parameter values to engine.state.param_history, (default=False).

    from ignite.contrib.handlers.param_scheduler import LRScheduler
    from torch.optim.lr_scheduler import StepLR

    step_scheduler = StepLR(optimizer, step_size=3, gamma=0.1)
    scheduler = LRScheduler(step_scheduler)

    # In this example, we assume to have installed PyTorch>=1.1.0
    # (with new `torch.optim.lr_scheduler` behaviour) and
    # we attach scheduler to Events.ITERATION_COMPLETED
    # instead of Events.ITERATION_STARTED to make sure to use
    # the first lr value from the optimizer, otherwise it will be skipped:
    trainer.add_event_handler(Events.ITERATION_COMPLETED, scheduler)

- get_param()[source]

Method to get current optimizer’s parameter value

- classmethod simulate_values(num_events, lr_scheduler, **kwargs)[source]

Method to simulate scheduled values during num_events events.

- class ignite.contrib.handlers.param_scheduler.LinearCyclicalScheduler(optimizer, param_name, start_value, end_value, cycle_size, cycle_mult=1.0, start_value_mult=1.0, end_value_mult=1.0, save_history=False, param_group_index=None)[source]

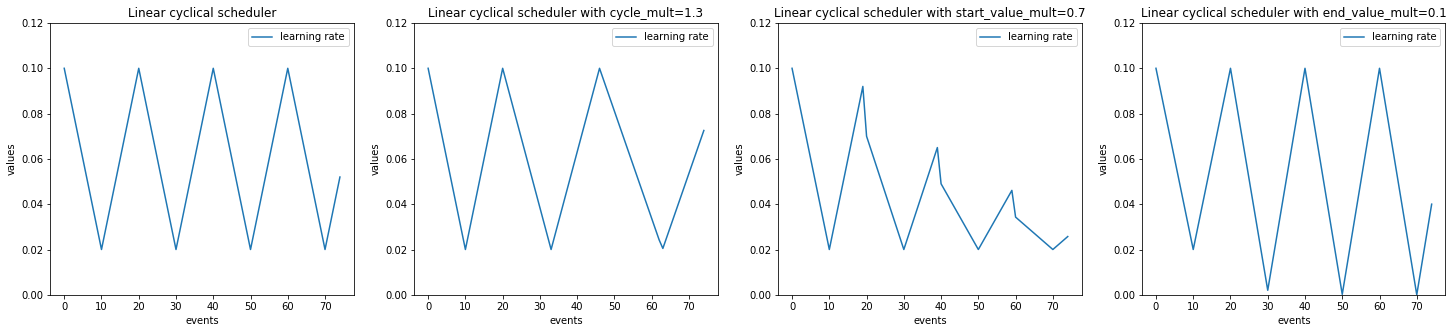

Linearly adjusts param value to ‘end_value’ for a half-cycle, then linearly adjusts it back to ‘start_value’ for a half-cycle.

- Parameters

optimizer (Optimizer) – torch optimizer or any object with attribute param_groups as a sequence.

param_name (str) – name of optimizer’s parameter to update.

start_value (float) – value at start of cycle.

end_value (float) – value at the middle of the cycle.

cycle_size (int) – length of cycle.

cycle_mult (float) – ratio by which to change the cycle_size at the end of each cycle (default=1).

start_value_mult (float) – ratio by which to change the start value at the end of each cycle (default=1.0).

end_value_mult (float) – ratio by which to change the end value at the end of each cycle (default=1.0).

save_history (bool) – whether to log the parameter values to engine.state.param_history, (default=False).

param_group_index (Optional[int]) – optimizer’s parameters group to use.

Note

If the scheduler is bound to an ‘ITERATION_*’ event, ‘cycle_size’ should usually be the number of batches in an epoch.

Examples:

    from ignite.contrib.handlers.param_scheduler import LinearCyclicalScheduler

    scheduler = LinearCyclicalScheduler(optimizer, 'lr', 1e-3, 1e-1, len(train_loader))
    trainer.add_event_handler(Events.ITERATION_STARTED, scheduler)
    #
    # Linearly increases the learning rate from 1e-3 to 1e-1 and back to 1e-3
    # over the course of 1 epoch
    #
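The triangular shape described above (start_value at the beginning of the cycle, end_value at the middle, back to start_value) can be sketched as plain arithmetic. This assumes a fixed cycle size with no multipliers. The function name `linear_cyclical` is hypothetical.

```python
def linear_cyclical(event_index: int, start_value: float, end_value: float, cycle_size: int) -> float:
    """Triangular wave: start_value at the cycle start, end_value at the cycle midpoint."""
    progress = (event_index % cycle_size) / cycle_size
    # abs(progress - 0.5) * 2 is 1 at the cycle boundaries and 0 at the midpoint.
    return end_value + (start_value - end_value) * abs(progress - 0.5) * 2


# Two cycles of 10 events each, between 1e-3 and 1e-1.
linear_values = [linear_cyclical(i, 1e-3, 1e-1, cycle_size=10) for i in range(20)]
```

Plotting `linear_values` against the event index would show two identical triangles, one per cycle.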

- class ignite.contrib.handlers.param_scheduler.ParamGroupScheduler(schedulers, names=None, save_history=False)[source]

Scheduler helper to group multiple schedulers into one.

- Parameters

    optimizer = SGD(
        [
            {"params": model.base.parameters(), 'lr': 0.001},
            {"params": model.fc.parameters(), 'lr': 0.01},
        ]
    )

    scheduler1 = LinearCyclicalScheduler(optimizer, 'lr', 1e-7, 1e-5, len(train_loader), param_group_index=0)
    scheduler2 = CosineAnnealingScheduler(optimizer, 'lr', 1e-5, 1e-3, len(train_loader), param_group_index=1)
    lr_schedulers = [scheduler1, scheduler2]
    names = ["lr (base)", "lr (fc)"]

    scheduler = ParamGroupScheduler(schedulers=lr_schedulers, names=names)
    # Attach single scheduler to the trainer
    trainer.add_event_handler(Events.ITERATION_STARTED, scheduler)

- load_state_dict(state_dict)[source]

Copies parameters from state_dict into this ParamScheduler.

- Parameters

state_dict (Mapping) – a dict containing parameters.

- Return type

None

- classmethod simulate_values(num_events, schedulers, **kwargs)[source]

Method to simulate scheduled values during num_events events.

- Parameters

num_events (int) – number of events during the simulation.

schedulers (List[_LRScheduler]) – lr_scheduler object to wrap.

kwargs (Any) – kwargs passed to construct an instance of ignite.contrib.handlers.param_scheduler.ParamGroupScheduler.

- Returns

event_index, value

- Return type

- class ignite.contrib.handlers.param_scheduler.ParamScheduler(optimizer, param_name, save_history=False, param_group_index=None)[source]

An abstract class for updating an optimizer’s parameter value during training.

- Parameters

optimizer (Optimizer) – torch optimizer or any object with attribute param_groups as a sequence.

param_name (str) – name of optimizer’s parameter to update.

save_history (bool) – whether to log the parameter values to engine.state.param_history, (default=False).

param_group_index (Optional[int]) – optimizer’s parameters group to use

Note

The parameter scheduler works independently of the internal state of the attached optimizer. More precisely, whatever the state of the optimizer (newly created or used by another scheduler), the scheduler sets its own predefined absolute values.

- abstract get_param()[source]

Method to get current optimizer’s parameter values

- load_state_dict(state_dict)[source]

Copies parameters from state_dict into this ParamScheduler.

- Parameters

state_dict (Mapping) – a dict containing parameters.

- Return type

None

- classmethod plot_values(num_events, **scheduler_kwargs)[source]

Method to plot simulated scheduled values during num_events events.

This method requires the matplotlib package to be installed:

pip install matplotlib

- Parameters

- Returns

matplotlib.lines.Line2D

- Return type

Examples

    import matplotlib.pylab as plt

    plt.figure(figsize=(10, 7))
    LinearCyclicalScheduler.plot_values(num_events=50, param_name='lr',
                                        start_value=1e-1, end_value=1e-3, cycle_size=10)

- classmethod simulate_values(num_events, **scheduler_kwargs)[source]

Method to simulate scheduled values during num_events events.

- Parameters

- Returns

event_index, value

- Return type

Examples:

    import numpy as np
    import matplotlib.pyplot as plt

    # Each row of lr_values is [event_index, value]
    lr_values = np.array(LinearCyclicalScheduler.simulate_values(num_events=50, param_name='lr',
                                                                 start_value=1e-1, end_value=1e-3,
                                                                 cycle_size=10))

    plt.plot(lr_values[:, 0], lr_values[:, 1], label="learning rate")
    plt.xlabel("events")
    plt.ylabel("values")
    plt.legend()

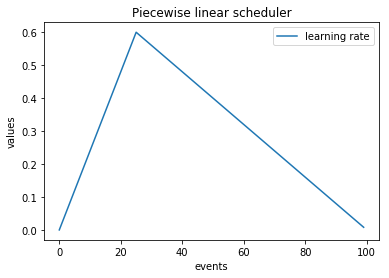

- class ignite.contrib.handlers.param_scheduler.PiecewiseLinear(optimizer, param_name, milestones_values, save_history=False, param_group_index=None)[source]

Piecewise linear parameter scheduler

- Parameters

optimizer (Optimizer) – torch optimizer or any object with attribute param_groups as a sequence.

param_name (str) – name of optimizer’s parameter to update.

milestones_values (List[Tuple[int, float]]) – list of tuples (event index, parameter value) that give the milestones and the parameter value at each milestone. Milestones should be increasing integers.

save_history (bool) – whether to log the parameter values to engine.state.param_history, (default=False).

param_group_index (Optional[int]) – optimizer’s parameters group to use.

    scheduler = PiecewiseLinear(optimizer, "lr",
                                milestones_values=[(10, 0.5), (20, 0.45), (21, 0.3), (30, 0.1), (40, 0.1)])
    # Attach to the trainer
    trainer.add_event_handler(Events.ITERATION_STARTED, scheduler)
    #
    # Sets the learning rate to 0.5 over the first 10 iterations, then decreases linearly from 0.5 to 0.45 between
    # 10th and 20th iterations. Next there is a jump to 0.3 at the 21st iteration and LR decreases linearly
    # from 0.3 to 0.1 between 21st and 30th iterations and remains 0.1 until the end of the iterations.
    #
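The behaviour described in the comments above amounts to linear interpolation between consecutive milestones, with the value held constant before the first and after the last milestone. A minimal sketch of that interpolation, independent of ignite, follows. The function name `piecewise_linear` is hypothetical.

```python
from typing import List, Tuple


def piecewise_linear(event_index: int, milestones_values: List[Tuple[int, float]]) -> float:
    """Interpolate linearly between milestones; hold the value outside their range."""
    first_index, first_value = milestones_values[0]
    if event_index <= first_index:
        return first_value
    for (i0, v0), (i1, v1) in zip(milestones_values, milestones_values[1:]):
        if i0 <= event_index <= i1:
            return v0 + (v1 - v0) * (event_index - i0) / (i1 - i0)
    # Past the last milestone: keep the last value.
    return milestones_values[-1][1]


milestones = [(10, 0.5), (20, 0.45), (21, 0.3), (30, 0.1), (40, 0.1)]
piecewise_values = [piecewise_linear(i, milestones) for i in range(50)]
```

With the milestones from the example, the value is 0.5 up to event 10, falls to 0.45 at event 20, jumps to 0.3 at event 21 and stays at 0.1 from event 30 onwards.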

- ignite.contrib.handlers.param_scheduler.create_lr_scheduler_with_warmup(lr_scheduler, warmup_start_value, warmup_duration, warmup_end_value=None, save_history=False, output_simulated_values=None)[source]

Helper method to create a learning rate scheduler with a linear warm-up.

- Parameters

lr_scheduler (Union[ParamScheduler, _LRScheduler]) – learning rate scheduler after the warm-up.

warmup_start_value (float) – learning rate start value of the warm-up phase.

warmup_duration (int) – warm-up phase duration, number of events.

warmup_end_value (Optional[float]) – learning rate end value of the warm-up phase, (default=None). If None, warmup_end_value is set to optimizer initial lr.

save_history (bool) – whether to log the parameter values to engine.state.param_history, (default=False).

output_simulated_values (Optional[List]) – optional output of simulated learning rate values. If output_simulated_values is a list of None, e.g. [None] * 100, after the execution it will be filled by 100 simulated learning rate values.

- Returns

ConcatScheduler

- Return type

Note

If the first learning rate value provided by lr_scheduler is different from warmup_end_value, an additional event is added after the warm-up phase such that the warm-up ends with warmup_end_value and then lr_scheduler provides its learning rate values as usual.

Examples

    torch_lr_scheduler = ExponentialLR(optimizer=optimizer, gamma=0.98)
    lr_values = [None] * 100
    scheduler = create_lr_scheduler_with_warmup(torch_lr_scheduler,
                                                warmup_start_value=0.0,
                                                warmup_end_value=0.1,
                                                warmup_duration=10,
                                                output_simulated_values=lr_values)
    lr_values = np.array(lr_values)
    # Plot simulated values
    plt.plot(lr_values[:, 0], lr_values[:, 1], label="learning rate")

    # Attach to the trainer
    trainer.add_event_handler(Events.ITERATION_STARTED, scheduler)
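The overall shape of such a schedule (a linear ramp followed by the wrapped scheduler) can be approximated in plain Python. This sketch makes several assumptions that are not taken from the library: the warm-up reaches warmup_end_value on its last event, the following schedule is an exponential decay that starts from warmup_end_value, and warmup_duration is at least 2. It does not reproduce how the library handles the extra boundary event mentioned in the note. The function name `warmup_then_decay` is hypothetical.

```python
from typing import List


def warmup_then_decay(num_events: int, warmup_start_value: float, warmup_end_value: float,
                      warmup_duration: int, gamma: float) -> List[float]:
    """Linear warm-up followed by exponential decay (illustrative arithmetic only)."""
    values = []
    for i in range(num_events):
        if i < warmup_duration:
            # Linear ramp that reaches warmup_end_value on the last warm-up event.
            fraction = i / (warmup_duration - 1)
            values.append(warmup_start_value + (warmup_end_value - warmup_start_value) * fraction)
        else:
            # Decay by a factor of gamma per event after the warm-up.
            values.append(warmup_end_value * gamma ** (i - warmup_duration))
    return values


warmup_values = warmup_then_decay(100, warmup_start_value=0.0, warmup_end_value=0.1,
                                  warmup_duration=10, gamma=0.98)
```

The numbers match the parameters of the example above, so `warmup_values` gives a rough idea of what the simulated learning rates look like, not their exact values.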

lr_finder

- FastaiLRFinder – Learning rate finder handler for supervised trainers.

- class ignite.contrib.handlers.lr_finder.FastaiLRFinder[source]

Learning rate finder handler for supervised trainers.

While attached, the handler increases the learning rate between two boundaries in a linear or exponential manner. It provides valuable information on how well the network can be trained over a range of learning rates and what an optimal learning rate might be.

Examples:

    from ignite.contrib.handlers import FastaiLRFinder

    trainer = ...
    model = ...
    optimizer = ...

    lr_finder = FastaiLRFinder()
    to_save = {"model": model, "optimizer": optimizer}

    with lr_finder.attach(trainer, to_save=to_save) as trainer_with_lr_finder:
        trainer_with_lr_finder.run(dataloader)

    # Get lr_finder results
    lr_finder.get_results()

    # Plot lr_finder results (requires matplotlib)
    lr_finder.plot()

    # get lr_finder suggestion for lr
    lr_finder.lr_suggestion()

Note

When the context manager is exited, all of the LR finder’s handlers are removed.

Note

Please also keep in mind that all other handlers attached to the trainer will be executed during the LR finder’s run.

Note

This class may require the matplotlib package to be installed in order to plot the learning rate range test:

pip install matplotlib

References

Cyclical Learning Rates for Training Neural Networks: https://arxiv.org/abs/1506.01186

fastai/lr_find: https://github.com/fastai/fastai

- attach(trainer, to_save, output_transform=<function FastaiLRFinder.<lambda>>, num_iter=None, end_lr=10.0, step_mode='exp', smooth_f=0.05, diverge_th=5.0)[source]

Attaches lr_finder to a given trainer. It also resets model and optimizer at the end of the run.

Usage:

    to_save = {"model": model, "optimizer": optimizer}
    with lr_finder.attach(trainer, to_save=to_save) as trainer_with_lr_finder:
        trainer_with_lr_finder.run(dataloader)

- Parameters

trainer (Engine) – lr_finder is attached to this trainer. Please, keep in mind that all attached handlers will be executed.

to_save (Mapping) – dictionary with optimizer and other objects that need to be restored after running the LR finder. For example, to_save={‘optimizer’: optimizer, ‘model’: model}. All objects should implement state_dict and load_state_dict methods.

output_transform (Callable) – function that transforms the trainer’s state.output after each iteration. It must return the loss of that iteration.

num_iter (Optional[int]) – number of iterations for the lr schedule between the base lr and end_lr. By default, it will run for trainer.state.epoch_length * trainer.state.max_epochs.

end_lr (float) – upper bound for lr search. Default, 10.0.

step_mode (str) – “exp” or “linear”, the way in which the lr is increased from the optimizer’s initial lr to end_lr. Default, “exp”.

smooth_f (float) – loss smoothing factor in range [0, 1). Default, 0.05

diverge_th (float) – Used for stopping the search when current loss > diverge_th * best_loss. Default, 5.0.

- Returns

trainer_with_lr_finder (trainer used for finding the lr)

- Return type

Note

lr_finder cannot be attached to more than one trainer at a time.
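How end_lr, smooth_f and diverge_th interact can be sketched on pre-recorded losses. Everything below is an illustration under stated assumptions and not the handler's actual code: the learning rate is assumed to grow geometrically from a starting value to end_lr, the smoothing is assumed to blend the new loss (weight smooth_f) with the previous smoothed loss, and the search stops once the loss exceeds diverge_th times the best loss seen so far. The function name `lr_range_test` is hypothetical.

```python
from typing import Dict, List


def lr_range_test(losses: List[float], start_lr: float, end_lr: float,
                  smooth_f: float = 0.05, diverge_th: float = 5.0) -> Dict[str, List[float]]:
    """Replay recorded losses against an exponentially increasing learning rate."""
    history: Dict[str, List[float]] = {"lr": [], "loss": []}
    best_loss = float("inf")
    steps = max(len(losses) - 1, 1)
    for i, loss in enumerate(losses):
        # Assumed exponential sweep: start_lr at i == 0, end_lr at the last step.
        lr = start_lr * (end_lr / start_lr) ** (i / steps)
        if history["loss"] and smooth_f > 0:
            # Assumed smoothing rule: blend the new loss with the previous smoothed loss.
            loss = smooth_f * loss + (1 - smooth_f) * history["loss"][-1]
        history["lr"].append(lr)
        history["loss"].append(loss)
        if loss > diverge_th * best_loss:
            break  # the loss has diverged, stop the search
        best_loss = min(best_loss, loss)
    return history


# Smoothing disabled to keep the example easy to follow by hand.
history = lr_range_test([1.0, 0.5, 0.4, 3.0, 9.0], start_lr=1e-4, end_lr=10.0, smooth_f=0.0)
```

In this run the best loss is 0.4, so the fourth loss (3.0) exceeds 5.0 * 0.4 and the sweep stops before the last value is consumed.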

- get_results()[source]

Returns: dictionary with loss and lr logs from the previous run

- plot(skip_start=10, skip_end=5, log_lr=True)[source]

Plots the learning rate range test.

This method requires the matplotlib package to be installed:

pip install matplotlib

time_profilers

- BasicTimeProfiler – BasicTimeProfiler can be used to profile the handlers, events, data loading and data processing times.
- HandlersTimeProfiler – HandlersTimeProfiler can be used to profile the handlers, data loading and data processing times.

- class ignite.contrib.handlers.time_profilers.BasicTimeProfiler[source]

BasicTimeProfiler can be used to profile the handlers, events, data loading and data processing times.

Examples:

    from ignite.contrib.handlers import BasicTimeProfiler

    trainer = Engine(train_updater)

    # Create an object of the profiler and attach an engine to it
    profiler = BasicTimeProfiler()
    profiler.attach(trainer)

    @trainer.on(Events.EPOCH_COMPLETED)
    def log_intermediate_results():
        profiler.print_results(profiler.get_results())

    trainer.run(dataloader, max_epochs=3)

    profiler.write_results('path_to_dir/time_profiling.csv')

- get_results()[source]

Method to fetch the aggregated profiler results after the engine is run

results = profiler.get_results()

- static print_results(results)[source]

Method to print the aggregated results from the profiler

profiler.print_results(results)

Example output:

     ----------------------------------------------------
    | Time profiling stats (in seconds):                 |
     ----------------------------------------------------
    total  |  min/index  |  max/index  |  mean  |  std

    Processing function:
    157.46292 | 0.01452/1501 | 0.26905/0 | 0.07730 | 0.01258

    Dataflow:
    6.11384 | 0.00008/1935 | 0.28461/1551 | 0.00300 | 0.02693

    Event handlers:
    2.82721

    - Events.STARTED: []
    0.00000

    - Events.EPOCH_STARTED: []
    0.00006 | 0.00000/0 | 0.00000/17 | 0.00000 | 0.00000

    - Events.ITERATION_STARTED: ['PiecewiseLinear']
    0.03482 | 0.00001/188 | 0.00018/679 | 0.00002 | 0.00001

    - Events.ITERATION_COMPLETED: ['TerminateOnNan']
    0.20037 | 0.00006/866 | 0.00089/1943 | 0.00010 | 0.00003

    - Events.EPOCH_COMPLETED: ['empty_cuda_cache', 'training.<locals>.log_elapsed_time', ]
    2.57860 | 0.11529/0 | 0.14977/13 | 0.12893 | 0.00790

    - Events.COMPLETED: []
    not yet triggered
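Each row of the report above follows the pattern total | min/index | max/index | mean | std. As a rough illustration of how such a row can be derived from a list of per-call timings, here is a minimal sketch. The use of the sample standard deviation is an assumption, and the function name `summarize` is hypothetical.

```python
import statistics
from typing import Dict, List, Tuple, Union

Stat = Union[float, Tuple[float, int]]


def summarize(times: List[float]) -> Dict[str, Stat]:
    """Aggregate per-call timings into total, min/index, max/index, mean and std."""
    smallest, largest = min(times), max(times)
    return {
        "total": sum(times),
        "min/index": (smallest, times.index(smallest)),  # value and position of the fastest call
        "max/index": (largest, times.index(largest)),    # value and position of the slowest call
        "mean": statistics.mean(times),
        "std": statistics.stdev(times),                  # sample standard deviation (assumed)
    }


stats = summarize([0.2, 0.1, 0.4, 0.3])
```

The index in each pair identifies which call was the fastest or slowest, which is how an entry such as 0.26905/0 in the report can be read: the slowest call was the first one.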

- write_results(output_path)[source]

Method to store the unaggregated profiling results to a csv file

- Parameters

output_path (str) – file output path containing a filename

- Return type

None

profiler.write_results('path_to_dir/awesome_filename.csv')

Example output:

    -----------------------------------------------------------------
    epoch iteration processing_stats dataflow_stats Event_STARTED ...
    1.0     1.0        0.00003         0.252387        0.125676
    1.0     2.0        0.00029         0.252342        0.125123

- class ignite.contrib.handlers.time_profilers.HandlersTimeProfiler[source]

HandlersTimeProfiler can be used to profile the handlers, data loading and data processing times. Custom events are also profiled by this profiler.

Examples:

    from ignite.contrib.handlers import HandlersTimeProfiler

    trainer = Engine(train_updater)

    # Create an object of the profiler and attach an engine to it
    profiler = HandlersTimeProfiler()
    profiler.attach(trainer)

    @trainer.on(Events.EPOCH_COMPLETED)
    def log_intermediate_results():
        profiler.print_results(profiler.get_results())

    trainer.run(dataloader, max_epochs=3)

    profiler.write_results('path_to_dir/time_profiling.csv')

- attach(engine)[source]

Attach HandlersTimeProfiler to the given engine.

- Parameters

engine (Engine) – the instance of Engine to attach

- Return type

None

- get_results()[source]

Method to fetch the aggregated profiler results after the engine is run

results = profiler.get_results()

- static print_results(results)[source]

Method to print the aggregated results from the profiler

- Parameters

results (List[List[Union[str, float]]]) – the aggregated results from the profiler

- Return type

None

profiler.print_results(results)

Example output:

    -----------------------------------------  -----------------------  -------------- ...
    Handler                                    Event Name                     Total(s)
    -----------------------------------------  -----------------------  --------------
    run.<locals>.log_training_results          EPOCH_COMPLETED                19.43245
    run.<locals>.log_validation_results        EPOCH_COMPLETED                 2.55271
    run.<locals>.log_time                      EPOCH_COMPLETED                 0.00049
    run.<locals>.log_intermediate_results      EPOCH_COMPLETED                 0.00106
    run.<locals>.log_training_loss             ITERATION_COMPLETED               0.059
    run.<locals>.log_time                      COMPLETED                 not triggered
    -----------------------------------------  -----------------------  --------------
    Total                                                                     22.04571
    -----------------------------------------  -----------------------  --------------
    Processing took total 11.29543s [min/index: 0.00393s/1875, max/index: 0.00784s/0,
     mean: 0.00602s, std: 0.00034s]
    Dataflow took total 16.24365s [min/index: 0.00533s/1874, max/index: 0.01129s/937,
     mean: 0.00866s, std: 0.00113s]

- write_results(output_path)[source]

Method to store the unaggregated profiling results to a csv file

- Parameters

output_path (str) – file output path containing a filename

- Return type

None

profiler.write_results('path_to_dir/awesome_filename.csv')

Example output:

    -----------------------------------------------------------------
    # processing_stats dataflow_stats training.<locals>.log_elapsed_time (EPOCH_COMPLETED) ...
    1     0.00003         0.252387          0.125676
    2     0.00029         0.252342          0.125123

stores

- EpochOutputStore – EpochOutputStore handler to save output prediction and target history after every epoch, could be useful for e.g., visualization purposes.

- class ignite.contrib.handlers.stores.EpochOutputStore(output_transform=<function EpochOutputStore.<lambda>>)[source]

EpochOutputStore handler to save output prediction and target history after every epoch, could be useful for e.g., visualization purposes.

Note

This can potentially lead to a memory error if the output data is larger than available RAM.

- Parameters

output_transform (Callable) – a callable that is used to transform the Engine’s process_function’s output, e.g., lambda x: x[0]

Examples:

    eos = EpochOutputStore()
    trainer = create_supervised_trainer(model, optimizer, loss)
    train_evaluator = create_supervised_evaluator(model, metrics)
    eos.attach(train_evaluator)

    @trainer.on(Events.EPOCH_COMPLETED)
    def log_training_results(engine):
        train_evaluator.run(train_loader)
        output = eos.data
        # do something with output, e.g., plotting

New in version 0.4.2.

tensorboard_logger

See tensorboardX mnist example and CycleGAN and EfficientNet notebooks for detailed usage.

- TensorboardLogger – TensorBoard handler to log metrics, model/optimizer parameters, gradients during the training and validation.
- OptimizerParamsHandler – Helper handler to log optimizer parameters.
- OutputHandler – Helper handler to log engine's output and/or metrics.
- WeightsScalarHandler – Helper handler to log model's weights as scalars.
- WeightsHistHandler – Helper handler to log model's weights as histograms.
- GradsScalarHandler – Helper handler to log model's gradients as scalars.
- GradsHistHandler – Helper handler to log model's gradients as histograms.
- global_step_from_engine – Helper method to setup global_step_transform function using another engine.

- class ignite.contrib.handlers.tensorboard_logger.GradsHistHandler(model, tag=None)[source]

Helper handler to log model’s gradients as histograms.

Examples

    from ignite.contrib.handlers.tensorboard_logger import *

    # Create a logger
    tb_logger = TensorboardLogger(log_dir="experiments/tb_logs")

    # Attach the logger to the trainer to log model's gradients as histograms after each iteration
    tb_logger.attach(
        trainer,
        event_name=Events.ITERATION_COMPLETED,
        log_handler=GradsHistHandler(model)
    )

- class ignite.contrib.handlers.tensorboard_logger.GradsScalarHandler(model, reduction=<function norm>, tag=None)[source]

Helper handler to log model’s gradients as scalars. The handler iterates over the gradients of the named parameters of the model, applies the reduction function to each parameter to produce a scalar, and then logs the scalar.

Examples

    from ignite.contrib.handlers.tensorboard_logger import *

    # Create a logger
    tb_logger = TensorboardLogger(log_dir="experiments/tb_logs")

    # Attach the logger to the trainer to log model's gradients norm after each iteration
    tb_logger.attach(
        trainer,
        event_name=Events.ITERATION_COMPLETED,
        log_handler=GradsScalarHandler(model, reduction=torch.norm)
    )

- class ignite.contrib.handlers.tensorboard_logger.OptimizerParamsHandler(optimizer, param_name='lr', tag=None)[source]

Helper handler to log optimizer parameters

Examples

    from ignite.contrib.handlers.tensorboard_logger import *

    # Create a logger
    tb_logger = TensorboardLogger(log_dir="experiments/tb_logs")

    # Attach the logger to the trainer to log optimizer's parameters, e.g. learning rate at each iteration
    tb_logger.attach(
        trainer,
        log_handler=OptimizerParamsHandler(optimizer),
        event_name=Events.ITERATION_STARTED
    )
    # or equivalently
    tb_logger.attach_opt_params_handler(
        trainer,
        event_name=Events.ITERATION_STARTED,
        optimizer=optimizer
    )

- class ignite.contrib.handlers.tensorboard_logger.OutputHandler(tag, metric_names=None, output_transform=None, global_step_transform=None)[source]

Helper handler to log engine’s output and/or metrics

Examples

    from ignite.contrib.handlers.tensorboard_logger import *

    # Create a logger
    tb_logger = TensorboardLogger(log_dir="experiments/tb_logs")

    # Attach the logger to the evaluator on the validation dataset and log NLL, Accuracy metrics after
    # each epoch. We setup `global_step_transform=global_step_from_engine(trainer)` to take the epoch
    # of the `trainer`:
    tb_logger.attach(
        evaluator,
        log_handler=OutputHandler(
            tag="validation",
            metric_names=["nll", "accuracy"],
            global_step_transform=global_step_from_engine(trainer)
        ),
        event_name=Events.EPOCH_COMPLETED
    )
    # or equivalently
    tb_logger.attach_output_handler(
        evaluator,
        event_name=Events.EPOCH_COMPLETED,
        tag="validation",
        metric_names=["nll", "accuracy"],
        global_step_transform=global_step_from_engine(trainer)
    )

Another example, where the model is evaluated every 500 iterations:

    from ignite.contrib.handlers.tensorboard_logger import *

    @trainer.on(Events.ITERATION_COMPLETED(every=500))
    def evaluate(engine):
        evaluator.run(validation_set, max_epochs=1)

    tb_logger = TensorboardLogger(log_dir="experiments/tb_logs")

    def global_step_transform(*args, **kwargs):
        return trainer.state.iteration

    # Attach the logger to the evaluator on the validation dataset and log NLL, Accuracy metrics after
    # every 500 iterations. Since evaluator engine does not have access to the training iteration, we
    # provide a global_step_transform to return the trainer.state.iteration for the global_step, each time
    # evaluator metrics are plotted on Tensorboard.

    tb_logger.attach_output_handler(
        evaluator,
        event_name=Events.EPOCH_COMPLETED,
        tag="validation",
        metric_names=["nll", "accuracy"],
        global_step_transform=global_step_transform
    )

- Parameters

tag (str) – common title for all produced plots. For example, “training”

metric_names (Optional[List[str]]) – list of metric names to plot or a string “all” to plot all available metrics.

output_transform (Optional[Callable]) – output transform function to prepare engine.state.output as a number. For example, output_transform = lambda output: output. This function can also return a dictionary, e.g. {“loss”: loss1, “another_loss”: loss2}, to label the plot with corresponding keys.

global_step_transform (Optional[Callable]) – global step transform function to output a desired global step. Input of the function is (engine, event_name). Output of the function should be an integer. Default is None, in which case global_step is based on the attached engine. If provided, the function output is used as global_step. To set up the global step from another engine, please use global_step_from_engine().

Note

Example of global_step_transform:

def global_step_transform(engine, event_name):
    return engine.state.get_event_attrib_value(event_name)
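The two return shapes allowed for output_transform (a number or a dictionary) can be illustrated with a small, library-free sketch. The helper `label_outputs` is hypothetical and not part of ignite. It assumes that a bare number is plotted under a single default key (here "output") and that dictionary keys become separate plot labels under the common tag; the "tag/key" naming is chosen for illustration only.

```python
from numbers import Number


def label_outputs(tag, transformed_output):
    """Hypothetical helper: map an output_transform result to labelled scalars."""
    if isinstance(transformed_output, dict):
        # Each dictionary key labels its own plot under the common tag.
        return {f"{tag}/{key}": value for key, value in transformed_output.items()}
    if isinstance(transformed_output, Number):
        # A bare number gets a single default label (an assumption of this sketch).
        return {f"{tag}/output": transformed_output}
    raise TypeError(f"unsupported output type: {type(transformed_output).__name__}")


# output_transform returning a number
single = label_outputs("training", (lambda output: output)(0.25))

# output_transform returning a dictionary with two named losses
to_dict = lambda output: {"loss": output[0], "another_loss": output[1]}
multi = label_outputs("training", to_dict((0.25, 0.5)))

print(single)
print(multi)
```

Returning a dictionary is the more convenient choice when the engine's output carries several values, since each value then gets its own readable label.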

- class ignite.contrib.handlers.tensorboard_logger.TensorboardLogger(*args, **kwargs)[source]

TensorBoard handler to log metrics, model/optimizer parameters, gradients during the training and validation.

By default, this class favors tensorboardX package if installed:

pip install tensorboardX

otherwise, it falls back to using PyTorch’s SummaryWriter (>=v1.2.0).

- Parameters

args (Any) – Positional arguments accepted from SummaryWriter.

kwargs (Any) –

Keyword arguments accepted from SummaryWriter. For example, log_dir to setup path to the directory where to log.

Examples

from ignite.engine import Events
from ignite.contrib.handlers.tensorboard_logger import *
# Create a logger
tb_logger = TensorboardLogger(log_dir="experiments/tb_logs")
# Attach the logger to the trainer to log training loss at each iteration
tb_logger.attach_output_handler(
    trainer,
    event_name=Events.ITERATION_COMPLETED,
    tag="training",
    output_transform=lambda loss: {"loss": loss}
)
# Attach the logger to the evaluator on the training dataset and log NLL, Accuracy metrics after each epoch
# We setup `global_step_transform=global_step_from_engine(trainer)` to take the epoch
# of the `trainer` instead of `train_evaluator`.
tb_logger.attach_output_handler(
    train_evaluator,
    event_name=Events.EPOCH_COMPLETED,
    tag="training",
    metric_names=["nll", "accuracy"],
    global_step_transform=global_step_from_engine(trainer),
)
# Attach the logger to the evaluator on the validation dataset and log NLL, Accuracy metrics after
# each epoch. We setup `global_step_transform=global_step_from_engine(trainer)` to take the epoch of the
# `trainer` instead of `evaluator`.
tb_logger.attach_output_handler(
    evaluator,
    event_name=Events.EPOCH_COMPLETED,
    tag="validation",
    metric_names=["nll", "accuracy"],
    global_step_transform=global_step_from_engine(trainer),
)
# Attach the logger to the trainer to log optimizer's parameters, e.g. learning rate at each iteration
tb_logger.attach_opt_params_handler(
    trainer,
    event_name=Events.ITERATION_STARTED,
    optimizer=optimizer,
    param_name='lr'  # optional
)
# Attach the logger to the trainer to log model's weights norm after each iteration
tb_logger.attach(
    trainer,
    event_name=Events.ITERATION_COMPLETED,
    log_handler=WeightsScalarHandler(model)
)
# Attach the logger to the trainer to log model's weights as a histogram after each epoch
tb_logger.attach(
    trainer,
    event_name=Events.EPOCH_COMPLETED,
    log_handler=WeightsHistHandler(model)
)
# Attach the logger to the trainer to log model's gradients norm after each iteration
tb_logger.attach(
    trainer,
    event_name=Events.ITERATION_COMPLETED,
    log_handler=GradsScalarHandler(model)
)
# Attach the logger to the trainer to log model's gradients as a histogram after each epoch
tb_logger.attach(
    trainer,
    event_name=Events.EPOCH_COMPLETED,
    log_handler=GradsHistHandler(model)
)
# We need to close the logger when we are done
tb_logger.close()

It is also possible to use the logger as context manager:

from ignite.engine import Engine, Events
from ignite.contrib.handlers.tensorboard_logger import *
with TensorboardLogger(log_dir="experiments/tb_logs") as tb_logger:
    trainer = Engine(update_fn)
    # Attach the logger to the trainer to log training loss at each iteration
    tb_logger.attach_output_handler(
        trainer,
        event_name=Events.ITERATION_COMPLETED,
        tag="training",
        output_transform=lambda loss: {"loss": loss}
    )
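Why the context-manager form removes the need for an explicit close() call can be seen in a minimal sketch. `ClosingLogger` is a hypothetical stand-in written for this illustration and is not ignite's implementation; it only assumes that leaving the `with` block triggers close(), including when an exception is raised inside the block.

```python
class ClosingLogger:
    """Hypothetical stand-in for a logger that owns a resource needing close()."""

    def __init__(self):
        self.closed = False
        self.records = []

    def log(self, name, value):
        if self.closed:
            raise RuntimeError("logger is already closed")
        self.records.append((name, value))

    def close(self):
        self.closed = True

    def __enter__(self):
        return self

    def __exit__(self, exc_type, exc_value, traceback):
        # Always release the resource; returning False lets exceptions propagate.
        self.close()
        return False


# Normal exit: the logger is closed as soon as the block ends.
with ClosingLogger() as logger:
    logger.log("training/loss", 0.5)
print(logger.closed)

# Exit through an exception: the logger is still closed.
failed = ClosingLogger()
try:
    with failed:
        raise ValueError("training crashed")
except ValueError:
    pass
print(failed.closed)
```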

- attach(engine, log_handler, event_name)

Attach the logger to the engine and execute log_handler function at event_name events.

- Parameters

engine (Engine) – engine object.

log_handler (Callable) – a logging handler to execute

event_name (Union[str, Events, CallableEventWithFilter, EventsList]) – event to attach the logging handler to. Valid events are from Events or EventsList or any event_name added by register_events().

- Returns

RemovableEventHandle, which can be used to remove the handler.

- attach_opt_params_handler(engine, event_name, *args, **kwargs)

Shortcut method to attach OptimizerParamsHandler to the logger.

- Parameters

- Returns

RemovableEventHandle, which can be used to remove the handler.

Changed in version 0.4.3: Added missing return statement.

- attach_output_handler(engine, event_name, *args, **kwargs)

Shortcut method to attach OutputHandler to the logger.

- Parameters

- Returns

RemovableEventHandle, which can be used to remove the handler.

- class ignite.contrib.handlers.tensorboard_logger.WeightsHistHandler(model, tag=None)[source]

Helper handler to log model’s weights as histograms.

Examples

from ignite.engine import Events
from ignite.contrib.handlers.tensorboard_logger import *
# Create a logger
tb_logger = TensorboardLogger(log_dir="experiments/tb_logs")
# Attach the logger to the trainer to log model's weights as a histogram after each iteration
tb_logger.attach(
    trainer,
    event_name=Events.ITERATION_COMPLETED,
    log_handler=WeightsHistHandler(model)
)

- class ignite.contrib.handlers.tensorboard_logger.WeightsScalarHandler(model, reduction=<function norm>, tag=None)[source]

Helper handler to log model’s weights as scalars. Handler iterates over named parameters of the model, applies reduction function to each parameter to produce a scalar and then logs the scalar.

Examples

import torch
from ignite.engine import Events
from ignite.contrib.handlers.tensorboard_logger import *
# Create a logger
tb_logger = TensorboardLogger(log_dir="experiments/tb_logs")
# Attach the logger to the trainer to log model's weights norm after each iteration
tb_logger.attach(
    trainer,
    event_name=Events.ITERATION_COMPLETED,
    log_handler=WeightsScalarHandler(model, reduction=torch.norm)
)
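The "iterate over named parameters, reduce, log" pattern described for this handler can be sketched without PyTorch. Everything below is a hypothetical illustration: parameters are modelled as plain lists of floats, and `l2_norm` stands in for a reduction such as torch.norm.

```python
import math


def l2_norm(values):
    """Reduction: collapse a flat list of numbers into one scalar."""
    return math.sqrt(sum(v * v for v in values))


def reduce_named_parameters(named_parameters, reduction=l2_norm):
    """Apply `reduction` to every (name, values) pair and collect the scalars."""
    return {name: reduction(values) for name, values in named_parameters}


# Hypothetical "model": parameter names paired with flattened weight values.
toy_parameters = [("fc.weight", [3.0, 4.0]), ("fc.bias", [0.0])]
scalars = reduce_named_parameters(toy_parameters)
print(scalars)
```

Any callable that maps a parameter to a single number can serve as the reduction, which is why the handler accepts it as an argument.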

- ignite.contrib.handlers.tensorboard_logger.global_step_from_engine(engine, custom_event_name=None)[source]

Helper method to setup global_step_transform function using another engine. This can be helpful for logging trainer epoch/iteration while output handler is attached to an evaluator.
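The idea of taking the global step from another engine can be sketched as a closure. This is a simplified, library-free illustration and not ignite's implementation: `step_from_other_engine` and its `attribute` argument are hypothetical, and the engines are modelled as bare objects with a `state`.

```python
from types import SimpleNamespace


def step_from_other_engine(other_engine, attribute="epoch"):
    """Hypothetical helper: build a global_step_transform bound to `other_engine`."""
    def global_step_transform(engine, event_name):
        # Ignore the engine the handler is attached to; report the other engine's progress.
        return getattr(other_engine.state, attribute)
    return global_step_transform


# Stand-ins for ignite engines: only a `state` with counters is modelled.
trainer = SimpleNamespace(state=SimpleNamespace(epoch=3, iteration=1500))
evaluator = SimpleNamespace(state=SimpleNamespace(epoch=1, iteration=40))

transform = step_from_other_engine(trainer)
step = transform(evaluator, "epoch_completed")
print(step)
```

Because the closure reads the trainer's state at call time, metrics computed by the evaluator are plotted against the trainer's current epoch rather than the evaluator's own counter.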

visdom_logger

See visdom mnist example for detailed usage.

VisdomLogger – VisdomLogger handler to log metrics, model/optimizer parameters, gradients during the training and validation.

OptimizerParamsHandler – Helper handler to log optimizer parameters

OutputHandler – Helper handler to log engine's output and/or metrics

WeightsScalarHandler – Helper handler to log model's weights as scalars.

GradsScalarHandler – Helper handler to log model's gradients as scalars.

global_step_from_engine – Helper method to setup global_step_transform function using another engine.

- class ignite.contrib.handlers.visdom_logger.GradsScalarHandler(model, reduction=<function norm>, tag=None, show_legend=False)[source]

Helper handler to log model’s gradients as scalars. Handler iterates over the gradients of named parameters of the model, applies reduction function to each parameter to produce a scalar and then logs the scalar.

Examples

import torch
from ignite.engine import Events
from ignite.contrib.handlers.visdom_logger import *
# Create a logger
vd_logger = VisdomLogger()
# Attach the logger to the trainer to log model's gradients norm after each iteration
vd_logger.attach(
    trainer,
    event_name=Events.ITERATION_COMPLETED,
    log_handler=GradsScalarHandler(model, reduction=torch.norm)
)

- Parameters

- add_scalar(logger, k, v, event_name, global_step)

Helper method to log a scalar with VisdomLogger.

- Parameters

logger (VisdomLogger) – visdom logger

k (str) – scalar name which is used to set window title and y-axis label

event_name (Any) – Event name which is used to setup x-axis label. Valid events are from Events or any event_name added by register_events().

global_step (int) – global step, x-axis value

- Return type

None

- class ignite.contrib.handlers.visdom_logger.OptimizerParamsHandler(optimizer, param_name='lr', tag=None, show_legend=False)[source]

Helper handler to log optimizer parameters

Examples

from ignite.engine import Events
from ignite.contrib.handlers.visdom_logger import *
# Create a logger
vd_logger = VisdomLogger()
# Attach the logger to the trainer to log optimizer's parameters, e.g. learning rate at each iteration
vd_logger.attach(
    trainer,
    log_handler=OptimizerParamsHandler(optimizer),
    event_name=Events.ITERATION_STARTED
)
# or equivalently
vd_logger.attach_opt_params_handler(
    trainer,
    event_name=Events.ITERATION_STARTED,
    optimizer=optimizer
)

- Parameters

- add_scalar(logger, k, v, event_name, global_step)

Helper method to log a scalar with VisdomLogger.

- Parameters

logger (VisdomLogger) – visdom logger

k (str) – scalar name which is used to set window title and y-axis label

event_name (Any) – Event name which is used to setup x-axis label. Valid events are from Events or any event_name added by register_events().

global_step (int) – global step, x-axis value

- Return type

None

- class ignite.contrib.handlers.visdom_logger.OutputHandler(tag, metric_names=None, output_transform=None, global_step_transform=None, show_legend=False)[source]

Helper handler to log engine’s output and/or metrics

Examples

from ignite.engine import Events
from ignite.contrib.handlers.visdom_logger import *
# Create a logger
vd_logger = VisdomLogger()
# Attach the logger to the evaluator on the validation dataset and log NLL, Accuracy metrics after
# each epoch. We setup `global_step_transform=global_step_from_engine(trainer)` to take the epoch
# of the `trainer`:
vd_logger.attach(
    evaluator,
    log_handler=OutputHandler(
        tag="validation",
        metric_names=["nll", "accuracy"],
        global_step_transform=global_step_from_engine(trainer)
    ),
    event_name=Events.EPOCH_COMPLETED
)
# or equivalently
vd_logger.attach_output_handler(
    evaluator,
    event_name=Events.EPOCH_COMPLETED,
    tag="validation",
    metric_names=["nll", "accuracy"],
    global_step_transform=global_step_from_engine(trainer)
)

Another example, where model is evaluated every 500 iterations:

from ignite.engine import Events
from ignite.contrib.handlers.visdom_logger import *
@trainer.on(Events.ITERATION_COMPLETED(every=500))
def evaluate(engine):
    evaluator.run(validation_set, max_epochs=1)
vd_logger = VisdomLogger()
def global_step_transform(*args, **kwargs):
    return trainer.state.iteration
# Attach the logger to the evaluator on the validation dataset and log NLL, Accuracy metrics after
# every 500 iterations. Since evaluator engine does not have access to the training iteration, we
# provide a global_step_transform to return the trainer.state.iteration for the global_step, each time
# evaluator metrics are plotted on Visdom.
vd_logger.attach_output_handler(
    evaluator,
    event_name=Events.EPOCH_COMPLETED,
    tag="validation",
    metric_names=["nll", "accuracy"],
    global_step_transform=global_step_transform
)

- Parameters

tag (str) – common title for all produced plots. For example, “training”

metric_names (Optional[str]) – list of metric names to plot or a string “all” to plot all available metrics.

output_transform (Optional[Callable]) – output transform function to prepare engine.state.output as a number. For example, output_transform = lambda output: output This function can also return a dictionary, e.g {“loss”: loss1, “another_loss”: loss2} to label the plot with corresponding keys.

global_step_transform (Optional[Callable]) – global step transform function to output a desired global step. Input of the function is (engine, event_name). Output of function should be an integer. Default is None, global_step based on attached engine. If provided, uses function output as global_step. To setup global step from another engine, please use global_step_from_engine().

show_legend (bool) – flag to show legend in the window

Note

Example of global_step_transform:

def global_step_transform(engine, event_name):
    return engine.state.get_event_attrib_value(event_name)

- add_scalar(logger, k, v, event_name, global_step)

Helper method to log a scalar with VisdomLogger.

- Parameters

logger (VisdomLogger) – visdom logger

k (str) – scalar name which is used to set window title and y-axis label

event_name (Any) – Event name which is used to setup x-axis label. Valid events are from Events or any event_name added by register_events().

global_step (int) – global step, x-axis value

- Return type

None

- class ignite.contrib.handlers.visdom_logger.VisdomLogger(server=None, port=None, num_workers=1, raise_exceptions=True, **kwargs)[source]

VisdomLogger handler to log metrics, model/optimizer parameters, gradients during the training and validation.

This class requires visdom package to be installed:

pip install git+https://github.com/facebookresearch/visdom.git

- Parameters

server (Optional[str]) – visdom server URL. It can be also specified by environment variable VISDOM_SERVER_URL

port (Optional[int]) – visdom server’s port. It can be also specified by environment variable VISDOM_PORT

num_workers (int) – number of workers to use in concurrent.futures.ThreadPoolExecutor to post data to visdom server. Default, num_workers=1. If num_workers=0, the logger uses the main thread. If using Python 2.7 and num_workers>0 the package futures should be installed: pip install futures

kwargs (Any) – kwargs to pass into visdom.Visdom.

raise_exceptions (bool) –

Note

We can also specify username/password using environment variables: VISDOM_USERNAME, VISDOM_PASSWORD

Warning

Frequent logging, e.g. when logger is attached to Events.ITERATION_COMPLETED, can slow down the run if the main thread is used to send the data to visdom server (num_workers=0). To avoid this situation we can either log less frequently or set num_workers=1.
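The difference between sending on the main thread and handing work to a concurrent.futures.ThreadPoolExecutor can be sketched with the standard library alone. `send` and `post_all` are hypothetical stand-ins for this illustration (no visdom server is contacted); the sketch only shows which thread ends up doing the slow work.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor


def send(payload, log):
    """Hypothetical stand-in for a slow request to a visdom server."""
    time.sleep(0.01)
    # Record which thread actually performed the send.
    log.append((payload, threading.get_ident()))


def post_all(payloads, num_workers):
    """Send payloads on the calling thread (num_workers=0) or via a thread pool."""
    log = []
    if num_workers == 0:
        for payload in payloads:
            send(payload, log)  # blocks the caller for every payload
        return log
    with ThreadPoolExecutor(max_workers=num_workers) as pool:
        for payload in payloads:
            pool.submit(send, payload, log)  # returns immediately
    # Leaving the `with` block waits for all submitted work to finish.
    return log


caller_id = threading.get_ident()
blocking = post_all(range(3), num_workers=0)
pooled = post_all(range(3), num_workers=1)

print(all(thread_id == caller_id for _, thread_id in blocking))
print(all(thread_id != caller_id for _, thread_id in pooled))
```

With a single worker the payloads are still delivered in submission order, but the training loop no longer waits for each request to complete.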

Examples

from ignite.engine import Events
from ignite.contrib.handlers.visdom_logger import *
# Create a logger
vd_logger = VisdomLogger()
# Attach the logger to the trainer to log training loss at each iteration
vd_logger.attach_output_handler(
    trainer,
    event_name=Events.ITERATION_COMPLETED,
    tag="training",
    output_transform=lambda loss: {"loss": loss}
)
# Attach the logger to the evaluator on the training dataset and log NLL, Accuracy metrics after each epoch
# We setup `global_step_transform=global_step_from_engine(trainer)` to take the epoch
# of the `trainer` instead of `train_evaluator`.
vd_logger.attach_output_handler(
    train_evaluator,
    event_name=Events.EPOCH_COMPLETED,
    tag="training",
    metric_names=["nll", "accuracy"],
    global_step_transform=global_step_from_engine(trainer),
)
# Attach the logger to the evaluator on the validation dataset and log NLL, Accuracy metrics after
# each epoch. We setup `global_step_transform=global_step_from_engine(trainer)` to take the epoch of the
# `trainer` instead of `evaluator`.
vd_logger.attach_output_handler(
    evaluator,
    event_name=Events.EPOCH_COMPLETED,
    tag="validation",
    metric_names=["nll", "accuracy"],
    global_step_transform=global_step_from_engine(trainer),
)
# Attach the logger to the trainer to log optimizer's parameters, e.g. learning rate at each iteration
vd_logger.attach_opt_params_handler(
    trainer,
    event_name=Events.ITERATION_STARTED,
    optimizer=optimizer,
    param_name='lr'  # optional
)
# Attach the logger to the trainer to log model's weights norm after each iteration
vd_logger.attach(
    trainer,
    event_name=Events.ITERATION_COMPLETED,
    log_handler=WeightsScalarHandler(model)
)
# Attach the logger to the trainer to log model's gradients norm after each iteration
vd_logger.attach(
    trainer,
    event_name=Events.ITERATION_COMPLETED,
    log_handler=GradsScalarHandler(model)
)
# We need to close the logger when we are done
vd_logger.close()

It is also possible to use the logger as context manager:

from ignite.engine import Engine, Events
from ignite.contrib.handlers.visdom_logger import *
with VisdomLogger() as vd_logger:
    trainer = Engine(update_fn)
    # Attach the logger to the trainer to log training loss at each iteration
    vd_logger.attach_output_handler(
        trainer,
        event_name=Events.ITERATION_COMPLETED,
        tag="training",
        output_transform=lambda loss: {"loss": loss}
    )

- attach(engine, log_handler, event_name)

Attach the logger to the engine and execute log_handler function at event_name events.

- Parameters

engine (Engine) – engine object.

log_handler (Callable) – a logging handler to execute

event_name (Union[str, Events, CallableEventWithFilter, EventsList]) – event to attach the logging handler to. Valid events are from Events or EventsList or any event_name added by register_events().

- Returns

RemovableEventHandle, which can be used to remove the handler.

- attach_opt_params_handler(engine, event_name, *args, **kwargs)

Shortcut method to attach OptimizerParamsHandler to the logger.

- Parameters

- Returns

RemovableEventHandle, which can be used to remove the handler.

Changed in version 0.4.3: Added missing return statement.

- attach_output_handler(engine, event_name, *args, **kwargs)

Shortcut method to attach OutputHandler to the logger.

- Parameters

- Returns

RemovableEventHandle, which can be used to remove the handler.

- class ignite.contrib.handlers.visdom_logger.WeightsScalarHandler(model, reduction=<function norm>, tag=None, show_legend=False)[source]

Helper handler to log model’s weights as scalars. Handler iterates over named parameters of the model, applies reduction function to each parameter to produce a scalar and then logs the scalar.

Examples

import torch
from ignite.engine import Events
from ignite.contrib.handlers.visdom_logger import *
# Create a logger
vd_logger = VisdomLogger()
# Attach the logger to the trainer to log model's weights norm after each iteration
vd_logger.attach(
    trainer,
    event_name=Events.ITERATION_COMPLETED,
    log_handler=WeightsScalarHandler(model, reduction=torch.norm)
)

- Parameters

- add_scalar(logger, k, v, event_name, global_step)

Helper method to log a scalar with VisdomLogger.

- Parameters

logger (VisdomLogger) – visdom logger

k (str) – scalar name which is used to set window title and y-axis label

event_name (Any) – Event name which is used to setup x-axis label. Valid events are from Events or any event_name added by register_events().

global_step (int) – global step, x-axis value

- Return type

None

- ignite.contrib.handlers.visdom_logger.global_step_from_engine(engine, custom_event_name=None)[source]

Helper method to setup global_step_transform function using another engine. This can be helpful for logging trainer epoch/iteration while output handler is attached to an evaluator.

neptune_logger

See neptune mnist example for detailed usage.

NeptuneLogger – Neptune handler to log metrics, model/optimizer parameters, gradients during the training and validation.

NeptuneSaver – Handler that saves input checkpoint to the Neptune server.

OptimizerParamsHandler – Helper handler to log optimizer parameters

OutputHandler – Helper handler to log engine's output and/or metrics

WeightsScalarHandler – Helper handler to log model's weights as scalars.

GradsScalarHandler – Helper handler to log model's gradients as scalars.

global_step_from_engine – Helper method to setup global_step_transform function using another engine.

- class ignite.contrib.handlers.neptune_logger.GradsScalarHandler(model, reduction=<function norm>, tag=None)[source]

Helper handler to log model’s gradients as scalars. Handler iterates over the gradients of named parameters of the model, applies reduction function to each parameter to produce a scalar and then logs the scalar.

Examples

import torch
from ignite.engine import Events
from ignite.contrib.handlers.neptune_logger import *
# Create a logger
# We are using the api_token for the anonymous user neptuner but you can use your own.
npt_logger = NeptuneLogger(
    api_token="ANONYMOUS",
    project_name="shared/pytorch-ignite-integration",
    experiment_name="cnn-mnist",  # Optional,
    params={"max_epochs": 10},  # Optional,
    tags=["pytorch-ignite", "minst"]  # Optional
)
# Attach the logger to the trainer to log model's gradients norm after each iteration
npt_logger.attach(
    trainer,
    event_name=Events.ITERATION_COMPLETED,
    log_handler=GradsScalarHandler(model, reduction=torch.norm)
)

- class ignite.contrib.handlers.neptune_logger.NeptuneLogger(*args, **kwargs)[source]

Neptune handler to log metrics, model/optimizer parameters, gradients during the training and validation. It can also log model checkpoints to Neptune server.

pip install neptune-client

- Parameters

api_token – Required in online mode. Neptune API token, found on https://neptune.ai. Read how to get your API key at https://docs.neptune.ai/python-api/tutorials/get-started.html#copy-api-token.

project_name – Required in online mode. Qualified name of a project in a form of “namespace/project_name” for example “tom/minst-classification”. If None, the value of NEPTUNE_PROJECT environment variable will be taken. You need to create the project in https://neptune.ai first.

offline_mode – Optional default False. If offline_mode=True no logs will be sent to neptune. Usually used for debug purposes.

experiment_name – Optional. Editable name of the experiment. Name is displayed in the experiment’s Details (Metadata section) and in experiments view as a column.

upload_source_files – Optional. List of source files to be uploaded. Must be list of str or single str. Uploaded sources are displayed in the experiment’s Source code tab. If None is passed, Python file from which experiment was created will be uploaded. Pass empty list ([]) to upload no files. Unix style pathname pattern expansion is supported. For example, you can pass *.py to upload all python source files from the current directory. For recursion lookup use **/*.py (for Python 3.5 and later). For more information see glob library.

params – Optional. Parameters of the experiment. After experiment creation params are read-only. Parameters are displayed in the experiment’s Parameters section and each key-value pair can be viewed in experiments view as a column.

properties – Optional default is {}. Properties of the experiment. They are editable after experiment is created. Properties are displayed in the experiment’s Details and each key-value pair can be viewed in experiments view as a column.

tags – Optional default []. Must be list of str. Tags of the experiment. Tags are displayed in the experiment’s Details and can be viewed in experiments view as a column.

args (Any) –

kwargs (Any) –
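The pathname patterns mentioned for upload_source_files follow the conventions of Python's glob library. The sketch below shows only how the standard library expands `*.py` and `**/*.py`; how Neptune itself resolves the patterns is not shown, and the temporary project tree is made up for the illustration.

```python
import glob
import os
import tempfile

with tempfile.TemporaryDirectory() as root:
    # Build a tiny hypothetical project tree: two top-level files and one nested file.
    os.makedirs(os.path.join(root, "pkg"))
    for relative in ("train.py", "notes.txt", os.path.join("pkg", "model.py")):
        with open(os.path.join(root, relative), "w") as handle:
            handle.write("")

    # "*.py" matches Python files in the given directory only.
    top_level = sorted(
        os.path.relpath(p, root) for p in glob.glob(os.path.join(root, "*.py"))
    )
    # "**/*.py" with recursive=True also descends into subdirectories.
    recursive = sorted(
        os.path.relpath(p, root)
        for p in glob.glob(os.path.join(root, "**", "*.py"), recursive=True)
    )

print(top_level)
print(recursive)
```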

Examples

from ignite.engine import Events
from ignite.contrib.handlers.neptune_logger import *
# Create a logger
# We are using the api_token for the anonymous user neptuner but you can use your own.
npt_logger = NeptuneLogger(
    api_token="ANONYMOUS",
    project_name="shared/pytorch-ignite-integration",
    experiment_name="cnn-mnist",  # Optional,
    params={"max_epochs": 10},  # Optional,
    tags=["pytorch-ignite", "minst"]  # Optional
)
# Attach the logger to the trainer to log training loss at each iteration
npt_logger.attach_output_handler(
    trainer,
    event_name=Events.ITERATION_COMPLETED,
    tag="training",
    output_transform=lambda loss: {'loss': loss}
)
# Attach the logger to the evaluator on the training dataset and log NLL, Accuracy metrics after each epoch
# We setup `global_step_transform=global_step_from_engine(trainer)` to take the epoch
# of the `trainer` instead of `train_evaluator`.
npt_logger.attach_output_handler(
    train_evaluator,
    event_name=Events.EPOCH_COMPLETED,
    tag="training",
    metric_names=["nll", "accuracy"],
    global_step_transform=global_step_from_engine(trainer),
)
# Attach the logger to the evaluator on the validation dataset and log NLL, Accuracy metrics after
# each epoch. We setup `global_step_transform=global_step_from_engine(trainer)` to take the epoch of the
# `trainer` instead of `evaluator`.
npt_logger.attach_output_handler(
    evaluator,
    event_name=Events.EPOCH_COMPLETED,
    tag="validation",
    metric_names=["nll", "accuracy"],
    global_step_transform=global_step_from_engine(trainer),
)
# Attach the logger to the trainer to log optimizer's parameters, e.g. learning rate at each iteration
npt_logger.attach_opt_params_handler(
    trainer,
    event_name=Events.ITERATION_STARTED,
    optimizer=optimizer,
    param_name='lr'  # optional
)
# Attach the logger to the trainer to log model's weights norm after each iteration
npt_logger.attach(
    trainer,
    event_name=Events.ITERATION_COMPLETED,
    log_handler=WeightsScalarHandler(model)
)

Explore an experiment with neptune tracking here: https://ui.neptune.ai/o/shared/org/pytorch-ignite-integration/e/PYTOR1-18/charts

You can save model checkpoints to a Neptune server:

from ignite.engine import Events
from ignite.handlers import Checkpoint
def score_function(engine):
    return engine.state.metrics["accuracy"]
to_save = {"model": model}
handler = Checkpoint(
    to_save,
    NeptuneSaver(npt_logger),
    n_saved=2,
    filename_prefix="best",
    score_function=score_function,
    score_name="validation_accuracy",
    global_step_transform=global_step_from_engine(trainer)
)
validation_evaluator.add_event_handler(Events.COMPLETED, handler)
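The effect of combining score_function with n_saved=2, namely that only the two best-scoring checkpoints are retained, can be illustrated with a small library-free sketch. `keep_best` is a hypothetical helper and not the Checkpoint implementation, and the accuracy values are made up.

```python
def keep_best(scored_checkpoints, n_saved):
    """Hypothetical helper: retain the `n_saved` highest-scoring checkpoints."""
    kept = []
    for name, score in scored_checkpoints:
        kept.append((score, name))
        # Sort best-first and drop whatever falls outside the quota.
        kept.sort(reverse=True)
        del kept[n_saved:]
    return [name for _, name in kept]


# Validation accuracy after each of four evaluation runs (made-up numbers).
history = [("epoch_1", 0.81), ("epoch_2", 0.86), ("epoch_3", 0.84), ("epoch_4", 0.90)]
best = keep_best(history, n_saved=2)
print(best)
```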

It is also possible to use the logger as context manager:

from ignite.engine import Engine, Events
from ignite.contrib.handlers.neptune_logger import *
# We are using the api_token for the anonymous user neptuner but you can use your own.
with NeptuneLogger(
    api_token="ANONYMOUS",
    project_name="shared/pytorch-ignite-integration",
    experiment_name="cnn-mnist",  # Optional,
    params={"max_epochs": 10},  # Optional,
    tags=["pytorch-ignite", "minst"]  # Optional
) as npt_logger:
    trainer = Engine(update_fn)
    # Attach the logger to the trainer to log training loss at each iteration
    npt_logger.attach_output_handler(
        trainer,
        event_name=Events.ITERATION_COMPLETED,
        tag="training",
        output_transform=lambda loss: {"loss": loss}
    )

- attach(engine, log_handler, event_name)

Attach the logger to the engine and execute log_handler function at event_name events.

- Parameters

engine (Engine) – engine object.

log_handler (Callable) – a logging handler to execute

event_name (Union[str, Events, CallableEventWithFilter, EventsList]) – event to attach the logging handler to. Valid events are from Events or EventsList or any event_name added by register_events().

- Returns

RemovableEventHandle, which can be used to remove the handler.

- attach_opt_params_handler(engine, event_name, *args, **kwargs)

Shortcut method to attach OptimizerParamsHandler to the logger.

- Parameters

- Returns

RemovableEventHandle, which can be used to remove the handler.

Changed in version 0.4.3: Added missing return statement.

- attach_output_handler(engine, event_name, *args, **kwargs)

Shortcut method to attach OutputHandler to the logger.

- Parameters

- Returns

RemovableEventHandle, which can be used to remove the handler.

- class ignite.contrib.handlers.neptune_logger.NeptuneSaver(neptune_logger)[source]

Handler that saves input checkpoint to the Neptune server.

- Parameters

neptune_logger (NeptuneLogger) – an instance of NeptuneLogger class.

Examples

from ignite.engine import Events
from ignite.contrib.handlers.neptune_logger import *
# Create a logger
# We are using the api_token for the anonymous user neptuner but you can use your own.
npt_logger = NeptuneLogger(
    api_token="ANONYMOUS",
    project_name="shared/pytorch-ignite-integration",
    experiment_name="cnn-mnist",  # Optional,
    params={"max_epochs": 10},  # Optional,
    tags=["pytorch-ignite", "minst"]  # Optional
)
...
evaluator = create_supervised_evaluator(model, metrics=metrics, ...)
...
from ignite.handlers import Checkpoint
def score_function(engine):
    return engine.state.metrics["accuracy"]
to_save = {"model": model}
# pass neptune logger to NeptuneSaver
handler = Checkpoint(
    to_save,
    NeptuneSaver(npt_logger),
    n_saved=2,
    filename_prefix="best",
    score_function=score_function,
    score_name="validation_accuracy",
    global_step_transform=global_step_from_engine(trainer)
)
evaluator.add_event_handler(Events.COMPLETED, handler)
# We need to close the logger when we are done
npt_logger.close()

For example, you can access model checkpoints and download them from here: https://ui.neptune.ai/o/shared/org/pytorch-ignite-integration/e/PYTOR1-18/charts

- class ignite.contrib.handlers.neptune_logger.OptimizerParamsHandler(optimizer, param_name='lr', tag=None)[source]

Helper handler to log optimizer parameters

Examples

from ignite.engine import Events
from ignite.contrib.handlers.neptune_logger import *
# Create a logger
# We are using the api_token for the anonymous user neptuner but you can use your own.
npt_logger = NeptuneLogger(
    api_token="ANONYMOUS",
    project_name="shared/pytorch-ignite-integration",
    experiment_name="cnn-mnist",  # Optional,
    params={"max_epochs": 10},  # Optional,
    tags=["pytorch-ignite", "minst"]  # Optional
)
# Attach the logger to the trainer to log optimizer's parameters, e.g. learning rate at each iteration
npt_logger.attach(
    trainer,
    log_handler=OptimizerParamsHandler(optimizer),
    event_name=Events.ITERATION_STARTED
)
# or equivalently
npt_logger.attach_opt_params_handler(
    trainer,
    event_name=Events.ITERATION_STARTED,
    optimizer=optimizer
)

- class ignite.contrib.handlers.neptune_logger.OutputHandler(tag, metric_names=None, output_transform=None, global_step_transform=None)[source]

Helper handler to log engine’s output and/or metrics

Examples

from ignite.engine import Events
from ignite.contrib.handlers.neptune_logger import *
# Create a logger
# We are using the api_token for the anonymous user neptuner but you can use your own.
npt_logger = NeptuneLogger(
    api_token="ANONYMOUS",
    project_name="shared/pytorch-ignite-integration",
    experiment_name="cnn-mnist",  # Optional,
    params={"max_epochs": 10},  # Optional,
    tags=["pytorch-ignite", "minst"]  # Optional
)
# Attach the logger to the evaluator on the validation dataset and log NLL, Accuracy metrics after
# each epoch. We setup `global_step_transform=global_step_from_engine(trainer)` to take the epoch
# of the `trainer`:
npt_logger.attach(
    evaluator,
    log_handler=OutputHandler(
        tag="validation",
        metric_names=["nll", "accuracy"],
        global_step_transform=global_step_from_engine(trainer)
    ),
    event_name=Events.EPOCH_COMPLETED
)
# or equivalently
npt_logger.attach_output_handler(
    evaluator,
    event_name=Events.EPOCH_COMPLETED,
    tag="validation",
    metric_names=["nll", "accuracy"],
    global_step_transform=global_step_from_engine(trainer)
)

Another example, where model is evaluated every 500 iterations:

from ignite.engine import Events
from ignite.contrib.handlers.neptune_logger import *
@trainer.on(Events.ITERATION_COMPLETED(every=500))
def evaluate(engine):
    evaluator.run(validation_set, max_epochs=1)
# We are using the api_token for the anonymous user neptuner but you can use your own.
npt_logger = NeptuneLogger(
    api_token="ANONYMOUS",
    project_name="shared/pytorch-ignite-integration",
    experiment_name="cnn-mnist",  # Optional,
    params={"max_epochs": 10},  # Optional,
    tags=["pytorch-ignite", "minst"]  # Optional
)
def global_step_transform(*args, **kwargs):
    return trainer.state.iteration
# Attach the logger to the evaluator on the validation dataset and log NLL, Accuracy metrics after
# every 500 iterations. Since evaluator engine does not have access to the training iteration, we
# provide a global_step_transform to return the trainer.state.iteration for the global_step, each time
# evaluator metrics are plotted on NeptuneML.
npt_logger.attach_output_handler(
    evaluator,
    event_name=Events.EPOCH_COMPLETED,
    tag="validation",
    metric_names=["nll", "accuracy"],
    global_step_transform=global_step_transform
)

- Parameters

tag (str) – common title for all produced plots. For example, “training”

metric_names (Optional[Union[str, List[str]]]) – list of metric names to plot or a string “all” to plot all available metrics.

output_transform (Optional[Callable]) – output transform function to prepare engine.state.output as a number. For example, output_transform = lambda output: output This function can also return a dictionary, e.g {“loss”: loss1, “another_loss”: loss2} to label the plot with corresponding keys.

global_step_transform (Optional[Callable]) – global step transform function to output a desired global step. Input of the function is (engine, event_name). Output of function should be an integer. Default is None, global_step based on attached engine. If provided, uses function output as global_step. To setup global step from another engine, please use global_step_from_engine().

Note

Example of global_step_transform:

def global_step_transform(engine, event_name):
    return engine.state.get_event_attrib_value(event_name)

- class ignite.contrib.handlers.neptune_logger.WeightsScalarHandler(model, reduction=<function norm>, tag=None)[source]

Helper handler to log model’s weights as scalars. Handler iterates over named parameters of the model, applies reduction function to each parameter to produce a scalar and then logs the scalar.

Examples

import torch
from ignite.engine import Events
from ignite.contrib.handlers.neptune_logger import *
# Create a logger
# We are using the api_token for the anonymous user neptuner but you can use your own.
npt_logger = NeptuneLogger(
    api_token="ANONYMOUS",
    project_name="shared/pytorch-ignite-integration",
    experiment_name="cnn-mnist",  # Optional,
    params={"max_epochs": 10},  # Optional,
    tags=["pytorch-ignite", "minst"]  # Optional
)
# Attach the logger to the trainer to log model's weights norm after each iteration
npt_logger.attach(
    trainer,
    event_name=Events.ITERATION_COMPLETED,
    log_handler=WeightsScalarHandler(model, reduction=torch.norm)
)

- ignite.contrib.handlers.neptune_logger.global_step_from_engine(engine, custom_event_name=None)[source]

Helper method to setup global_step_transform function using another engine. This can be helpful for logging trainer epoch/iteration while output handler is attached to an evaluator.
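One way to picture such a helper is as a closure that captures the source engine and ignores the engine it is later called with. The sketch below is not the library's implementation: make_global_step_transform is a hypothetical name, the engines are SimpleNamespace stand-ins, and the counter is read as a plain attribute instead of through the engine's event-attribute lookup.

```python
from types import SimpleNamespace


def make_global_step_transform(source_engine, attribute="epoch"):
    """Return a transform that reports a counter taken from `source_engine`."""

    def transform(engine, event_name):
        # `engine` is the engine the handler is attached to (e.g. an evaluator);
        # it is deliberately ignored in favour of the captured source engine.
        return getattr(source_engine.state, attribute)

    return transform


# Stand-ins for real engines: only the state counters matter here.
trainer = SimpleNamespace(state=SimpleNamespace(epoch=3, iteration=1500))
evaluator = SimpleNamespace(state=SimpleNamespace(epoch=1, iteration=20))

step_fn = make_global_step_transform(trainer)
step = step_fn(evaluator, "epoch_completed")
```

Here step reflects the trainer's epoch even though the transform was called with the evaluator, which is the behaviour the helper is meant to provide.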

mlflow_logger

MLflowLogger – MLflow tracking client handler to log parameters and metrics during the training and validation.

OutputHandler – Helper handler to log engine's output and/or metrics.

OptimizerParamsHandler – Helper handler to log optimizer parameters.

global_step_from_engine – Helper method to setup global_step_transform function using another engine.

- class ignite.contrib.handlers.mlflow_logger.MLflowLogger(tracking_uri=None)[source]

MLflow tracking client handler to log parameters and metrics during the training and validation.

This class requires mlflow package to be installed:

pip install mlflow

Examples

import torch
import ignite
from ignite.engine import Events
from ignite.contrib.handlers.mlflow_logger import *

# Create a logger
mlflow_logger = MLflowLogger()

# Log experiment parameters:
mlflow_logger.log_params({
    "seed": seed,
    "batch_size": batch_size,
    "model": model.__class__.__name__,
    "pytorch version": torch.__version__,
    "ignite version": ignite.__version__,
    "cuda version": torch.version.cuda,
    "device name": torch.cuda.get_device_name(0)
})

# Attach the logger to the trainer to log training loss at each iteration
mlflow_logger.attach_output_handler(
    trainer,
    event_name=Events.ITERATION_COMPLETED,
    tag="training",
    output_transform=lambda loss: {'loss': loss}
)

# Attach the logger to the evaluator on the training dataset and log NLL, Accuracy metrics after each epoch
# We setup `global_step_transform=global_step_from_engine(trainer)` to take the epoch
# of the `trainer` instead of `train_evaluator`.
mlflow_logger.attach_output_handler(
    train_evaluator,
    event_name=Events.EPOCH_COMPLETED,
    tag="training",
    metric_names=["nll", "accuracy"],
    global_step_transform=global_step_from_engine(trainer),
)

# Attach the logger to the evaluator on the validation dataset and log NLL, Accuracy metrics after
# each epoch. We setup `global_step_transform=global_step_from_engine(trainer)` to take the epoch of the
# `trainer` instead of `evaluator`.
mlflow_logger.attach_output_handler(
    evaluator,
    event_name=Events.EPOCH_COMPLETED,
    tag="validation",
    metric_names=["nll", "accuracy"],
    global_step_transform=global_step_from_engine(trainer),
)

# Attach the logger to the trainer to log optimizer's parameters, e.g. learning rate at each iteration
mlflow_logger.attach_opt_params_handler(
    trainer,
    event_name=Events.ITERATION_STARTED,
    optimizer=optimizer,
    param_name='lr'  # optional
)

- attach(engine, log_handler, event_name)

Attach the logger to the engine and execute log_handler function at event_name events.

- Parameters

engine (Engine) – engine object.

log_handler (Callable) – a logging handler to execute

event_name (Union[str, Events, CallableEventWithFilter, EventsList]) – event to attach the logging handler to. Valid events are from Events or EventsList or any event_name added by register_events().

- Returns

RemovableEventHandle, which can be used to remove the handler.

- Return type

RemovableEventHandle
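The attach-then-remove lifecycle can be illustrated with a toy model. TinyEngine, TinyLogger and Handle below are hypothetical stand-ins written for this sketch, not ignite classes, and the (engine, logger, event_name) handler signature is an assumption made for illustration.

```python
class Handle:
    """Hypothetical stand-in for a removable event handle."""

    def __init__(self, handlers, entry):
        self._handlers = handlers
        self._entry = entry

    def remove(self):
        # Detach the handler if it is still registered.
        if self._entry in self._handlers:
            self._handlers.remove(self._entry)


class TinyEngine:
    """Toy engine that stores handlers and fires them by event name."""

    def __init__(self):
        self._handlers = []

    def add_event_handler(self, event_name, handler, *args):
        entry = (event_name, handler, args)
        self._handlers.append(entry)
        return Handle(self._handlers, entry)

    def fire(self, event_name):
        for name, handler, args in list(self._handlers):
            if name == event_name:
                handler(self, *args)


class TinyLogger:
    def attach(self, engine, log_handler, event_name):
        # Pass the logger and the event name through to the handler.
        return engine.add_event_handler(event_name, log_handler, self, event_name)


calls = []


def log_handler(engine, logger, event_name):
    calls.append(event_name)


engine = TinyEngine()
handle = TinyLogger().attach(engine, log_handler, "epoch_completed")
engine.fire("epoch_completed")  # handler runs once
handle.remove()
engine.fire("epoch_completed")  # handler is no longer attached
```

Keeping the returned handle is what makes it possible to stop logging part-way through a run without rebuilding the engine.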

- attach_opt_params_handler(engine, event_name, *args, **kwargs)

Shortcut method to attach OptimizerParamsHandler to the logger.

- Parameters

- Returns

RemovableEventHandle, which can be used to remove the handler.

- Return type

RemovableEventHandle

Changed in version 0.4.3: Added missing return statement.

- attach_output_handler(engine, event_name, *args, **kwargs)

Shortcut method to attach OutputHandler to the logger.

- Parameters

- Returns

RemovableEventHandle, which can be used to remove the handler.

- Return type

RemovableEventHandle

- class ignite.contrib.handlers.mlflow_logger.OptimizerParamsHandler(optimizer, param_name='lr', tag=None)[source]

Helper handler to log optimizer parameters

Examples

from ignite.engine import Events
from ignite.contrib.handlers.mlflow_logger import *

# Create a logger
mlflow_logger = MLflowLogger()
# Optionally, user can specify tracking_uri which corresponds to MLFLOW_TRACKING_URI
# mlflow_logger = MLflowLogger(tracking_uri="uri")

# Attach the logger to the trainer to log optimizer's parameters, e.g. learning rate at each iteration
mlflow_logger.attach(
    trainer,
    log_handler=OptimizerParamsHandler(optimizer),
    event_name=Events.ITERATION_STARTED
)
# or equivalently
mlflow_logger.attach_opt_params_handler(
    trainer,
    event_name=Events.ITERATION_STARTED,
    optimizer=optimizer
)

- class ignite.contrib.handlers.mlflow_logger.OutputHandler(tag, metric_names=None, output_transform=None, global_step_transform=None)[source]

Helper handler to log engine’s output and/or metrics.

Examples

from ignite.contrib.handlers.mlflow_logger import *

# Create a logger
mlflow_logger = MLflowLogger()

# Attach the logger to the evaluator on the validation dataset and log NLL, Accuracy metrics after
# each epoch. We setup `global_step_transform=global_step_from_engine(trainer)` to take the epoch
# of the `trainer`:
mlflow_logger.attach(
    evaluator,
    log_handler=OutputHandler(
        tag="validation",
        metric_names=["nll", "accuracy"],
        global_step_transform=global_step_from_engine(trainer)
    ),
    event_name=Events.EPOCH_COMPLETED
)
# or equivalently
mlflow_logger.attach_output_handler(
    evaluator,
    event_name=Events.EPOCH_COMPLETED,
    tag="validation",
    metric_names=["nll", "accuracy"],
    global_step_transform=global_step_from_engine(trainer)
)

Another example, where model is evaluated every 500 iterations:

from ignite.engine import Events
from ignite.contrib.handlers.mlflow_logger import *

@trainer.on(Events.ITERATION_COMPLETED(every=500))
def evaluate(engine):
    evaluator.run(validation_set, max_epochs=1)

mlflow_logger = MLflowLogger()

def global_step_transform(*args, **kwargs):
    return trainer.state.iteration

# Attach the logger to the evaluator on the validation dataset and log NLL, Accuracy metrics after
# every 500 iterations. Since evaluator engine does not have access to the training iteration, we
# provide a global_step_transform to return the trainer.state.iteration for the global_step, each time
# evaluator metrics are plotted on MLflow.
mlflow_logger.attach_output_handler(
    evaluator,
    event_name=Events.EPOCH_COMPLETED,
    tag="validation",
    metric_names=["nll", "accuracy"],
    global_step_transform=global_step_transform
)
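To see which global steps the evaluator metrics end up at in the example above, the periodic filter can be modelled in plain Python. This is a sketch of the idea only: fires is a hypothetical helper standing in for the every=500 filter, and the loop stands in for the trainer's iteration counter.

```python
def fires(iteration, every):
    """True when a periodic filter with period `every` lets the event through."""
    return iteration % every == 0


evaluated_at = []
for iteration in range(1, 2001):
    if fires(iteration, every=500):
        # In the example above, this is the point where evaluator.run(...) is
        # called and where global_step_transform reports the trainer iteration.
        evaluated_at.append(iteration)
```

With 2000 training iterations the validation metrics would therefore be logged at four global steps, one per evaluation run.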

- Parameters

tag (str) – common title for all produced plots. For example, ‘training’

metric_names (Optional[Union[str, List[str]]]) – list of metric names to plot or a string “all” to plot all available metrics.

output_transform (Optional[Callable]) – output transform function to prepare engine.state.output as a number. For example, output_transform = lambda output: output. This function can also return a dictionary, e.g. {‘loss’: loss1, ‘another_loss’: loss2}, to label the plot with corresponding keys.

global_step_transform (Optional[Callable]) – global step transform function to output a desired global step. Input of the function is (engine, event_name). Output of the function should be an integer. Default is None, in which case global_step is based on the attached engine. If provided, the function output is used as global_step. To set up the global step from another engine, use global_step_from_engine().

Note

Example of global_step_transform:

def global_step_transform(engine, event_name):
    return engine.state.get_event_attrib_value(event_name)
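The effect of returning a dictionary from output_transform, as opposed to a single number, can be sketched as follows. render_output is a hypothetical helper, and the "tag name" label format with the default name "output" is an assumption chosen for illustration, not necessarily the format the handler uses.

```python
import numbers


def render_output(tag, output):
    """Turn a transformed engine output into {label: value} pairs."""
    if isinstance(output, dict):
        # Each dictionary key becomes part of the label of its own series.
        return {f"{tag} {name}": value for name, value in output.items()}
    if isinstance(output, numbers.Number):
        # A bare number gets a default name (assumed here to be "output").
        return {f"{tag} output": output}
    raise TypeError(f"unsupported output type: {type(output).__name__}")


labelled = render_output("training", {"loss": 0.5, "another_loss": 0.25})
single = render_output("training", 0.5)
```

Returning a dictionary is the way to obtain one named series per loss term under the same tag.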

- ignite.contrib.handlers.mlflow_logger.global_step_from_engine(engine, custom_event_name=None)[source]

Helper method to setup global_step_transform function using another engine. This can be helpful for logging trainer epoch/iteration while output handler is attached to an evaluator.

tqdm_logger

See tqdm mnist example for detailed usage.

ProgressBar – TQDM progress bar handler to log training progress and computed metrics.

- class ignite.contrib.handlers.tqdm_logger.ProgressBar(persist=False, bar_format='{desc}[{n_fmt}/{total_fmt}] {percentage:3.0f}%|{bar}{postfix} [{elapsed}<{remaining}]', **tqdm_kwargs)[source]

TQDM progress bar handler to log training progress and computed metrics.

- Parameters

persist (bool) – set to True to persist the progress bar after completion (default = False)

bar_format (str) – Specify a custom bar string formatting. May impact performance. [default: ‘{desc}[{n_fmt}/{total_fmt}] {percentage:3.0f}%|{bar}{postfix} [{elapsed}<{remaining}]’]. Set to None to use tqdm default bar formatting: ‘{l_bar}{bar}{r_bar}’, where l_bar=’{desc}: {percentage:3.0f}%|’ and r_bar=’| {n_fmt}/{total_fmt} [{elapsed}<{remaining}, {rate_fmt}{postfix}]’. For more details on the formatting, see tqdm docs.

tqdm_kwargs (Any) – kwargs passed to tqdm progress bar. By default, progress bar description displays “Epoch [5/10]” where 5 is the current epoch and 10 is the number of epochs; however, if max_epochs is set to 1, the progress bar instead displays “Iteration: [5/10]”. If tqdm_kwargs defines desc, e.g. “Predictions”, then the description is “Predictions [5/10]” if the number of epochs is more than one; otherwise it is simply “Predictions”.
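The description rule for tqdm_kwargs can be written down as a small function. This is a sketch of the documented behaviour, not the library's code: build_description is a hypothetical name, and it assumes that in the single-epoch case the trailing counter is drawn by the bar itself, so only the word "Iteration" belongs to the description.

```python
def build_description(epoch, max_epochs, desc=None):
    """Compose the progress bar description from the epoch counters."""
    if desc is None:
        # Default label depends on whether the run has more than one epoch.
        desc = "Iteration" if max_epochs == 1 else "Epoch"
    if max_epochs > 1:
        # The epoch counter is only appended for multi-epoch runs.
        desc += f" [{epoch}/{max_epochs}]"
    return desc


default_multi = build_description(5, 10)
default_single = build_description(1, 1)
custom_multi = build_description(5, 10, desc="Predictions")
custom_single = build_description(1, 1, desc="Predictions")
```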

Examples

Simple progress bar

trainer = create_supervised_trainer(model, optimizer, loss)

pbar = ProgressBar()
pbar.attach(trainer)

# Progress bar will look like
# Epoch [2/50]: [64/128] 50%|█████ [06:17<12:34]

Log output to a file instead of stderr (tqdm’s default output)

trainer = create_supervised_trainer(model, optimizer, loss)

log_file = open("output.log", "w")
pbar = ProgressBar(file=log_file)
pbar.attach(trainer)

Attach metrics that already have been computed at ITERATION_COMPLETED (such as RunningAverage)

trainer = create_supervised_trainer(model, optimizer, loss)

RunningAverage(output_transform=lambda x: x).attach(trainer, 'loss')

pbar = ProgressBar()
pbar.attach(trainer, ['loss'])

# Progress bar will look like
# Epoch [2/50]: [64/128] 50%|█████ , loss=0.123 [06:17<12:34]

Directly attach the engine’s output

trainer = create_supervised_trainer(model, optimizer, loss)

pbar = ProgressBar()
pbar.attach(trainer, output_transform=lambda x: {'loss': x})

# Progress bar will look like
# Epoch [2/50]: [64/128] 50%|█████ , loss=0.123 [06:17<12:34]

Note

When attaching the progress bar to an engine, it is recommended that you replace every print operation in the engine’s handlers triggered every iteration with pbar.log_message to guarantee the correct format of the stdout.

Note

When used inside a Jupyter notebook, ProgressBar automatically uses tqdm_notebook. For correct rendering, please install ipywidgets. Due to tqdm notebook bugs, the bar format may need to be set to an empty string value.

- attach(engine, metric_names=None, output_transform=None, event_name=Events.ITERATION_COMPLETED, closing_event_name=Events.EPOCH_COMPLETED)[source]

Attaches the progress bar to an engine object.

- Parameters

engine (Engine) – engine object.

metric_names (Optional[str]) – list of metric names to plot or a string “all” to plot all available metrics.

output_transform (Optional[Callable]) – a function to select what you want to print from the engine’s output. This function may return either a dictionary with entries in the format of {name: value}, or a single scalar, which will be displayed with the default name output.

event_name (Union[Events, CallableEventWithFilter]) – event’s name on which the progress bar advances. Valid events are from Events.

closing_event_name (Union[Events, CallableEventWithFilter]) – event’s name on which the progress bar is closed. Valid events are from Events.

- Return type

None

Note

Accepted output value types are numbers, 0d and 1d torch tensors and strings.
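How the accepted value types could map onto progress bar entries is sketched below. It makes several assumptions for illustration: to_postfix is a hypothetical helper, plain lists stand in for 1d tensors, and expanding a 1d value into name_0, name_1, ... entries is an assumed convention rather than confirmed library behaviour.

```python
def to_postfix(output):
    """Normalise an output_transform result into {name: value} bar entries."""
    if not isinstance(output, dict):
        # A single value is displayed under the default name "output".
        output = {"output": output}
    postfix = {}
    for name, value in output.items():
        if isinstance(value, (list, tuple)):  # stand-in for a 1d tensor
            for i, item in enumerate(value):
                postfix[f"{name}_{i}"] = item
        elif isinstance(value, (int, float, str)):
            postfix[name] = value
        else:
            raise TypeError(f"unsupported value type for {name!r}")
    return postfix


scalar_entries = to_postfix(0.123)
dict_entries = to_postfix({"loss": 0.5, "lrs": [0.1, 0.01], "phase": "warmup"})
```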

- attach_opt_params_handler(engine, event_name, *args, **kwargs)[source]

Intentionally empty

polyaxon_logger

PolyaxonLogger – Polyaxon tracking client handler to log parameters and metrics during the training and validation.

OutputHandler – Helper handler to log engine's output and/or metrics.

OptimizerParamsHandler – Helper handler to log optimizer parameters.

global_step_from_engine – Helper method to setup global_step_transform function using another engine.

- class ignite.contrib.handlers.polyaxon_logger.OptimizerParamsHandler(optimizer, param_name='lr', tag=None)[source]

Helper handler to log optimizer parameters

Examples